
Google, eBay, and others have the ability to find “similar” images. Have you ever wondered how this works? This capability transcends what’s possible with ordinary keyword search and instead uses semantic search to return similar or related images. This blog will cover a brief history of semantic search, its use of vectors, and how it differs from keyword search.

Developing Understanding with Semantic Search

Traditional text search embodies a fundamental limitation: exact matching. All it can do is check, at scale, whether a query matches some text. Higher-end engines skate around this problem with additional tricks like lemmatization and stemming, for example treating “send”, “sent”, and “sending” as equivalent. But when a query expresses a concept with a different word than the corpus (the set of documents to be searched), queries fail and users get frustrated. To put it another way, the search engine has no understanding of the corpus.

Our brains just don’t work like search engines. We think in concepts and ideas. Over a lifetime we gradually assemble a mental model of the world, all the while constructing an internal landscape of thoughts, facts, notions, abstractions, and a web of connections among them. Since related concepts live “nearby” in this landscape, it’s effortless to recall something with a different-but-related word that still maps to the same concept.

While artificial intelligence research remains far from replicating human intelligence, it has produced useful insights that make it possible to perform search at a higher, or semantic, level, matching concepts instead of keywords. Vectors, and vector search, are at the heart of this revolution.

From Keywords to Vectors

A typical data structure for text search is a reverse index (more commonly called an inverted index), which works much like the index at the back of a printed book. For each relevant keyword, the index keeps a list of occurrences in particular documents from the corpus. Resolving a query then involves manipulating these lists to compute a ranked list of matching documents.
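As a rough illustration of the idea, here is a minimal sketch of such an index in Python. The three-document corpus and the `build_index` and `search` helpers are made up for this example, and the ranking (a simple count of matching keywords) is an assumption, far simpler than what production engines do.

```python
from collections import defaultdict

# Hypothetical three-document corpus, invented for illustration.
corpus = {
    1: "the car would not start",
    2: "start the engine of the car",
    3: "my automobile needs a new battery",
}

def build_index(docs):
    """Map each keyword to the set of document ids that contain it."""
    index = defaultdict(set)
    for doc_id, text in docs.items():
        for word in text.lower().split():
            index[word].add(doc_id)
    return index

def search(index, query):
    """Rank documents by how many of the query's keywords they contain."""
    scores = defaultdict(int)
    for word in query.lower().split():
        for doc_id in index.get(word, ()):
            scores[doc_id] += 1
    # Highest score first; ties are broken by document id for stable output.
    return sorted(scores, key=lambda d: (-scores[d], d))

index = build_index(corpus)
car_hits = search(index, "car start")    # documents 1 and 2
vehicle_hits = search(index, "vehicle")  # no exact match anywhere
```

Document 3 is about a car, yet neither query finds it, because it never uses a matching keyword. That is the exact-matching limitation described earlier.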

In contrast, vector search uses a radically different way of representing items: vectors. Notice that the preceding sentence changed from talking about text to a more generic term, items. We’ll get back to that momentarily.

What’s a vector? Simply a list or array of numbers (think java.util.Vector, for example), but with emphasis on its mathematical properties. Among the useful properties of vectors, also known as embeddings, is that they form a space where semantically similar items are close to each other.

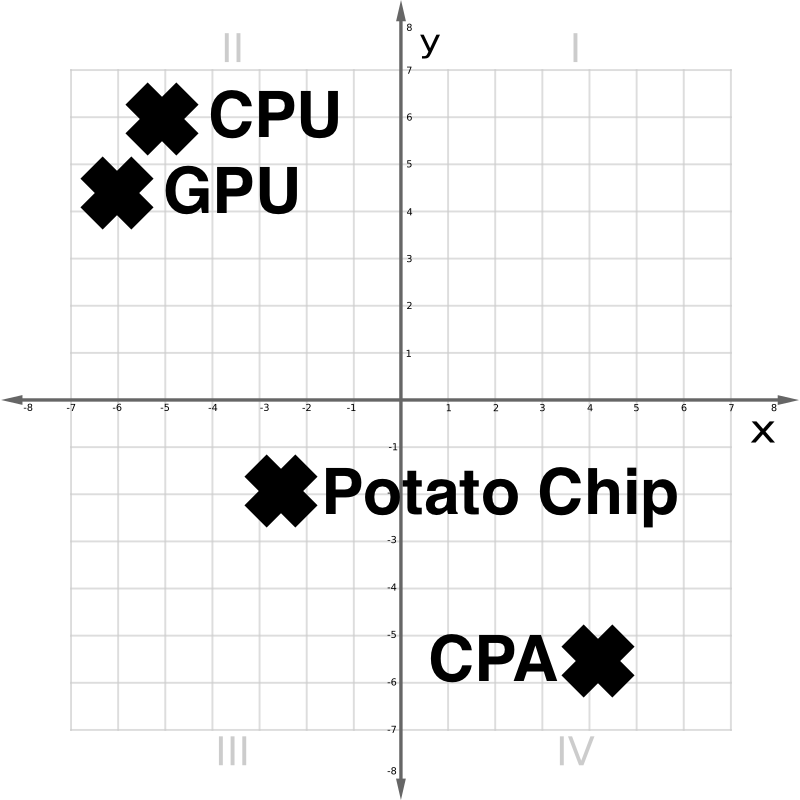

In the vector space in Figure 1 above, we see that a CPU and a GPU are conceptually close. A Potato Chip is distantly related. A CPA, or accountant, though lexically similar to a CPU, is quite different.
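To make “close” concrete, the sketch below compares a few vectors by cosine similarity, one common way to measure closeness. The three-dimensional values are invented for illustration (real embeddings typically have hundreds or thousands of dimensions), and the choice of cosine similarity is an assumption rather than something Figure 1 specifies.

```python
import math

# Made-up 3-D vectors standing in for real embeddings.
vectors = {
    "CPU": [0.9, 0.8, 0.1],
    "GPU": [0.8, 0.9, 0.2],
    "Potato Chip": [0.3, 0.1, 0.9],
    "CPA": [-0.7, 0.2, -0.4],
}

def cosine_similarity(a, b):
    """Cosine of the angle between a and b: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Similarity of every other item to "CPU", most similar first.
similarity_to_cpu = {
    name: cosine_similarity(vectors["CPU"], vec)
    for name, vec in vectors.items()
    if name != "CPU"
}
ranked = sorted(similarity_to_cpu, key=similarity_to_cpu.get, reverse=True)
```

With these toy numbers, `ranked` puts GPU first, Potato Chip second, and CPA last, even though “CPA” is the closest string to “CPU”.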

The full story of vectors requires a brief trip through a land of neural networks, embeddings, and thousands of dimensions.

Neural Networks and Embeddings

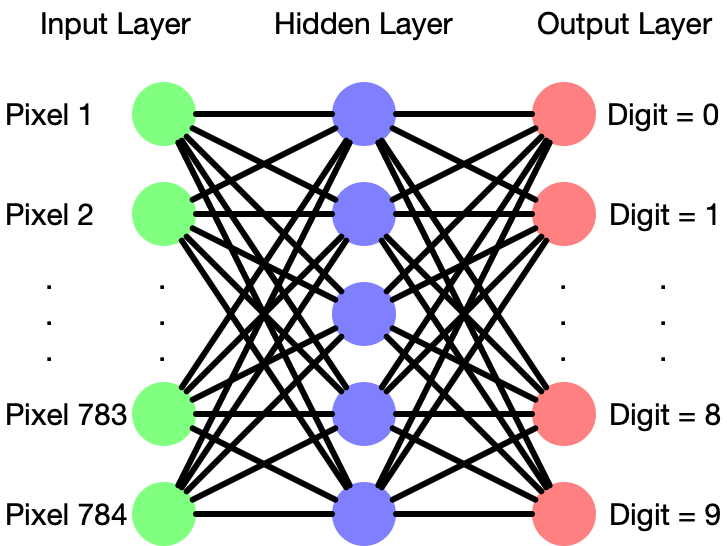

Articles abound describing the theory and operation of neural networks, which are loosely modeled on how biological neurons interconnect. This section will give a quick refresher. Schematically, a neural net looks like Figure 2.

A neural network consists of layers of ‘neurons’, each of which accepts multiple inputs with weights, either additive or multiplicative, which it combines into an output signal. The configuration of layers in a neural network varies quite a bit between different applications, and crafting just the right “hyperparameters” for a neural net requires a skilled hand.

One rite of passage for machine learning students is to build a neural net to recognize handwritten digits from a dataset called MNIST, which has labeled images of handwritten digits, each 28×28 pixels. In this case, the leftmost layer would need 28×28=784 neurons, one receiving a brightness signal from each pixel. A middle “hidden layer” has a dense web of connections to the first layer. Usually neural nets have many hidden layers, but here there’s only one. In the MNIST example, the output layer would have 10 neurons, representing what the network “sees,” namely probabilities of digits 0-9.
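The shape of that network can be sketched in a few lines of plain Python. The 784 inputs and 10 outputs come from the description above; the hidden size of 16, the ReLU activation, the softmax output, and the omission of bias terms are all assumptions made to keep the sketch short. The weights are random, so the output probabilities are meaningless until the network is trained.

```python
import math
import random

random.seed(0)

# 784 inputs and 10 outputs as in the text; a hidden size of 16 is assumed.
N_INPUT, N_HIDDEN, N_OUTPUT = 28 * 28, 16, 10

def random_matrix(rows, cols):
    return [[random.gauss(0.0, 0.1) for _ in range(cols)] for _ in range(rows)]

W1 = random_matrix(N_HIDDEN, N_INPUT)   # one row of input weights per hidden neuron
W2 = random_matrix(N_OUTPUT, N_HIDDEN)  # one row of hidden weights per output neuron

def layer(weights, inputs):
    """Weighted sum of the inputs for each neuron in the layer."""
    return [sum(w * x for w, x in zip(row, inputs)) for row in weights]

def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(scores)  # subtracting the max avoids overflow in exp
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def forward(pixels):
    hidden = [max(0.0, h) for h in layer(W1, pixels)]  # ReLU activation
    return softmax(layer(W2, hidden))

# Random brightness values standing in for one 28x28 digit image.
pixels = [random.random() for _ in range(N_INPUT)]
probabilities = forward(pixels)  # ten values, one per digit 0-9
```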

Initially, the network is essentially random. Training the network involves repeatedly tweaking the weights to be a tiny bit more accurate. For example, a crisp image of an “8” should light up the #8 output at 1.0, leaving the other 9 all at 0. To the extent this is not the case, this is considered an error, which can be mathematically quantified. With some clever math, it’s possible to work backward from the output, nudging weights to reduce the overall error in a process called backpropagation. Training a neural network is an optimization problem, finding a suitable needle in a vast haystack.
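The sketch below shows one way that “nudging” could look for a deliberately tiny network (4 inputs, 5 hidden neurons, 3 outputs). The sizes, the tanh activation, the cross-entropy error measure, the learning rate, and the single made-up training example are all assumptions chosen for brevity; real MNIST training uses many examples and a larger network.

```python
import math
import random

random.seed(1)
N_IN, N_HID, N_OUT = 4, 5, 3  # tiny sizes, chosen only to keep the sketch short

W1 = [[random.gauss(0, 0.5) for _ in range(N_IN)] for _ in range(N_HID)]
W2 = [[random.gauss(0, 0.5) for _ in range(N_HID)] for _ in range(N_OUT)]

def forward(x):
    """Return the hidden activations and the output probabilities."""
    hidden = [math.tanh(sum(w * xi for w, xi in zip(row, x))) for row in W1]
    scores = [sum(w * h for w, h in zip(row, hidden)) for row in W2]
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return hidden, [e / total for e in exps]

def train_step(x, target, lr=0.1):
    """One backpropagation step; returns the error measured before the update."""
    hidden, probs = forward(x)
    loss = -math.log(probs[target])  # cross-entropy: 0 when probs[target] is 1.0
    # Error signal at the outputs: predicted probability minus the ideal 1.0 or 0.0.
    d_scores = [p - (1.0 if k == target else 0.0) for k, p in enumerate(probs)]
    # Work backward: how much each hidden neuron contributed to the error.
    d_hidden = [sum(d_scores[k] * W2[k][j] for k in range(N_OUT)) for j in range(N_HID)]
    d_pre = [d_hidden[j] * (1.0 - hidden[j] ** 2) for j in range(N_HID)]  # tanh derivative
    # Nudge every weight a small step against its gradient.
    for k in range(N_OUT):
        for j in range(N_HID):
            W2[k][j] -= lr * d_scores[k] * hidden[j]
    for j in range(N_HID):
        for i in range(N_IN):
            W1[j][i] -= lr * d_pre[j] * x[i]
    return loss

x, target = [0.0, 1.0, 1.0, 0.5], 2  # made-up input and its correct class
losses = [train_step(x, target) for _ in range(50)]
```

Each call to `train_step` measures the error, traces it back through the hidden layer, and adjusts the weights slightly, so the values in `losses` shrink as the steps accumulate.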

The pixel inputs and digit outputs all have obvious meaning. But after training, what do the hidden layers represent? This is a good question!

In the MNIST case, for some trained networks, a particular neuron or group of neurons in a hidden layer might represent a concept like “the input contains a vertical stroke” or “the input contains a closed loop”. Without any explicit guidance, the training process constructs an optimized model of its input space. Extracting this from the network yields an embedding.

Text Vectors, and More

What happens if we train a neural network on text?

One of the first projects to popularize word vectors is called word2vec. It trains a neural network with a hidden layer of between 100 and 1000 neurons, producing a word embedding.

In this embedding space, related words are close to each other. But even richer semantic relationships are expressible as yet more vectors. For example, the vector between the words KING and PRINCE is nearly the same as the vector between QUEEN and PRINCESS. Basic vector addition expresses semantic aspects of language that did not need to be explicitly taught.
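The arithmetic can be sketched with toy numbers. The three-dimensional vectors below are invented so that the pattern is easy to see (the axes can be loosely read as royalty, femininity, and youth); real word2vec vectors are learned from text, have far more dimensions, and show the relationship only approximately.

```python
import math

# Made-up 3-D vectors; axes loosely read as (royalty, femininity, youth).
words = {
    "KING":     [0.95, 0.05, 0.10],
    "QUEEN":    [0.95, 0.95, 0.12],
    "PRINCE":   [0.90, 0.08, 0.85],
    "PRINCESS": [0.92, 0.93, 0.88],
    "POTATO":   [0.05, 0.50, 0.40],
}

def subtract(a, b):
    return [x - y for x, y in zip(a, b)]

def add(a, b):
    return [x + y for x, y in zip(a, b)]

# The "vector between" two words is simply their difference.
king_to_prince = subtract(words["PRINCE"], words["KING"])
queen_to_princess = subtract(words["PRINCESS"], words["QUEEN"])
gap = math.dist(king_to_prince, queen_to_princess)  # small means nearly identical

# Apply the KING -> PRINCE offset to QUEEN and look for the nearest other word.
guess = add(words["QUEEN"], king_to_prince)
nearest = min(
    (w for w in words if w != "QUEEN"),
    key=lambda w: math.dist(words[w], guess),
)
```

With these values, `gap` is close to zero and `nearest` comes out as PRINCESS.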

Surprisingly, these techniques work not only on single words, but also on sentences and even whole paragraphs. Different languages will encode in such a way that comparable words are close to each other in the embedding space.

Analogous techniques work on images, audio, video, analytics data, and anything else that a neural network can be trained on. Some “multimodal” embeddings allow, for example, images and text to share the same embedding space. A picture of a dog would end up close to the text “dog”. This feels like magic. Queries can be mapped to the embedding space, and nearby vectors, whether they represent text, data, or anything else, will map to relevant content.
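Once everything lives in one space, a query reduces to finding the nearest stored vectors. The sketch below does this by brute force over a small hypothetical catalog of images and text. The file names, labels, and vector values are invented, and `query_vector` stands in for whatever a real embedding model would produce for the query “dog”.

```python
import math

# Hypothetical catalog of (kind, label, embedding); all vectors are invented.
items = [
    ("image", "photo_of_dog.jpg", [0.90, 0.10, 0.05]),
    ("text", "dog", [0.88, 0.15, 0.02]),
    ("text", "puppy training tips", [0.80, 0.25, 0.10]),
    ("image", "photo_of_sailboat.jpg", [0.05, 0.90, 0.30]),
    ("text", "quarterly tax filing", [0.02, 0.10, 0.95]),
]

def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.hypot(*a) * math.hypot(*b))

def nearest(query_vector, k=3):
    """Return the k items whose embeddings are most similar to the query."""
    ranked = sorted(
        items,
        key=lambda item: cosine_similarity(query_vector, item[2]),
        reverse=True,
    )
    return [(kind, label) for kind, label, _ in ranked[:k]]

query_vector = [0.85, 0.12, 0.04]  # stand-in for the embedding of the query "dog"
results = nearest(query_vector, k=3)
```

The top results mix an image and text, because the search only looks at where vectors sit, not at what kind of item they came from. Scanning every item is fine for five entries but does not scale to large collections, which is one reason dedicated vector indexes exist.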

Some Uses for Vector Search

Because of its shared ancestry with LLMs and neural networks, vector search is a natural fit in generative AI applications, often providing external retrieval for the AI. Some of the main use cases are:

- Adding ‘memory’ to an LLM beyond the limited context window size

- A chatbot that quickly finds the most relevant sections of documents in your corporate network, and hands them off to an LLM for summarization or as answers to Q&A. (This is called Retrieval Augmented Generation.)

Additionally, vector search works great in areas where the search experience needs to work more like how we think, especially for grouping similar items, such as:

- Search across documents in multiple languages

- Finding visually similar images, or images similar to videos.

- Fraud or anomaly detection, for instance if a particular transaction/document/email produces an embedding that is farther away from a cluster of more typical examples.

- Hybrid search applications, using both traditional search engine technology and vector search to combine the strengths of each.
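As one illustration from the list above, the anomaly detection idea can be sketched as a distance check against the center of a cluster. The two-dimensional embeddings and the “three times the farthest typical example” threshold are hypothetical choices made for this example, not a recommended rule.

```python
import math

# Invented 2-D embeddings of "typical" transactions.
typical = [[0.10, 0.20], [0.12, 0.18], [0.09, 0.22], [0.11, 0.19], [0.08, 0.21]]

def centroid(vectors):
    """Average the vectors dimension by dimension."""
    return [sum(column) / len(vectors) for column in zip(*vectors)]

center = centroid(typical)
distances = [math.dist(v, center) for v in typical]

# Hypothetical rule: flag anything more than three times farther from the
# center than the farthest typical example.
threshold = 3 * max(distances)

def is_anomalous(embedding):
    return math.dist(embedding, center) > threshold

suspicious = is_anomalous([0.90, 0.80])  # far from the cluster
ordinary = is_anomalous([0.11, 0.21])    # sits inside the cluster
```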

Meanwhile, traditional keyword-based search still has its strengths, and remains useful for many apps, especially where a user knows exactly what they’re looking for, including structured data, linguistic analysis, legal discovery, and faceted or parametric search.

But this is only a small taste of what’s possible. Vector search is soaring in popularity, and powering more and more applications. How will your next project use vector search?

Continue your reading with part 2 of our Introduction to Semantic Search: Embeddings, Similarity Metrics and Vector Databases.

Learn how Rockset supports vector search here.
