[ad_1]

We’re excited to announce the Basic Availability of serverless compute for notebooks, jobs and Delta Stay Tables (DLT) on AWS and Azure. Databricks clients already get pleasure from quick, easy and dependable serverless compute for Databricks SQL and Databricks Mannequin Serving. The identical functionality is now out there for all ETL workloads on the Information Intelligence Platform, together with Apache Spark and Delta Stay Tables. You write the code and Databricks gives fast workload startup, computerized infrastructure scaling and seamless model upgrades of the Databricks Runtime. Importantly, with serverless compute you might be solely billed for work finished as an alternative of time spent buying and initializing situations from cloud suppliers.

Our present serverless compute providing is optimized for quick startup, scaling, and efficiency. Customers will quickly be capable to categorical different objectives comparable to decrease value. We’re at the moment providing an introductory promotional low cost on serverless compute, out there now till October 31, 2024. You get a 50% worth discount on serverless compute for Workflows and DLT and a 30% worth discount for Notebooks.

“Cluster startup is a precedence for us, and serverless Notebooks and Workflows have made an enormous distinction. Serverless compute for notebooks make it straightforward with only a single click on; we get serverless compute that seamlessly integrates into workflows. Plus, it is safe. This long-awaited function is a game-changer. Thanks, Databricks!”

— Chiranjeevi Katta, Information Engineer, Airbus

Let’s discover the challenges serverless compute helps remedy and the distinctive advantages it presents information groups.

Compute infrastructure is advanced and dear to handle

Configuring and managing compute comparable to Spark clusters has lengthy been a problem for information engineers and information scientists. Time spent on configuring and managing compute is time not spent offering worth to the enterprise.

Selecting the best occasion kind and measurement is time-consuming and requires experimentation to find out the optimum alternative for a given workload. Determining cluster insurance policies, auto-scaling, and Spark configurations additional complicates this job and requires experience. When you get clusters arrange and working, you continue to need to spend time sustaining and tuning their efficiency and updating Databricks Runtime variations so you’ll be able to profit from new capabilities.

Idle time – time not spent processing your workloads, however that you’re nonetheless paying for – is one other pricey final result of managing your personal compute infrastructure. Throughout compute initialization and scale-up, situations must boot up, software program together with Databricks Runtime must be put in, and many others. You pay your cloud supplier for this time. Second, when you over-provision compute by utilizing too many situations or occasion varieties which have an excessive amount of reminiscence, CPU, and many others., compute will probably be under-utilized but you’ll nonetheless pay for the entire provisioned compute capability.

Observing this value and complexity throughout tens of millions of buyer workloads led us to innovate with serverless compute.

Serverless compute is quick, easy and dependable

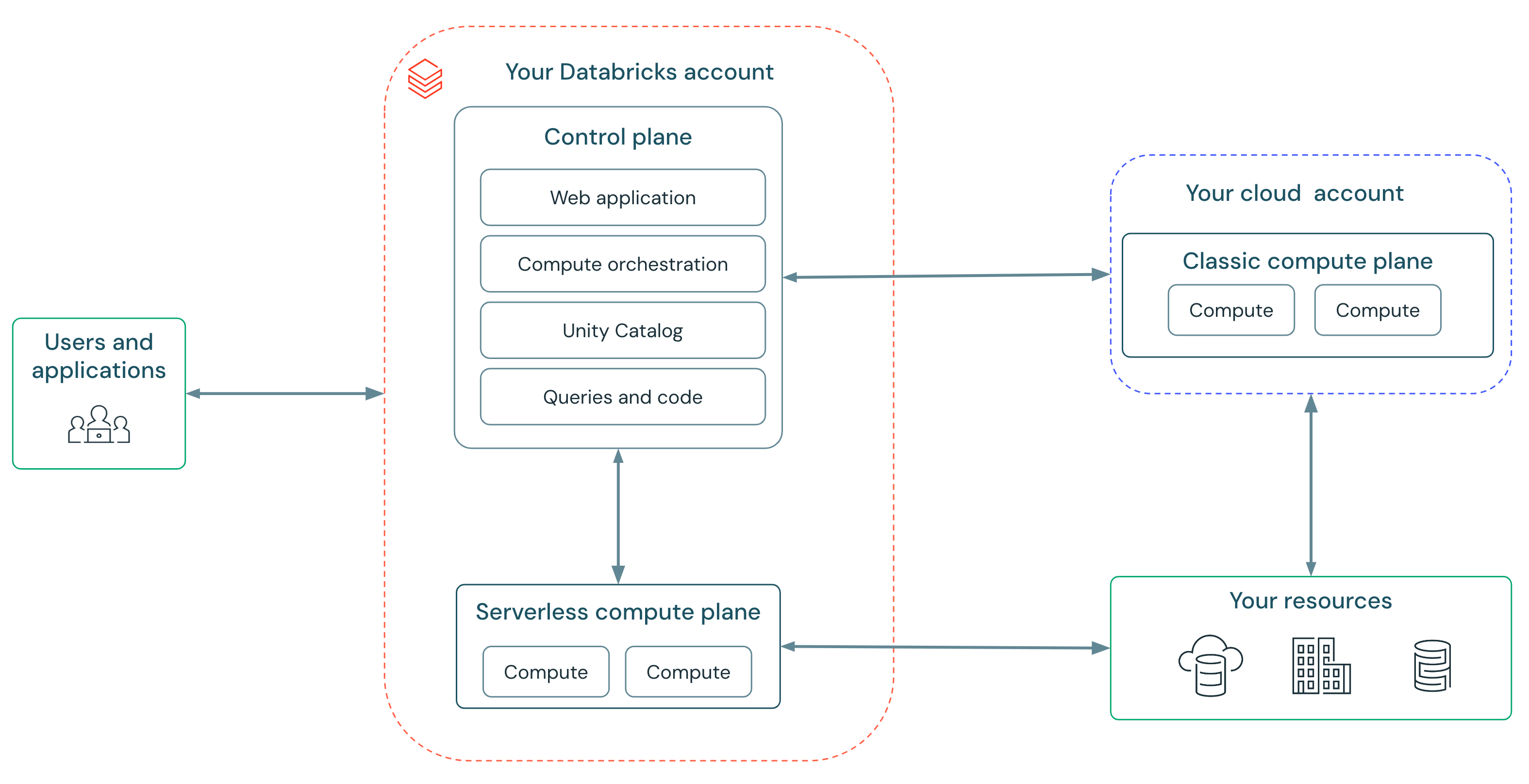

In basic compute, you give Databricks delegated permission by way of advanced cloud insurance policies and roles to handle the lifecycle of situations wanted in your workloads. Serverless compute removes this complexity since Databricks manages an unlimited, safe fleet of compute in your behalf. You possibly can simply begin utilizing Databricks with none setup.

Serverless compute allows us to offer a service that’s quick, easy, and dependable:

- Quick: No extra ready for clusters — compute begins up in seconds, not minutes. Databricks runs “heat swimming pools” of situations in order that compute is prepared when you’re.

- Easy: No extra selecting occasion varieties, cluster scaling parameters, or setting Spark configs. Serverless features a new autoscaler which is smarter and extra attentive to your workload’s wants than the autoscaler in basic compute. Which means each consumer is now capable of run workloads with out hand-holding of infrastructure consultants. Databricks updates workloads mechanically and safely improve to the most recent Spark variations — guaranteeing you all the time get the most recent efficiency and safety advantages.

- Dependable: Databricks’ serverless compute shields clients from cloud outages with computerized occasion kind failover and a “heat pool” of situations buffering from availability shortages.

“It is very straightforward to maneuver workflows from Dev to Prod with out the necessity to decide on employee varieties. [The] vital enchancment in start-up time, mixed with diminished DataOps configuration and upkeep, significantly enhances productiveness and effectivity.”

— Gal Doron, Head of Information, AnyClip

Serverless compute payments for work finished

We’re excited to introduce an elastic billing mannequin for serverless compute. You’re billed solely when compute is assigned to your workloads and never for the time to accumulate and arrange compute situations.

The clever serverless autoscaler ensures that your workspace will all the time have the correct quantity of capability provisioned so we will reply to demand e.g., when a consumer runs a command in a pocket book. It’ll mechanically scale workspace capability up and down in graduated steps to fulfill your wants. To make sure assets are managed properly, we’ll scale back provisioned capability after a couple of minutes when the clever autoscaler predicts it’s now not wanted.

“Serverless compute for DLT was extremely straightforward to arrange and get working, and we’re already seeing main efficiency enhancements from our materialized views. Traditionally going from uncooked information to the silver layer took us about 16 minutes, however after switching to serverless, it is solely about 7 minutes. The time and value financial savings are going to be immense”

— Aaron Jespen, Director IT Operations, Jetlinx

Serverless compute is simple to handle

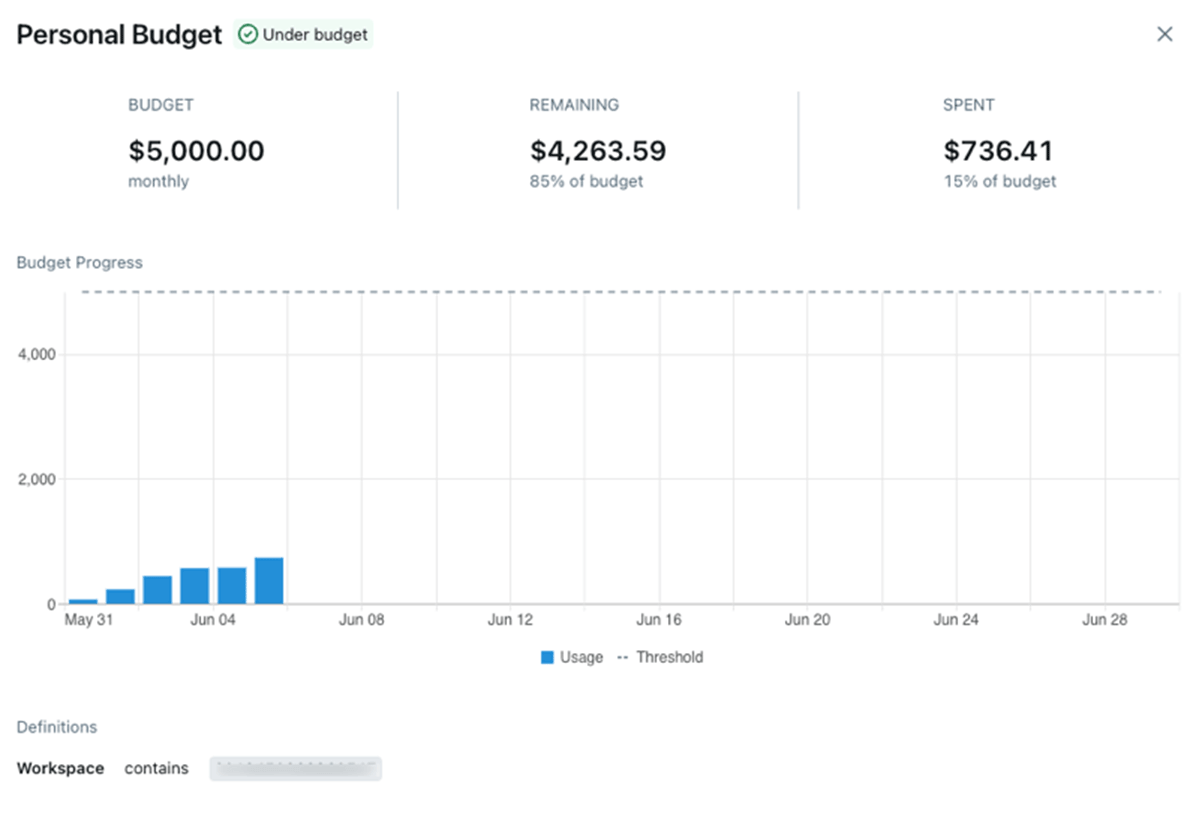

Serverless compute contains instruments for directors to handle prices and budgets. In spite of everything, simplicity mustn’t imply price range overruns and stunning payments!

Information in regards to the utilization and prices of serverless compute is on the market in system tables. We offer pre-built dashboards that allow you to get an summary of prices and drill down into particular workloads.

Directors can use price range alerts (Preview) to group prices and arrange alerts. There’s a pleasant UI for managing budgets.

Serverless compute is designed for contemporary Spark workloads

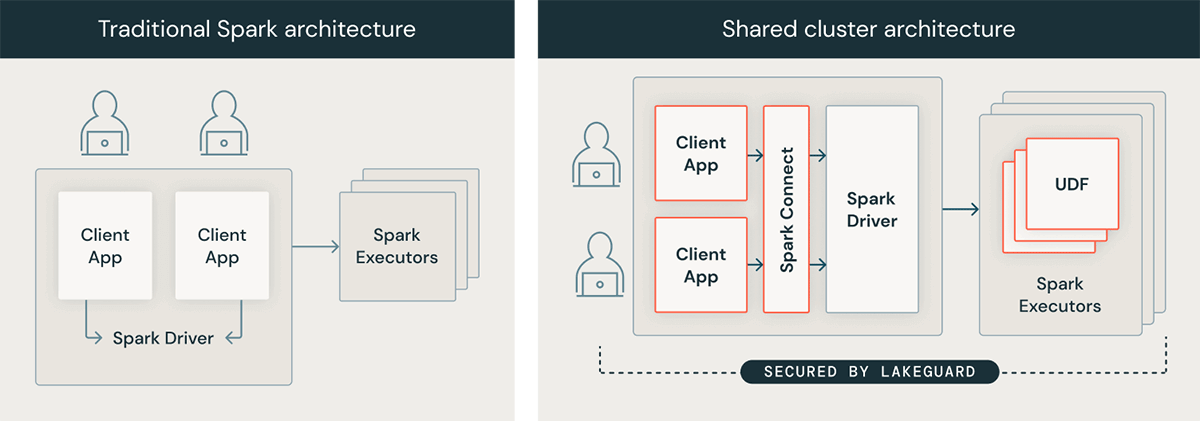

Underneath the hood, serverless compute makes use of Lakeguard to isolate consumer code utilizing sandboxing strategies, an absolute necessity in a serverless surroundings. Because of this, some workloads require code modifications to proceed engaged on serverless. Serverless compute requires Unity Catalog for safe entry to information belongings, therefore workloads that entry information with out utilizing Unity Catalog might have modifications.

The best solution to check in case your workload is prepared for serverless compute is to first run it on a basic cluster utilizing shared entry mode on DBR 14.3+.

Serverless compute is able to use

We’re arduous at work to make serverless compute even higher within the coming months:

- GCP help: We at the moment are starting a personal preview on serverless compute on GCP; keep tuned for our public preview and GA bulletins.

- Personal networking and egress controls: Connect with assets inside your non-public community, and management what your serverless compute assets can entry on the general public Web.

- Enforceable attribution: Make sure that all notebooks, workflows, and DLT pipelines are appropriately tagged so as to assign value to particular value facilities, e.g. for chargebacks.

- Environments: Admins will be capable to set a base surroundings for the workspace with entry to non-public repositories, particular Python and library variations, and surroundings variables.

- Value vs. efficiency: Serverless compute is at the moment optimized for quick startup, scaling, and efficiency. Customers will quickly be capable to categorical different objectives comparable to decrease value.

- Scala help: Customers will be capable to run Scala workloads on serverless compute. To get able to easily transfer to serverless as soon as out there, transfer your Scala workloads to basic compute with Shared Entry mode.

To start out utilizing serverless compute at this time:

[ad_2]