
Customers interact with services in real-time. They log in to websites, like and share posts, purchase goods and even converse, all in real-time. So why is it that whenever you have a problem with a service and reach a customer support representative, they never seem to know who you are or what you’ve been doing recently?

This is likely because they haven’t built a customer 360 profile and, if they have, it certainly isn’t real-time. Here, real-time means within the last couple of minutes, if not seconds. Understanding everything the customer has just done prior to contacting customer support gives the team the best chance to understand and solve the customer’s problem.

This is why a real-time customer 360 profile is more useful than a batch profile generated from a data warehouse: I want to keep the latency low, which isn’t feasible with a traditional data warehouse. In this post, I’ll walk through what a customer 360 profile is and how to build one that updates in real-time.

What Is a Customer 360 Profile?

The purpose of a customer 360 profile is to provide a holistic view of a customer. The goal is to take data from all the disparate services that the customer interacts with in relation to a product or service. This data is brought together, aggregated and then often displayed via a dashboard for use by the customer support team.

When a customer calls the support team or uses online chat, the team can quickly understand who the customer is and what they’ve done recently. This removes the need for tedious, script-read questions, meaning the team can get straight to solving the problem.

With this data all in one place, it can then be used downstream for predictive analytics and real-time segmentation. This can be used to provide more timely and relevant marketing and front-end personalisation, enhancing the customer experience.

Use Case: Fashion Retail Store

To help demonstrate the power of a customer 360, I’ll be using a national fashion retail brand as an example. This brand has multiple stores across the country and a website allowing customers to order items for delivery or store pick-up.

The brand has a customer support centre that deals with customer enquiries about orders, deliveries, returns and fraud. What they want is a customer 360 dashboard so that when a customer contacts them with an issue, they can see the latest customer details and activity in real-time.

The data sources available include:

- users (MongoDB): Core customer data such as name, age, gender, address.

- online_orders (MongoDB): Online purchase data including product details and delivery addresses.

- instore_orders (MongoDB): In-store purchase data, again including product details and store location.

- marketing_emails (Kafka): Marketing data including items sent and any interactions.

- weblogs (Kafka): Website analytics such as logins, browsing, purchases and any errors.

In the rest of the post, using these data sources, I’ll show you how to consolidate all of this data into one place in real-time and surface it to a dashboard.

Platform Architecture

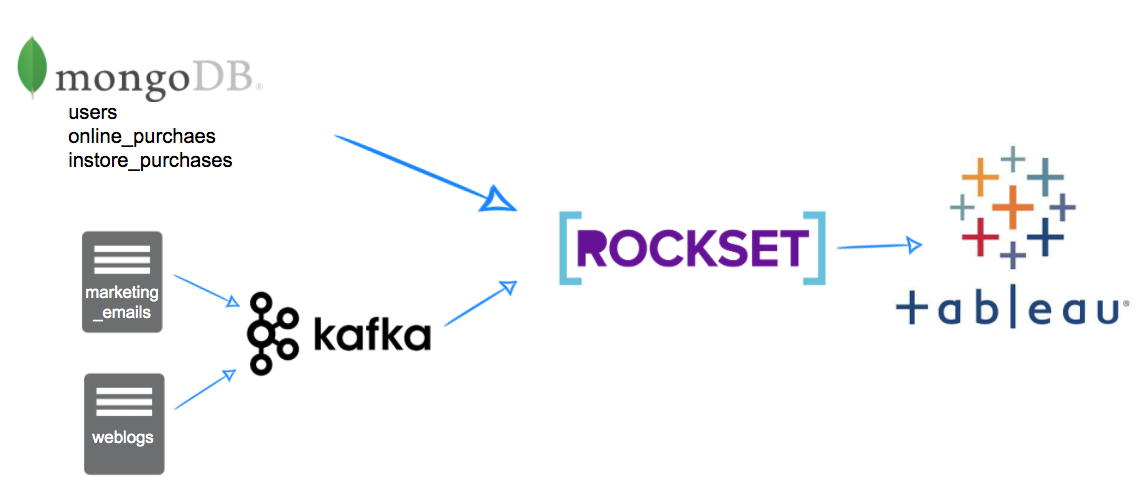

The first step in building a customer 360 is consolidating the different data sources into one place. Due to the real-time requirements of our fashion brand, we need a way to keep the data sources in sync in real-time and also allow rapid retrieval and analytics on this data so it can be presented back in a dashboard.

For this, we’ll use Rockset. The customer and purchase data is currently stored in a MongoDB database, which can simply be replicated into Rockset using a built-in connector. To get the weblogs and marketing data I’ll use Kafka, as Rockset can then consume these messages as they’re generated.

What we’re left with is the platform architecture shown in Fig 1. With data flowing through to Rockset in real-time, we can then display the customer 360 dashboards using Tableau. This approach allows us to see customer interactions from the last few minutes or even seconds on our dashboard. A traditional data warehouse approach would significantly increase this latency because of the batch data pipelines ingesting the data. Rockset can maintain data latency in the 1-2 second range when connecting to an OLTP database or to data streams.

Fig 1. Platform architecture diagram

I’ve written posts on integrating Kafka topics into Rockset and on utilising the MongoDB connector that go into more detail on how to set these integrations up. In this post, I’m going to concentrate more on the analytical queries and dashboard building, and assume this data is already being synced into Rockset.

Building the Dashboard with Tableau

The first thing we need to do is get Tableau talking to Rockset, following the Rockset documentation. This is fairly straightforward and only requires downloading a JDBC connector and putting it in the correct folder, then generating an API key within your Rockset console that will be used to connect in Tableau.

Once done, we can work on building the SQL statements that provide all the data we need for our dashboard. I recommend building these in the Rockset console and moving them over to Tableau later on. This will give us greater control over the statements that are submitted to Rockset, for better performance.

First, let’s break down what we want our dashboard to show:

- Basic customer details including first name, last name

- Purchase stats including number of online and in-store purchases, most popular items bought and amount spent all time

- Recent activity stats including last purchase dates, last login, last website visit and last email interaction

- Details about most recent errors whilst browsing online

Now we can work on the SQL for bringing all of these properties together.

1. Basic Customer Details

This one is easy, just a simple SELECT from the users collection (replicated from MongoDB).

    -- Core profile fields; age is derived from the date of birth
    SELECT users.id AS customer_id,
           users.first_name,
           users.last_name,
           users.gender,
           DATE_DIFF('year', CAST(dob AS date), CURRENT_DATE()) AS age
    FROM fashion.users

2. Purchase Statistics

First, we want to get all the online purchase statistics from online_orders. Again, this data has been replicated by Rockset’s MongoDB integration. The query simply counts the number of orders and the number of items, then divides one by the other to get an idea of items per order.

    -- One row per customer and channel; UNNEST yields one row per purchased item
    SELECT *
    FROM (SELECT 'Online' AS "type",
                 online.user_id AS customer_id,
                 COUNT(DISTINCT online._id) AS number_of_online_orders,
                 COUNT(*) AS number_of_items_purchased,
                 COUNT(*) / COUNT(DISTINCT online._id) AS items_per_order
          FROM fashion.online_orders online,
               UNNEST(online."items")
          GROUP BY 1,
                   2) online
    UNION ALL
    (SELECT 'Instore' AS "type",
            instore.user_id AS customer_id,
            COUNT(DISTINCT instore._id) AS number_of_instore_orders,
            COUNT(*) AS number_of_items_purchased,
            COUNT(*) / COUNT(DISTINCT instore._id) AS items_per_order
     FROM fashion.instore_orders instore,
          UNNEST(instore."items")
     GROUP BY 1,
              2)

As shown, we replicate the same aggregation for instore_orders and union the two statements together.
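To make the unnest-and-count logic explicit, here is a minimal Python sketch of the same aggregation over in-memory documents. The sample orders, the `purchase_stats` helper and the output field names are hypothetical and only for illustration; they are not part of the Rockset setup described in this post.

```python
from collections import defaultdict

# Hypothetical sample documents shaped like the online_orders collection:
# each order has an _id, a user_id and a nested "items" array.
online_orders = [
    {"_id": "o1", "user_id": "u1",
     "items": [{"product_name": "Jeans"}, {"product_name": "Belt"}]},
    {"_id": "o2", "user_id": "u1", "items": [{"product_name": "Jeans"}]},
    {"_id": "o3", "user_id": "u2", "items": [{"product_name": "Scarf"}]},
]


def purchase_stats(orders, channel):
    """Return {customer_id: stats} for one sales channel."""
    order_ids = defaultdict(set)    # distinct order ids per customer
    item_counts = defaultdict(int)  # one count per unnested item row
    for order in orders:
        for _item in order["items"]:  # plays the role of UNNEST(items)
            order_ids[order["user_id"]].add(order["_id"])
            item_counts[order["user_id"]] += 1
    return {
        user_id: {
            "type": channel,
            "number_of_orders": len(ids),
            "number_of_items_purchased": item_counts[user_id],
            "items_per_order": item_counts[user_id] / len(ids),
        }
        for user_id, ids in order_ids.items()
    }


stats = purchase_stats(online_orders, "Online")
print(stats["u1"])
```

Note that Python’s `/` always produces a float, whereas the result of dividing two counts in SQL depends on the engine’s integer division rules, so it is worth checking what items_per_order returns in your own query.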

3. Most Popular Items

We now want to understand the most popular items purchased by each user. This one simply calculates a count of products by user. To do this we need to unnest the items, which gives us one row per order item, ready for counting.

    -- Count purchases per customer and product; UPPER normalises product name casing
    SELECT online_orders.user_id AS "Customer ID",
           UPPER(basket.product_name) AS "Product Name",
           COUNT(*) AS "Purchases"
    FROM fashion.online_orders,
         UNNEST(online_orders."items") AS basket
    GROUP BY 1,
             2
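The query returns every product a customer has bought along with its count; ranking is left to the dashboard. As a rough sketch of how the counts could be turned into a top-N list per customer, the following Python uses hypothetical sample orders and a hypothetical `popular_items` helper.

```python
from collections import Counter

# Hypothetical sample orders; note the mixed casing of "Jeans"/"jeans",
# which is why the product name is upper-cased before counting.
online_orders = [
    {"_id": "o1", "user_id": "u1",
     "items": [{"product_name": "Jeans"}, {"product_name": "Belt"}]},
    {"_id": "o2", "user_id": "u1", "items": [{"product_name": "jeans"}]},
    {"_id": "o3", "user_id": "u2", "items": [{"product_name": "Scarf"}]},
]


def popular_items(orders, top_n=3):
    """Return {customer_id: [(PRODUCT NAME, purchases), ...]}, most bought first."""
    counts = Counter()
    for order in orders:
        for item in order["items"]:  # one row per order item
            counts[(order["user_id"], item["product_name"].upper())] += 1

    per_customer = {}
    for (user_id, product), purchases in counts.items():
        per_customer.setdefault(user_id, []).append((product, purchases))

    # Sort by purchases descending, then by name so ties are deterministic.
    return {
        user_id: sorted(rows, key=lambda row: (-row[1], row[0]))[:top_n]
        for user_id, rows in per_customer.items()
    }


top = popular_items(online_orders)
print(top["u1"])
```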

4. Recent Activity

For this, we’ll use all tables and get the last time the user did anything on the platform. This encompasses the users, instore_orders and online_orders data sources from MongoDB alongside the weblogs and marketing_emails data streamed in from Kafka. It is a slightly longer query, as we’re getting the max date for each event type and unioning them together, but once the data is in Rockset it’s trivial to combine these data sets.

    -- Latest timestamp per customer for each event type, stacked with UNION.
    -- Column names are taken from the first branch.
    SELECT event,
           user_id AS customer_id,
           "date"
    FROM (SELECT 'Instore Order' AS event,
                 user_id,
                 CAST(MAX("date") AS datetime) AS "date"
          FROM fashion.instore_orders
          GROUP BY 1,
                   2) x
    UNION
    (SELECT 'Online Order' AS event,
            user_id,
            CAST(MAX("date") AS datetime) AS last_online_purchase_date
     FROM fashion.online_orders
     GROUP BY 1,
              2)
    UNION
    (SELECT 'Email Sent' AS event,
            user_id,
            CAST(MAX("date") AS datetime) AS last_email_date
     FROM fashion.marketing_emails
     GROUP BY 1,
              2)
    UNION
    (SELECT 'Email Opened' AS event,
            user_id,
            CAST(MAX(CASE WHEN email_opened THEN "date" ELSE NULL END) AS datetime) AS last_email_opened_date
     FROM fashion.marketing_emails
     GROUP BY 1,
              2)
    UNION
    (SELECT 'Email Clicked' AS event,
            user_id,
            CAST(MAX(CASE WHEN email_clicked THEN "date" ELSE NULL END) AS datetime) AS last_email_clicked_date
     FROM fashion.marketing_emails
     GROUP BY 1,
              2)
    UNION
    (SELECT 'Website Visit' AS event,
            user_id,
            CAST(MAX("date") AS datetime) AS last_website_visit_date
     FROM fashion.weblogs
     GROUP BY 1,
              2)
    UNION
    (SELECT 'Website Login' AS event,
            user_id,
            CAST(MAX(CASE WHEN weblogs.page = 'login_success.html' THEN "date" ELSE NULL END) AS datetime) AS last_website_login_date
     FROM fashion.weblogs
     GROUP BY 1,
              2)
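The pattern in this query is "emit one labelled event per row, then keep the maximum date per event type and customer". A minimal Python sketch of that pattern for the weblogs source is shown below; the sample rows and the `weblog_events` and `latest_activity` helpers are hypothetical.

```python
from datetime import datetime

# Hypothetical weblog rows; field names mirror the ones used in the SQL.
weblogs = [
    {"user_id": "u1", "page": "home.html", "date": datetime(2020, 5, 1, 9, 0)},
    {"user_id": "u1", "page": "login_success.html", "date": datetime(2020, 5, 1, 9, 1)},
    {"user_id": "u1", "page": "basket.html", "date": datetime(2020, 5, 1, 9, 5)},
    {"user_id": "u2", "page": "home.html", "date": datetime(2020, 5, 2, 14, 30)},
]


def weblog_events(rows):
    """Yield (event, user_id, date) tuples: every row is a visit, some are logins."""
    for row in rows:
        yield ("Website Visit", row["user_id"], row["date"])
        if row["page"] == "login_success.html":  # same condition as the CASE WHEN
            yield ("Website Login", row["user_id"], row["date"])


def latest_activity(events):
    """Keep only the most recent date per (event, user_id) pair."""
    latest = {}
    for event, user_id, when in events:
        key = (event, user_id)
        if key not in latest or when > latest[key]:
            latest[key] = when
    return latest


timeline = latest_activity(weblog_events(weblogs))
for (event, user_id), when in sorted(timeline.items()):
    print(user_id, event, when.isoformat())
```

One difference is worth knowing: the SQL version with CASE WHEN still returns a row with a NULL date for a customer who has weblog entries but has never logged in, whereas this sketch simply omits that pair.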

5. Recent Errors

Another simple query to get the page the user was on, the error message and the last time it occurred, using the weblogs dataset from Kafka.

    -- Latest occurrence of each error per customer and page
    SELECT users.id AS "Customer ID",
           weblogs.error_message AS "Error Message",
           weblogs.page AS "Page Name",
           MAX(weblogs.date) AS "Date"
    FROM fashion.users
    LEFT JOIN fashion.weblogs ON weblogs.user_id = users.id
    WHERE weblogs.error_message IS NOT NULL
    GROUP BY 1,
             2,
             3
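One detail of this query is that the WHERE clause filters on a column from the right-hand side of the LEFT JOIN, so customers with no errors drop out of the result entirely, as they would with an inner join. The sketch below demonstrates this using Python’s built-in sqlite3 module as a stand-in engine; the tables and rows are hypothetical and SQLite’s dialect differs from Rockset’s, but the join semantics shown are standard SQL.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE users (id TEXT);
CREATE TABLE weblogs (user_id TEXT, page TEXT, error_message TEXT, date TEXT);

INSERT INTO users VALUES ('u1'), ('u2');
INSERT INTO weblogs VALUES
  ('u1', 'checkout.html', 'Payment declined', '2020-05-01 09:05:00'),
  ('u1', 'checkout.html', 'Payment declined', '2020-05-01 09:07:00'),
  ('u2', 'home.html', NULL, '2020-05-02 14:30:00');
""")

# Same shape as the recent-errors query: the filter on weblogs.error_message
# removes the NULL-extended rows that the LEFT JOIN would otherwise keep.
rows = conn.execute("""
SELECT users.id,
       weblogs.error_message,
       weblogs.page,
       MAX(weblogs.date)
FROM users
LEFT JOIN weblogs ON weblogs.user_id = users.id
WHERE weblogs.error_message IS NOT NULL
GROUP BY users.id, weblogs.error_message, weblogs.page
""").fetchall()

print(rows)
conn.close()
```

Only u1 appears in the output, with the later of the two error timestamps. For a dashboard filtered to a single customer this is fine: a customer with no errors simply shows an empty panel.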

Creating a Dashboard

Now we want to pull all of these SQL queries into a Tableau workbook. I find it best to create a data source and worksheet per section and then create a dashboard to tie them all together.

In Tableau, I built 6 worksheets, one for each of the SQL statements above. The worksheets each display the data simply and intuitively. The idea is that these 6 worksheets can then be combined into a dashboard that allows the customer service member to search for a customer and display a 360 view.

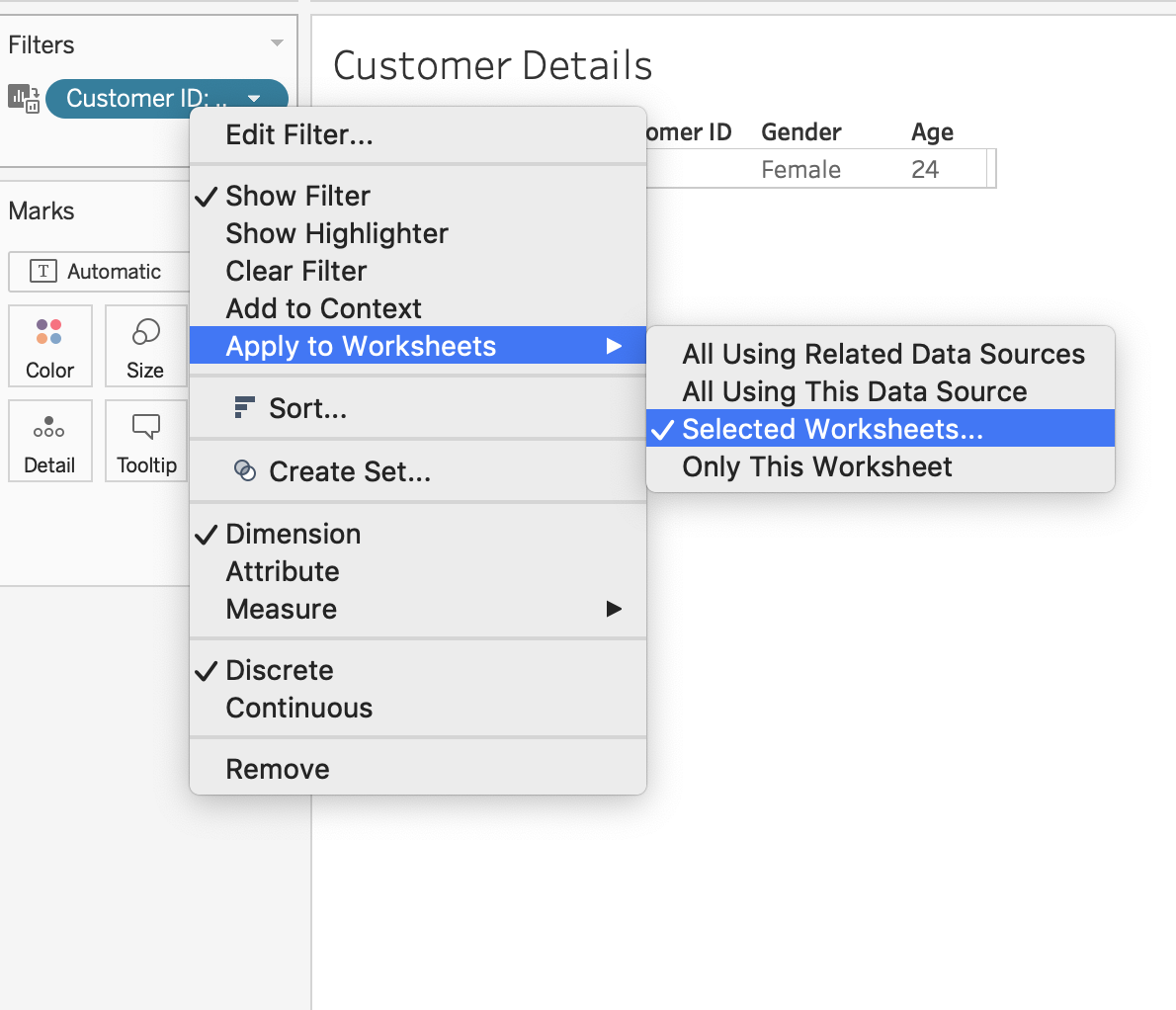

To do this in Tableau, we need the filtering column to have the same name across all the sheets; I called mine “Customer ID”. You can then right-click on the filter and apply it to selected worksheets, as shown in Fig 2.

Fig 2. Applying a filter to multiple worksheets in Tableau

This will bring up a list of all worksheets that Tableau can apply this same filter to. This will be helpful when building our dashboard, as we only need to include one search filter that will then be applied to all the worksheets. You must name the field the same across all your worksheets for this to work.

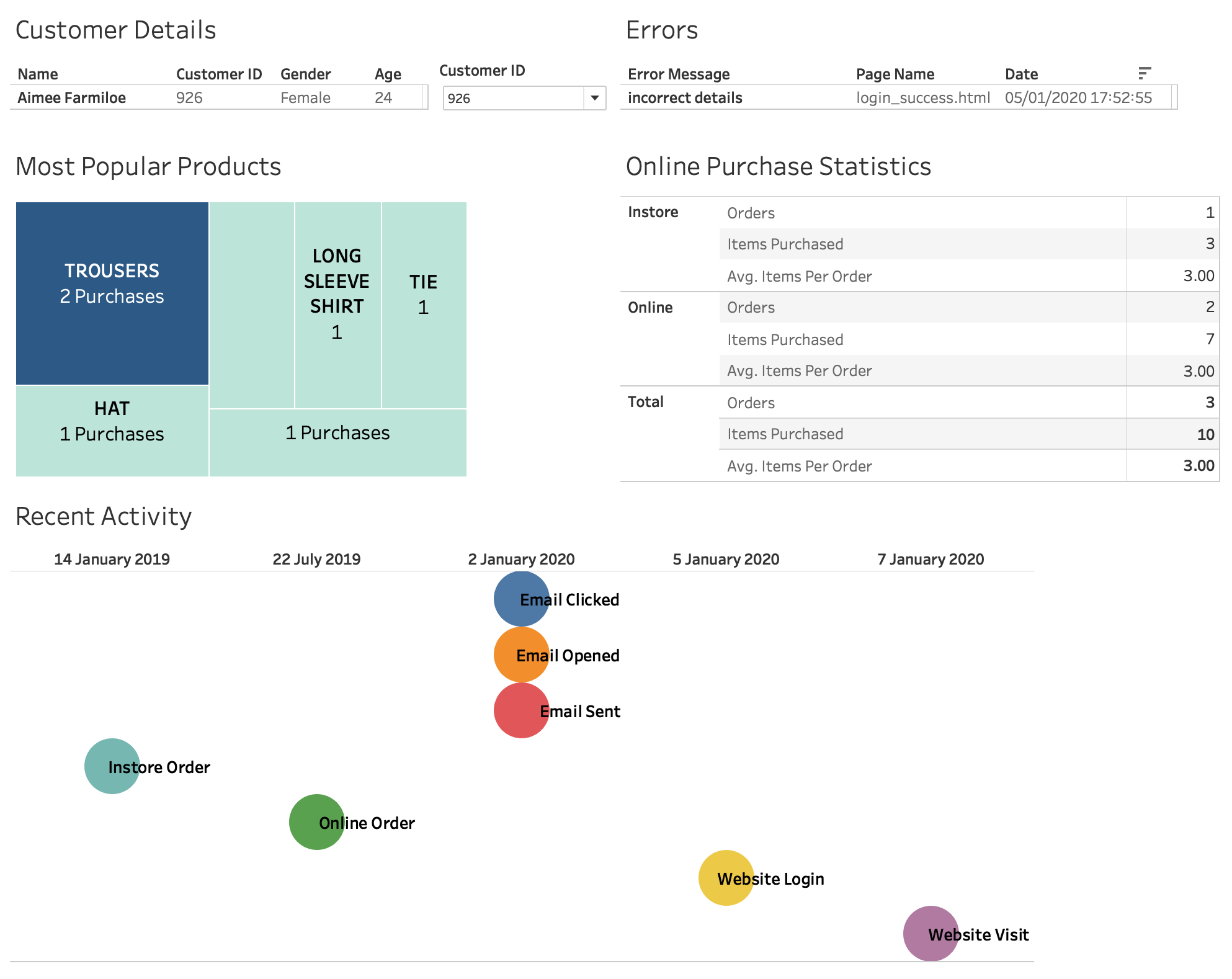

Fig 3 shows all of the worksheets put together in a simple dashboard. All of the data within this dashboard is backed by Rockset and therefore reaps all of its benefits. This is why it’s important to use the SQL statements directly in Tableau rather than creating internal Tableau data sources. In doing this, we ask Rockset to perform the complex analytics, meaning the data can be crunched efficiently. It also means that any new data that is synced into Rockset is made available in real-time.

Fig 3. Tableau customer 360 dashboard

If a customer contacts support with a query, their latest activity is immediately available in this dashboard, including their latest error message, purchase history and email activity. This allows the customer service member to understand the customer at a glance and get straight to resolving their query, instead of asking questions that they should already know the answer to.

The dashboard gives an overview of the customer’s details in the top left and any recent errors in the top right. In between is the filter/search capability to select a customer based on who is calling. The next section gives an at-a-glance view of the most popular products purchased by the customer and their lifetime purchase statistics. The final section shows an activity timeline displaying the most recent interactions with the service across email, in-store and online channels.

Further Potential

Building a customer 360 profile doesn’t have to stop at dashboards. Now that you have data flowing into a single analytics platform, this same data can be used to improve the customer front-end experience, to provide cohesive messaging across web, mobile and marketing, and for predictive modelling.

Rockset’s built-in API means this data can be made accessible to the front end. The website can then use these profiles to personalise the front-end content. For example, a customer’s favourite products can be used to display those products front and centre on the website. This requires less effort from the customer, as it’s now likely that what they came to your website for is right there on the first page.

The marketing system can use this data to ensure that emails are personalised in the same way. That means the customer visits the website and sees recommended products that they also see in an email a few days later. This not only personalises their experience but ensures it’s cohesive across all channels.

Finally, this data can be extremely powerful when used for predictive analytics. Understanding behaviour for all users across all areas of a business means patterns can be found and used to understand likely future behaviour. This means you are no longer reacting to actions, like showing previously purchased items on the home page, and you can instead show expected future purchases.

Lewis Gavin has been a data engineer for five years and has also been blogging about skills within the Data community for four years on a personal blog and Medium. During his computer science degree, he worked for the Airbus Helicopter team in Munich enhancing simulator software for military helicopters. He then went on to work for Capgemini, where he helped the UK government move into the world of Big Data. He’s currently using this experience to help transform the data landscape at easyfundraising.org.uk, an online charity cashback site, where he’s helping to shape their data warehousing and reporting capability from the ground up.
