
In Part One, we discussed how to first identify slow queries on MongoDB using the database profiler, and then investigated what strategies the database took during the execution of those queries to understand why our queries were taking the time and resources that they were taking. In this blog post, we’ll discuss several other targeted strategies that we can use to speed up those problematic queries when the right circumstances are present.

Avoiding Collection Scans using User-Defined Read Indexes

When operating at scale, most primary production databases cannot afford any collection scans at all unless the QPS is very low or the collection size itself is small. If you found during your investigation in Part One that your queries are being slowed down by unnecessary collection scans, you may want to consider using user-defined indexes in MongoDB.

Just like relational databases, NoSQL databases like MongoDB also utilize indexes to speed up queries. Indexes store a small portion of each collection’s data set in separate traversable data structures. These indexes then enable your queries to run faster by minimizing the number of disk accesses required with each request.
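To make the idea concrete, here is a toy sketch in plain JavaScript. It is not how MongoDB implements indexes (MongoDB uses B-tree based structures); the `Map` below is only a stand-in, and the sample documents and function names are hypothetical. It simply contrasts how many documents are examined with and without a lookup structure.

```javascript
// Toy "collection": an array of documents (hypothetical sample data).
const rocks = [
  { _id: 1, color: "black", type: "basalt" },
  { _id: 2, color: "white", type: "marble" },
  { _id: 3, color: "black", type: "obsidian" },
  { _id: 4, color: "red", type: "jasper" },
];

// Collection scan: every document is examined, matching or not.
function collectionScan(docs, color) {
  let docsExamined = 0;
  const results = [];
  for (const doc of docs) {
    docsExamined++;
    if (doc.color === color) results.push(doc);
  }
  return { results, docsExamined };
}

// Build a lookup structure mapping each field value to document positions.
function buildIndex(docs, field) {
  const index = new Map();
  docs.forEach((doc, position) => {
    const key = doc[field];
    if (!index.has(key)) index.set(key, []);
    index.get(key).push(position);
  });
  return index;
}

// Index scan: only the documents the index points at are examined.
function indexScan(docs, index, value) {
  const positions = index.get(value) || [];
  const results = positions.map((position) => docs[position]);
  return { results, docsExamined: results.length };
}

const colorIndex = buildIndex(rocks, "color");
console.log(collectionScan(rocks, "black").docsExamined); // 4
console.log(indexScan(rocks, colorIndex, "black").docsExamined); // 2
```

Both functions return the same two documents; only the amount of work differs, and that gap grows with the size of the collection.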

When you know ahead of time which queries you’re looking to speed up, you can create indexes from within MongoDB on the fields which you need faster access to. With just a few simple commands, MongoDB will automatically sort these fields into separate entries to optimize your query lookups.

To create an index in MongoDB, simply use the following syntax:

db.collection.createIndex( <key and index type specification>, <options> )

For instance, the following command would create a descending single field index on the field color:

db.collection.createIndex( { color: -1 } )

MongoDB offers several index types optimized for various query lookups and data types:

- Single Field Indexes are used to index a single field in a document

- Compound Indexes are used to index multiple fields in a document

- Multikey Indexes are used to index the content stored in arrays

- Geospatial Indexes are used to efficiently index geospatial coordinate data

- Text Indexes are used to efficiently index string content in a collection

- Hashed Indexes are used to index the hash values of specific fields to support hash-based sharding
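As a rough illustration of the list above, the key specifications for each index type might look like the following. All field names (`tags`, `location`, `description`, `userId`) are hypothetical, and the stand-in collection object exists only so the sketch runs without a database; in mongosh you would pass a real collection such as `db.rocks` instead. Note that there is no special syntax for a multikey index: MongoDB treats an index as multikey when the indexed field holds array values.

```javascript
// Key specifications for several index types (field names are assumptions).
const indexSpecs = [
  { kind: "single field", keys: { color: 1 } },
  { kind: "compound", keys: { color: 1, type: 1 } },
  { kind: "multikey", keys: { tags: 1 } }, // multikey if "tags" holds arrays
  { kind: "geospatial", keys: { location: "2dsphere" } },
  { kind: "text", keys: { description: "text" } },
  { kind: "hashed", keys: { userId: "hashed" } },
];

// Works with any object exposing createIndex(keys), e.g. db.rocks in mongosh.
function createAllIndexes(collection) {
  return indexSpecs.map((spec) => collection.createIndex(spec.keys));
}

// Stand-in collection that only records the calls it receives.
const recorded = [];
const fakeCollection = {
  createIndex(keys) {
    recorded.push(keys);
    return Object.keys(keys).join("_");
  },
};

createAllIndexes(fakeCollection);
console.log(recorded.length); // 6
```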

While indexes can speed up certain queries tremendously, they also come with tradeoffs. Indexes use memory, and adding too many will cause the working set to no longer fit inside memory, which will actually tank the performance of the cluster. Thus, you always want to ensure you’re indexing just enough, but not too much.

For more details, be sure to check out our other blog post on Indexing on MongoDB using Rockset!

Avoiding Document Scans Entirely using Covered Queries

If you found during your investigation that your queries are scanning an unusually high number of documents, you may want to look into whether or not a query can be satisfied without scanning any documents at all using index-only scan(s). When this occurs, we say that the index has “covered” this query since we no longer need to do any more work to complete this query. Such queries are known as covered queries, and are possible if and only if both of these requirements are satisfied:

- Every field the query needs to access is part of an index

- Every field returned by this query is in the same index

Furthermore, MongoDB requires all of the following for an index to fully cover a query:

- No field in the covering index is an array

- No field in the covering index is a sub-document

- The _id field is not returned by the query, unless it is itself part of the index

For instance, let’s say we have a collection rocks which has a compound index on two fields, color and type:

db.rocks.createIndex({ color: 1, type: 1 })

Then, if we try to find the types of rocks for a particular color, that query would be “covered” by the above index:

db.rocks.find({ color: "black" }, { type: 1, _id: 0 })

Let’s take a deeper look at what the database is doing using the EXPLAIN method we learned about during the investigation phase.

Using a basic query without a covering index with a single document, the following executionStats are returned:

"executionStats" : {

"executionSuccess" : true,

"nReturned" : 1,

"executionTimeMillis" : 0,

"totalKeysExamined" : 1,

"totalDocsExamined" : 1

}

Using our covered query, however, the following executionStats are returned:

"executionStats" : {

"executionSuccess" : true,

"nReturned" : 1,

"executionTimeMillis" : 0,

"totalKeysExamined" : 1,

"totalDocsExamined" : 0

}

Note that the number of documents scanned changed to 0 in the covered query. This performance improvement was made possible by the index we created earlier, which contained all the data we needed (thereby “covering” the query). Thus, MongoDB didn’t need to scan any collection documents at all. Tweaking your indexes and queries to allow for such cases can significantly improve query performance.
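If you want to check this programmatically, one possible heuristic is to inspect the executionStats of an explain result, which in mongosh can be obtained with something like `db.rocks.find(...).explain("executionStats")`. The helper name `looksCovered` below is hypothetical, and the rule it applies (index keys were examined but no documents were) is a simplification of what the query planner reports.

```javascript
// Heuristic: a query looks covered when it was answered from index keys
// alone, i.e. keys were examined but no documents were fetched.
function looksCovered(executionStats) {
  return (
    executionStats.totalKeysExamined > 0 &&
    executionStats.totalDocsExamined === 0
  );
}

// The two executionStats outputs shown in this section.
const withoutCoveringIndex = {
  executionSuccess: true,
  nReturned: 1,
  executionTimeMillis: 0,
  totalKeysExamined: 1,
  totalDocsExamined: 1,
};
const withCoveringIndex = {
  executionSuccess: true,
  nReturned: 1,
  executionTimeMillis: 0,
  totalKeysExamined: 1,
  totalDocsExamined: 0,
};

console.log(looksCovered(withoutCoveringIndex)); // false
console.log(looksCovered(withCoveringIndex)); // true
```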

Avoiding Application-Level JOINs using Denormalization

NoSQL databases like MongoDB are often structured without a schema to make writes convenient, and it’s a key part of what makes them so unique and popular. However, the lack of a schema can dramatically slow down reads, causing problems with query performance as your application scales.

For instance, one of the most well-known drawbacks of using a NoSQL database like MongoDB is the lack of support for database-level JOINs. If any of your queries are joining data across multiple collections in MongoDB, you’re likely doing it at the application level. This, however, is tremendously costly since you have to transfer all the data from the collections involved into your application before you can perform the operation.
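For reference, an application-level JOIN typically looks something like the sketch below. The sample documents and the function name are hypothetical; in a real application the two arrays would be the results of two separate queries, which is exactly the data transfer cost described above.

```javascript
// Stand-ins for the results of two separate queries (hypothetical data).
const employees = [
  { name: "John Smith", company: "Google" },
  { name: "Mary Adams", company: "Microsoft" },
];
const companies = [
  { name: "Google", stock: "GOOGL", location: "Mountain View, CA" },
  { name: "Microsoft", stock: "MSFT", location: "Redmond, WA" },
];

// Hash join in application memory: index companies by name, then attach
// the matching company (or null) to each employee.
function joinEmployeesWithCompanies(employeeDocs, companyDocs) {
  const companiesByName = new Map(companyDocs.map((c) => [c.name, c]));
  return employeeDocs.map((e) => ({
    ...e,
    employer: companiesByName.get(e.company) || null,
  }));
}

const joined = joinEmployeesWithCompanies(employees, companies);
console.log(joined[0].employer.stock); // GOOGL
```

Every document from both collections has to reach the application before the join can run, even if only a handful of joined rows are needed.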

Increasing Read Performance by Denormalizing Your Data

When you are storing relational data in multiple collections in MongoDB which requires multiple queries to retrieve the data you need, you can denormalize it to increase read performance. Denormalization is the process by which we trade write performance for read performance by embedding data from one collection into another, either by making a copy of certain fields or by moving it entirely.

For instance, let’s say you have the following two collections for employees and companies:

{

"email" : "[email protected]",

"name" : "John Smith",

"company" : "Google"

},

{

"email" : "[email protected]",

"name" : "Mary Adams",

"company" : "Microsoft"

},

...

{

"name" : "Google",

"stock" : "GOOGL",

"location" : "Mountain View, CA"

},

{

"name" : "Microsoft",

"stock" : "MSFT",

"location" : "Redmond, WA"

},

...

Instead of trying to query the data from both collections using an application-level JOIN, we can embed the companies collection inside the employees collection:

{

"email" : "[email protected]",

"name" : "John Smith",

"company" : {

"name" : "Google",

"stock" : "GOOGL",

"location" : "Mountain View, CA"

}

},

{

"email" : "[email protected]",

"name" : "Mary Adams",

"company" : {

"name" : "Microsoft",

"stock" : "MSFT",

"location" : "Redmond, WA"

}

},

...

Now that all of our data is already stored in one place, we can simply query the employees collection a single time to retrieve everything we need, avoiding the need to do any JOINs entirely.

As we noted earlier, while denormalizing your data does increase read performance, it doesn’t come without its drawbacks either. An immediate drawback would be that we’re potentially increasing storage costs significantly by having to keep redundant copies of the data. In our previous example, every single employee would now have the full company data embedded inside its document, so the company data is stored once per employee rather than once per company. Additionally, our write performance would be severely affected: for instance, if we wanted to change the location field of a company that moved its headquarters, we’d now have to go through every single document in our employees collection to update its company’s location.
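The write amplification can be sketched in plain JavaScript as follows. The sample data (including the third employee and the new location) and both function names are hypothetical. Against a real denormalized collection, the equivalent write would be something along the lines of `db.employees.updateMany({ "company.name": "Google" }, { $set: { "company.location": "Sunnyvale, CA" } })`, which touches every matching employee document.

```javascript
// Hypothetical normalized data.
const employees = [
  { name: "John Smith", company: "Google" },
  { name: "Alex Chen", company: "Google" },
  { name: "Mary Adams", company: "Microsoft" },
];
const companies = [
  { name: "Google", stock: "GOOGL", location: "Mountain View, CA" },
  { name: "Microsoft", stock: "MSFT", location: "Redmond, WA" },
];

// Denormalize: embed a copy of the company document in each employee.
function embedCompanies(employeeDocs, companyDocs) {
  const byName = new Map(companyDocs.map((c) => [c.name, c]));
  return employeeDocs.map((e) => ({
    ...e,
    company: { ...byName.get(e.company) },
  }));
}

// A single logical change now has to be applied to every embedded copy.
function updateCompanyLocation(denormalizedDocs, companyName, newLocation) {
  let modifiedCount = 0;
  for (const doc of denormalizedDocs) {
    if (doc.company.name === companyName) {
      doc.company.location = newLocation;
      modifiedCount++;
    }
  }
  return modifiedCount;
}

const denormalized = embedCompanies(employees, companies);
const modified = updateCompanyLocation(denormalized, "Google", "Sunnyvale, CA");
console.log(modified); // 2 (one write per Google employee, not one per company)
```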

What about MongoDB’s $lookup operator?

To help tackle its lack of support for JOINs, MongoDB added a new operator called $lookup in the release of MongoDB 3.2. The $lookup operator is an aggregation pipeline operator which performs a left outer join to an unsharded collection in the same database to filter in documents from the “joined” collection for processing. The syntax is as follows:

{

$lookup:

{

from: <collection to join>,

localField: <field from the input documents>,

foreignField: <field from the documents of the "from" collection>,

as: <output array discipline>

}

}

For instance, let’s take a look at our previous example again for the two collections employees and companies:

{

"email" : "[email protected]",

"name" : "John Smith",

"company" : "Google"

},

{

"email" : "[email protected]",

"name" : "Mary Adams",

"company" : "Microsoft"

},

...

{

"name" : "Google",

"stock" : "GOOGL",

"location" : "Mountain View, CA"

},

{

"name" : "Microsoft",

"stock" : "MSFT",

"location" : "Redmond, WA"

},

...

You can then run the following command to join the collections together:

db.employees.aggregate([{

$lookup: {

from: "companies",

localField: "company",

foreignField: "name",

as: "employer"

}

}])

The query would return the following (note that $lookup places the matching documents in an array):

{

"email" : "[email protected]",

"name" : "John Smith",

"company" : "Google",

"employer" : [ {

"name" : "Google",

"stock" : "GOOGL",

"location" : "Mountain View, CA"

} ]

},

{

"email" : "[email protected]",

"name" : "Mary Adams",

"company" : "Microsoft",

"employer" : [ {

"name" : "Microsoft",

"stock" : "MSFT",

"location" : "Redmond, WA"

} ]

},

...

While this helps to alleviate some of the pain of performing JOINs on MongoDB collections, it’s far from a complete solution and has some well-known drawbacks. Most notably, its performance is significantly worse than JOINs in SQL databases like Postgres, and it almost always requires an index to support each JOIN. In addition, even minor changes in your data or aggregation requirements can cause you to have to heavily rewrite the application logic.

Finally, even at peak performance, the functionality is simply very limited: the $lookup operator only allows you to perform left outer joins, and cannot be used on sharded collections. It also cannot work directly with arrays, meaning that you would have to use a separate operator in the aggregation pipeline to first unnest any nested fields. As MongoDB’s CTO Eliot Horowitz wrote during its release, “we’re still concerned that $lookup can be misused to treat MongoDB like a relational database.” At the end of the day, MongoDB is still a document-based NoSQL database, and isn’t optimized for relational data at scale.
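To illustrate the left outer join semantics and the extra unnesting step, here is a sketch that pairs a $lookup stage with an $unwind stage. The pipeline itself is what you might pass to `db.employees.aggregate(...)`; the two helper functions are simplified in-memory stand-ins (equality matches on scalar fields only, and the unwound field is assumed to be an array), not MongoDB’s implementation. The third employee is a hypothetical record with no matching company.

```javascript
// Pipeline as it might be passed to db.employees.aggregate(pipeline).
const pipeline = [
  { $lookup: { from: "companies", localField: "company", foreignField: "name", as: "employer" } },
  { $unwind: { path: "$employer", preserveNullAndEmptyArrays: true } },
];

// Simplified stand-in for $lookup: attach an ARRAY of matching documents.
function lookupStage(docs, foreignDocs, { localField, foreignField, as }) {
  return docs.map((doc) => ({
    ...doc,
    [as]: foreignDocs.filter((f) => f[foreignField] === doc[localField]),
  }));
}

// Simplified stand-in for $unwind: one output document per array element.
function unwindStage(docs, { path, preserveNullAndEmptyArrays }) {
  const field = path.replace(/^\$/, "");
  return docs.flatMap((doc) => {
    const values = doc[field];
    if (!Array.isArray(values) || values.length === 0) {
      if (!preserveNullAndEmptyArrays) return [];
      const { [field]: _dropped, ...rest } = doc; // keep doc, omit the field
      return [rest];
    }
    return values.map((value) => ({ ...doc, [field]: value }));
  });
}

const employees = [
  { name: "John Smith", company: "Google" },
  { name: "Mary Adams", company: "Microsoft" },
  { name: "Sam Lee", company: "Initech" }, // hypothetical: no matching company
];
const companies = [
  { name: "Google", stock: "GOOGL", location: "Mountain View, CA" },
  { name: "Microsoft", stock: "MSFT", location: "Redmond, WA" },
];

const [lookup, unwind] = pipeline;
const looked = lookupStage(employees, companies, lookup.$lookup);
const flattened = unwindStage(looked, unwind.$unwind);
console.log(JSON.stringify(flattened, null, 2));
```

The unmatched employee survives both stages, which is the left outer join behavior; dropping `preserveNullAndEmptyArrays` would silently turn the result into an inner join.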

Speed Up Queries and Perform Fast JOINs using External Indexes

If you’ve tried all the internal optimizations you can think of within MongoDB and your queries are still too slow, it may be time for an external index. Using an external index, your data can be indexed and queried from an entirely separate database with a completely different set of strengths and limitations. External indexes are tremendously helpful not only for decreasing load on your primary OLTP databases, but also for performing certain complex queries that are not ideal on a NoSQL database like MongoDB (such as aggregation pipelines using $lookup and $unwind operators), but may be ideal when executed in the chosen external index.

Exceed Performance Limitations using Rockset as an External Index

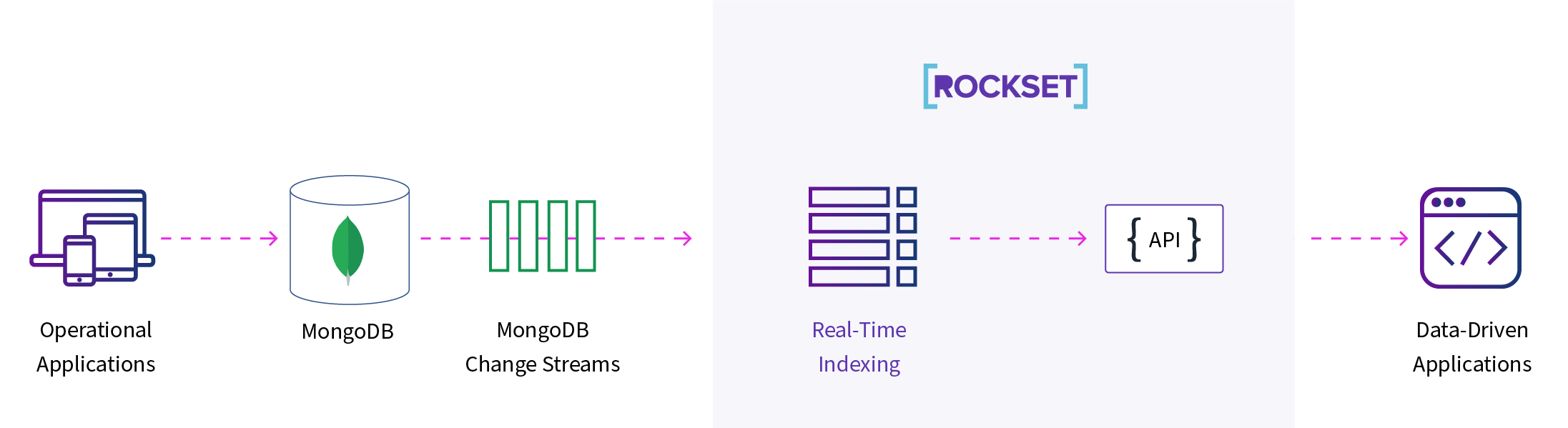

Here at Rockset, we’ve partnered with MongoDB and built a fully managed connector with our real-time indexing technology that enables you to perform fast JOINs and aggregations at scale. Rockset is a real-time serverless database which can be used as a speed layer on top of MongoDB Atlas, allowing you to perform SQL aggregations and JOINs in real-time.

Using our MongoDB integration, you can get set up in minutes: simply click and connect Rockset with your MongoDB collections by enabling proper read permissions, and the rest is automatically done for you. Rockset will then sync your data into our real-time database using our schemaless ingest technology, and then automatically create indexes for you on every single field in your collection, including nested fields. Furthermore, Rockset will also automatically stay up-to-date with your MongoDB collections by syncing within seconds anytime you update your data.

Once your data is in Rockset, you will have access to Rockset’s Converged Index™ technology and query optimizer. This means that Rockset enables full SQL support including fast search, aggregations, and JOIN queries at scale. Rockset is purpose-built for complex aggregations and JOINs on nested data, with no restrictions on covering indexes. Additionally, you will also get faster queries using Rockset’s disaggregated Aggregator-Leaf-Tailer Architecture enabling real-time performance for both ingesting and querying.

Enable Full SQL Support for Aggregations and JOINs on MongoDB

Let’s re-examine our earlier example where we used the $lookup aggregation pipeline operator in MongoDB to simulate a SQL LEFT OUTER JOIN. We used this command to perform the join:

db.employees.aggregate([{

$lookup: {

from: "companies",

localField: "company",

foreignField: "name",

as: "employer"

}

}])

With full SQL support in Rockset, you can simply use your familiar SQL syntax to perform the same join:

SELECT

e.email,

e.name,

e.company AS employer,

c.stock,

c.location

FROM

employees e

LEFT JOIN

companies c

ON e.company = c.name;

Let’s look at another example aggregation in MongoDB where we GROUP by two fields, COUNT the total number of relevant rows, and then SORT the results:

db.rocks.aggregate([{

"$group": {

_id: {

color: "$color",

type: "$type"

},

count: { $sum: 1 }

}}, {

$sort: { "_id.type": 1 }

}])

The same command can be performed in Rockset using the following SQL syntax:

SELECT

color,

type,

COUNT(*)

FROM

rocks

GROUP BY

color,

type

ORDER BY

type;
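As a sanity check on what both formulations compute, the grouping logic can be sketched in plain JavaScript. The sample documents and the function name are hypothetical, and this is only an in-memory illustration of the GROUP BY / COUNT / ORDER BY semantics, not how either database executes the query.

```javascript
// Hypothetical sample documents from the rocks collection.
const rocks = [
  { color: "black", type: "obsidian" },
  { color: "black", type: "basalt" },
  { color: "white", type: "marble" },
  { color: "black", type: "basalt" },
];

// GROUP BY color, type; COUNT(*); ORDER BY type.
function countByColorAndType(docs) {
  const counts = new Map();
  for (const { color, type } of docs) {
    const key = JSON.stringify([color, type]); // composite group key
    counts.set(key, (counts.get(key) || 0) + 1);
  }
  return [...counts.entries()]
    .map(([key, count]) => {
      const [color, type] = JSON.parse(key);
      return { color, type, count };
    })
    .sort((a, b) => (a.type < b.type ? -1 : a.type > b.type ? 1 : 0));
}

const grouped = countByColorAndType(rocks);
console.log(grouped);
```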

Getting Started with Rockset on MongoDB

Decrease load on your primary MongoDB instance by offloading expensive operations to Rockset, while also enabling significantly faster queries. On top of this, you can even integrate Rockset with data sources outside of MongoDB (including data lakes like S3/GCS and data streams like Kafka/Kinesis) to join your data together from multiple external sources and query them at once.

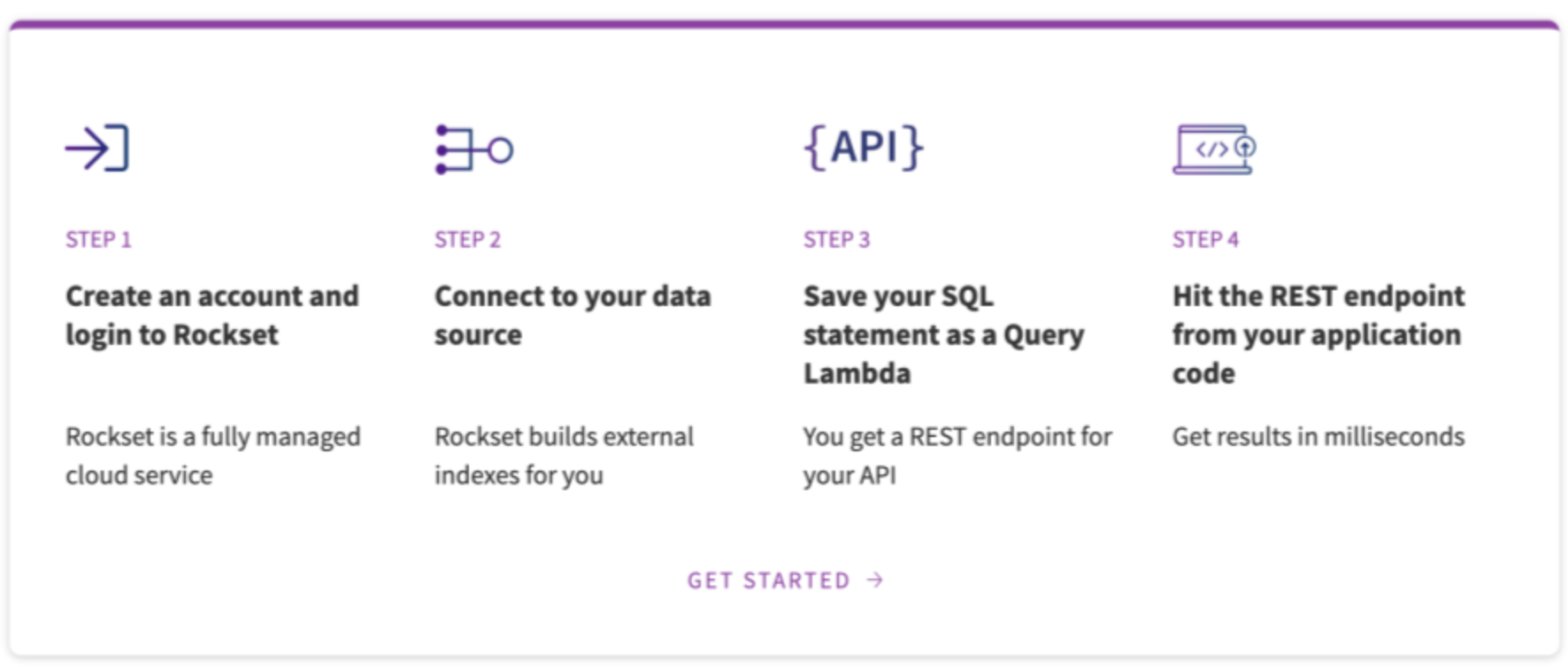

If you’re interested in learning more, be sure to check out our full MongoDB.live session where we go into exactly how Rockset continuously indexes your data from MongoDB. You can also view our tech talk on Scaling MongoDB to hear about more strategies for maintaining performance at scale. And whenever you’re ready to try it out yourself, watch our step-by-step walkthrough and then create your Rockset account!
