Flex Consumption delivers fast and large scale-out features on a serverless model and supports long function execution times, private networking, instance size selection, and concurrency control.

GitHub is the home of the world’s software developers, with more than 100 million developers and 420 million total repositories across the platform. To keep everything running smoothly and securely, GitHub collects a tremendous amount of data through an in-house pipeline made up of several components. But even though it was built for fault tolerance and scalability, the ongoing growth of GitHub led the company to reevaluate the pipeline to ensure it meets both current and future demands.

“We had a scalability problem. At the moment, we collect about 700 terabytes a day of data, which is heavily used for detecting malicious behavior against our infrastructure and for troubleshooting. This internal system was limiting our growth.”

—Stephan Miehe, GitHub Senior Director of Platform Security

GitHub worked with its parent company, Microsoft, to find a solution. To process the event stream at scale, the GitHub team built a function app that runs in Azure Functions Flex Consumption, a plan recently released for public preview. Flex Consumption delivers fast and large scale-out features on a serverless model and supports long function execution times, private networking, instance size selection, and concurrency control.

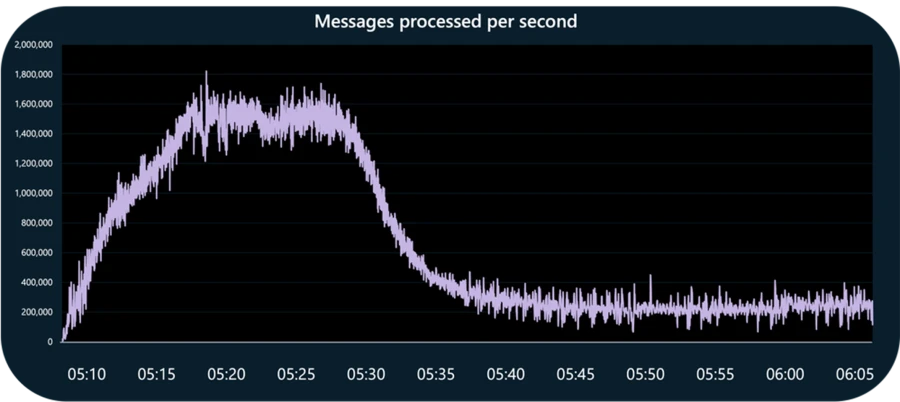

In a recent test, GitHub sustained 1.6 million events per second using one Flex Consumption app triggered from a network-restricted event hub.

“What really matters to us is that the app scales up and down based on demand. Azure Functions Flex Consumption is very appealing to us because of how it dynamically scales based on the number of messages that are queued up in Azure Event Hubs.”

—Stephan Miehe, GitHub Senior Director of Platform Security

A look back

GitHub’s problem lay in an internal messaging app orchestrating the flow between the telemetry producers and consumers. The app was originally deployed using Java-based binaries and Azure Event Hubs. But as it began handling up to 460 gigabytes (GB) of events per day, the app was reaching its design limits, and its availability began to degrade.

For best performance, each consumer of the old platform required its own environment and time-consuming manual tuning. In addition, the Java codebase was prone to breakage and hard to troubleshoot, and those environments were getting expensive to maintain as the compute overhead grew.

“We couldn’t accept the risk and scalability challenges of the current solution,” Miehe says. He and his team began to weigh the alternatives. “We were already using Azure Event Hubs, so it made sense to explore other Azure services. Given the simple nature of our need (HTTP POST request), we wanted something serverless that carries minimal overhead.”

Familiar with serverless code development, the team focused on similar Azure-native solutions and arrived at Azure Functions.

“Both platforms are well known for being good for simple data crunching at large scale, but we don’t want to migrate to another product in six months because we’ve reached a ceiling.”

—Stephan Miehe, GitHub Senior Director of Platform Security

A function app can automatically scale the queue based on the amount of logging traffic. The question was how much it could scale. At the time GitHub began working with the Azure Functions team, the Flex Consumption plan had just entered private preview. Based on a new underlying architecture, Flex Consumption supports up to 1,000 partitions and provides a faster target-based scaling experience. The product team built a proof of concept that scaled to more than double the legacy platform’s largest topic at the time, showing that Flex Consumption could handle the pipeline.

“Azure Functions Flex Consumption gives us a serverless solution with 100% of the capacity we need now, plus all the headroom we need as we grow.”

—Stephan Miehe, GitHub Senior Director of Platform Security

Making a good solution great

GitHub joined the private preview and worked closely with the Azure Functions product team to see what else Flex Consumption could do. The new function app is written in Python to consume events from Event Hubs. It consolidates large batches of messages into one large message and sends it on to the consumers for processing.
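The consolidation step can be pictured with a minimal Python sketch. The article does not describe GitHub's actual message format, so the newline-delimited framing and the `consolidate` and `split` helper names below are assumptions made purely for illustration.

```python
from typing import Iterable, List


def consolidate(events: Iterable[bytes]) -> bytes:
    """Merge many small event payloads into one newline-delimited payload."""
    # Assumption: individual payloads contain no raw newline bytes,
    # so a newline is a safe record separator.
    return b"\n".join(events)


def split(payload: bytes) -> List[bytes]:
    """Inverse of consolidate, as a downstream consumer might apply it."""
    return payload.split(b"\n") if payload else []
```

A consumer that receives the single large message can call `split` to recover the individual events, so the producer pays the per-request overhead once per batch rather than once per event.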

Finding the right number for each batch took some experimentation, as every function execution has at least a small percentage of overhead. At peak usage times, the platform will process more than 1 million events per second. Knowing this, the GitHub team needed to find the sweet spot in function execution. Too high a number and there’s not enough memory to process the batch. Too small a number and it takes too many executions to process the batch, which slows performance.

The right number proved to be 5,000 messages per batch. “Our execution times are already incredibly low, in the 100–200 millisecond range,” Miehe reports.
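To put those figures in perspective, here is a rough back-of-the-envelope calculation in Python. It assumes every execution receives a full batch and that the load is steady, neither of which the article states, and the function names are illustrative only.

```python
def executions_per_second(events_per_second: int, batch_size: int) -> int:
    """Executions needed each second if every batch is full (ceiling division)."""
    return -(-events_per_second // batch_size)


def executions_in_flight(execs_per_second: int, exec_time_ms: int) -> float:
    """Little's law: work in flight = arrival rate x time spent in the system."""
    return execs_per_second * exec_time_ms / 1000


rate = executions_per_second(1_000_000, 5_000)   # 200 executions per second
low = executions_in_flight(rate, 100)            # 20.0 running at once
high = executions_in_flight(rate, 200)           # 40.0 running at once
```

Under these assumptions, 1 million events per second at 5,000 events per batch works out to about 200 executions per second, with roughly 20 to 40 executions in flight at any moment.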

This solution has built-in flexibility. The team can vary the number of messages per batch for different use cases and can trust that the target-based scaling capabilities will scale out to the right number of instances. In this scaling model, Azure Functions determines the number of unprocessed messages on the event hub and then immediately scales to an appropriate instance count based on the batch size and partition count. At the upper bound, the function app scales up to one instance per event hub partition, which can work out to be 1,000 instances for very large event hub deployments.
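The scaling rule described above can be approximated in a few lines of Python. This is a simplified sketch, not the Azure Functions scale controller: it assumes the per-instance target equals the batch size, and the real service applies additional rules that are not covered here. The function name `target_instance_count` is hypothetical.

```python
def target_instance_count(
    unprocessed_events: int,
    target_per_instance: int,
    partition_count: int,
) -> int:
    """Estimate the instance count for a given event hub backlog.

    Simplified model: one instance per `target_per_instance` pending events,
    capped at one instance per partition.
    """
    if unprocessed_events <= 0:
        return 0  # nothing pending, so the app can scale in fully
    desired = -(-unprocessed_events // target_per_instance)  # ceiling division
    return min(desired, partition_count)
```

With a backlog of 12,000 events, a target of 5,000 per instance, and 32 partitions, the sketch returns 3. With 10 million pending events and 1,000 partitions it returns 1,000, because the partition count caps the result.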

“If other customers want to do something similar and trigger a function app from Event Hubs, they need to be very deliberate in the number of partitions to use based on the size of their workload. If you don’t have enough, you’ll constrain consumption.”

—Stephan Miehe, GitHub Senior Director of Platform Security

Azure Functions supports several event sources in addition to Event Hubs, including Apache Kafka, Azure Cosmos DB, Azure Service Bus queues and topics, and Azure Queue Storage.

Reaching behind the virtual network

The function as a service model frees developers from the overhead of managing many infrastructure-related tasks. But even serverless code can be constrained by the limitations of the networks where it runs. Flex Consumption addresses the issue with improved virtual network (VNet) support. Function apps can be secured behind a VNet and can reach other services secured behind a VNet, all without degrading performance.

As an early adopter of Flex Consumption, GitHub benefited from improvements being made behind the scenes to the Azure Functions platform. Flex Consumption runs on Legion, a newly architected, internal platform as a service (PaaS) backbone that improves network capabilities and performance for high-demand scenarios. For example, Legion is capable of injecting compute into an existing VNet in milliseconds: when a function app scales up, each new compute instance that is allocated starts up and is ready for execution, including outbound VNet connectivity, within 624 milliseconds (ms) at the 50th percentile and 1,022 ms at the 90th percentile. That’s how GitHub’s message processing app can reach Event Hubs secured behind a virtual network without incurring significant delays. In the past 18 months, the Azure Functions platform has reduced cold start latency by approximately 53% across all regions and for all supported languages and platforms.

Working through challenges

This project pushed the boundaries for both the GitHub and Azure Functions engineering teams. Together, they worked through several challenges to achieve this level of throughput:

- In the first test run, GitHub had so many messages pending for processing that it caused an integer overflow in the Azure Functions scaling logic, which was immediately fixed.

- In the second run, throughput was severely limited due to a lack of connection pooling. The team rewrote the function code to correctly reuse connections from one execution to the next.

- At about 800,000 events per second, the system appeared to be throttled at the network level, but the cause was unclear. After weeks of investigation, the Azure Functions team found a bug in the receive buffer configuration in the Azure SDK Advanced Message Queuing Protocol (AMQP) transport implementation. This was promptly fixed by the Azure SDK team and allowed GitHub to push beyond 1 million events per second.
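The connection reuse fix from the second run follows a common serverless pattern: create the client once per worker process and share it across executions. The sketch below illustrates that pattern only. The article does not show GitHub's code, so `get_client`, `handle_batch`, and the `factory` argument are hypothetical names, and the client is assumed to expose a `send` method.

```python
import threading
from typing import Any, Callable, Optional

# Module-level state outlives a single function execution, so a client
# stored here is reused by later executions in the same worker process.
_client: Optional[Any] = None
_client_lock = threading.Lock()


def get_client(factory: Callable[[], Any]) -> Any:
    """Return the shared client, creating it on first use only."""
    global _client
    if _client is None:
        with _client_lock:
            if _client is None:  # re-check once the lock is held
                _client = factory()
    return _client


def handle_batch(payload: bytes, factory: Callable[[], Any]) -> Any:
    """Send one consolidated payload over the shared connection."""
    client = get_client(factory)
    return client.send(payload)
```

In a real function app, `factory` would build whatever outbound client the app needs, such as an HTTP session with its own connection pool, so that repeated executions avoid paying the connection setup cost each time.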

Best practices in meeting a throughput milestone

With more power comes more responsibility, and Miehe acknowledges that Flex Consumption gave his team “a lot of knobs to turn,” as he put it. “There’s a balance between flexibility and the effort you have to put in to set it up right.”

To that end, he recommends testing early and often, a familiar part of the GitHub pull request culture. The following best practices helped GitHub meet its milestones:

- Batch it if you can: Receiving messages in batches boosts performance. Processing thousands of event hub messages in a single function execution significantly improves the system throughput.

- Experiment with batch size: Miehe’s team tested batches as large as 100,000 events and as small as 100 before landing on 5,000 as the max batch size for fastest execution.

- Automate your pipelines: GitHub uses Terraform to build the function app and the Event Hubs instances. Provisioning both components together reduces the amount of manual intervention needed to manage the ingestion pipeline. Plus, Miehe’s team could iterate incredibly quickly in response to feedback from the product team.

The GitHub team continues to run the new platform in parallel with the legacy solution while it monitors performance and determines a cutover date.

“We’ve been running them side by side deliberately to find where the ceiling is,” Miehe explains.

The team was delighted. As Miehe says, “We’re pleased with the results and will soon be sunsetting all the operational overhead of the old solution.”

Explore solutions with Azure Functions
