
Inception of LLMs – NLP and Neural Networks

The creation of Large Language Models didn’t happen overnight. Remarkably, the first concept of language models started with rule-based systems dubbed Natural Language Processing. These systems follow predefined rules to make decisions and infer conclusions from text input. They rely on if-else statements that process keyword information and generate predetermined outputs. Think of a decision tree where the output is a predetermined response depending on whether the input contains X, Y, Z, or none of them. For example: if the input includes the keyword “mother,” output “How is your mother?” Else, output “Can you elaborate on that?”
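
The keyword rule described above can be sketched in a few lines of Python. This is a minimal illustration only; the function name `rule_based_reply` is made up for this example and does not come from any particular historical system.

```python
def rule_based_reply(user_input: str) -> str:
    """Return a canned response chosen by simple keyword rules."""
    text = user_input.lower()
    # Rule 1: the keyword "mother" triggers a fixed follow-up question.
    if "mother" in text:
        return "How is your mother?"
    # Fallback rule: no keyword matched, so ask for more detail.
    return "Can you elaborate on that?"


if __name__ == "__main__":
    print(rule_based_reply("My mother called me yesterday."))
    print(rule_based_reply("I had a strange day."))
```

Every response has to be written by hand ahead of time, which is why such systems cannot handle inputs their authors did not anticipate.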

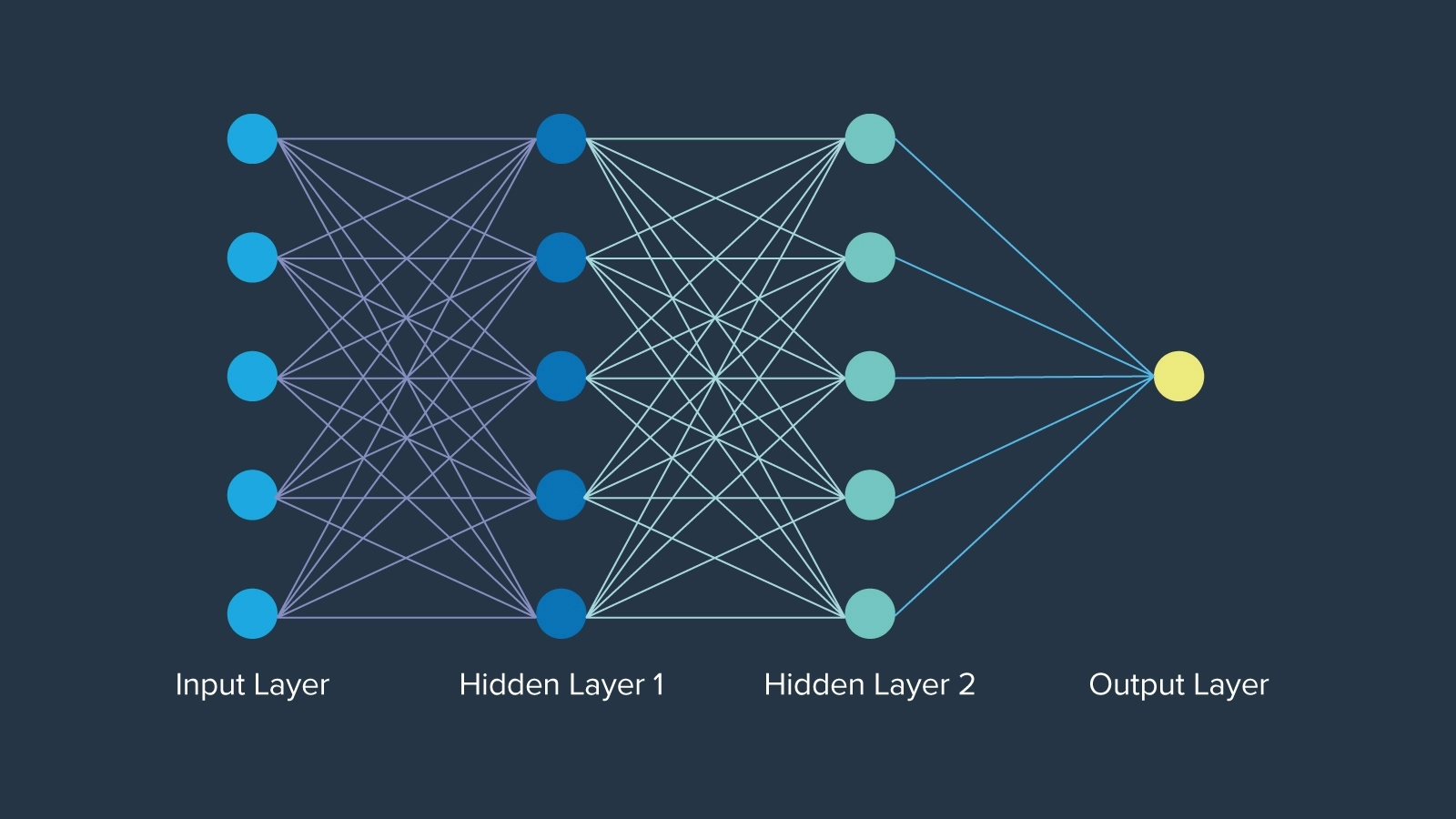

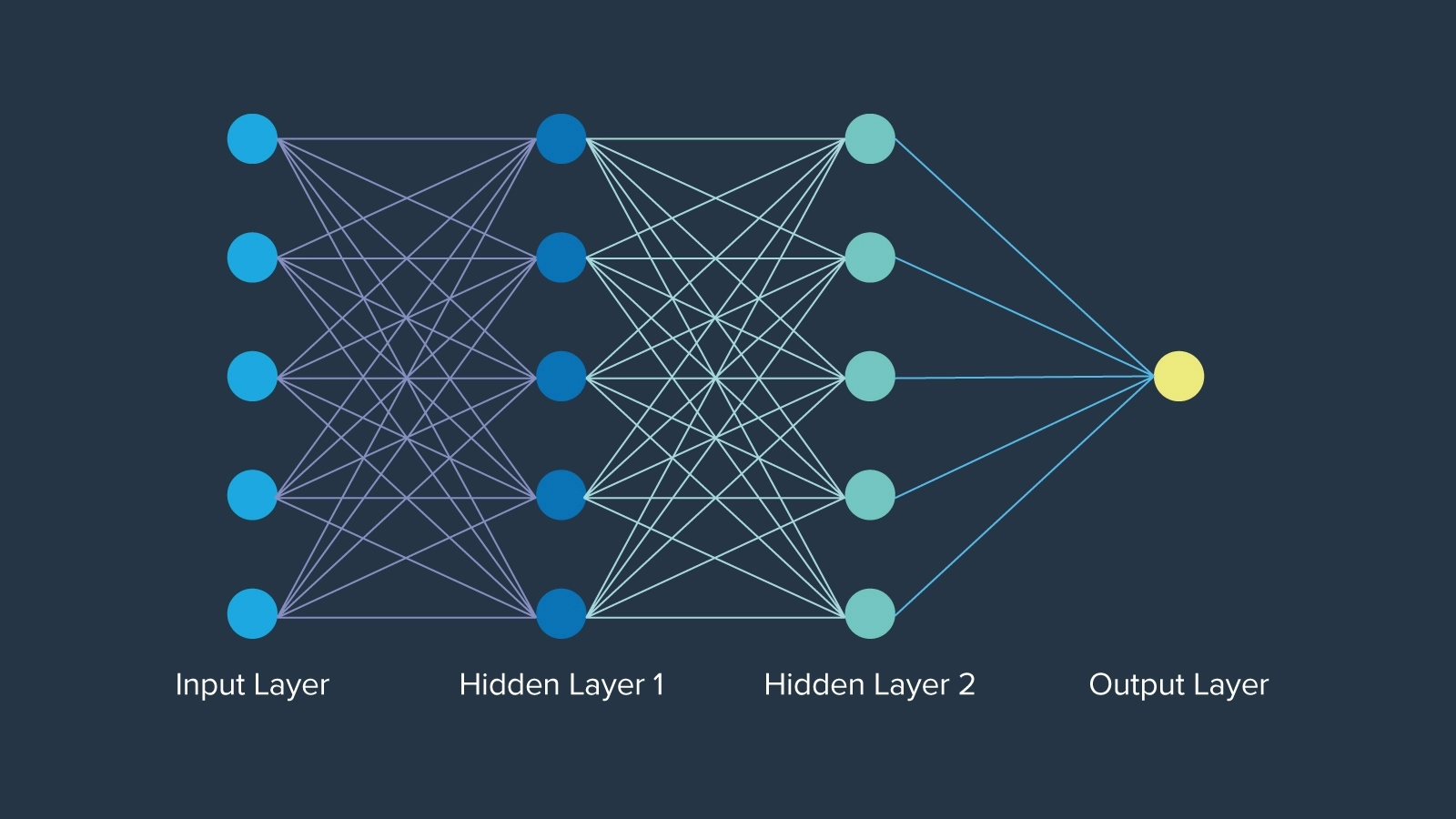

The biggest early advancement was neural networks, first introduced in 1943 by neurophysiologist Warren McCulloch and logician Walter Pitts and inspired by how neurons function in the human brain. Neural networks even pre-date the term “artificial intelligence” by roughly 12 years. The neurons in each layer are organized in a specific manner, and each connection between nodes carries a weight that determines its importance in the network. Ultimately, neural networks opened previously closed doors, creating the foundation on which AI will forever be built.
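
To make the idea of weights concrete, here is a minimal sketch of a single threshold neuron in the spirit of the 1943 model. The weights and threshold are hand-picked for illustration (they make the neuron behave like a logical AND); modern networks learn these values from data instead.

```python
def threshold_neuron(inputs, weights, threshold):
    """Fire (return 1) when the weighted sum of inputs reaches the threshold."""
    weighted_sum = sum(x * w for x, w in zip(inputs, weights))
    return 1 if weighted_sum >= threshold else 0


if __name__ == "__main__":
    # Illustrative values: with weights [1, 1] and threshold 2,
    # the neuron only fires when both inputs are active.
    for a in (0, 1):
        for b in (0, 1):
            print(a, b, threshold_neuron([a, b], [1, 1], 2))
```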

Evolution of LLMs – Embeddings, LSTM, Attention & Transformers

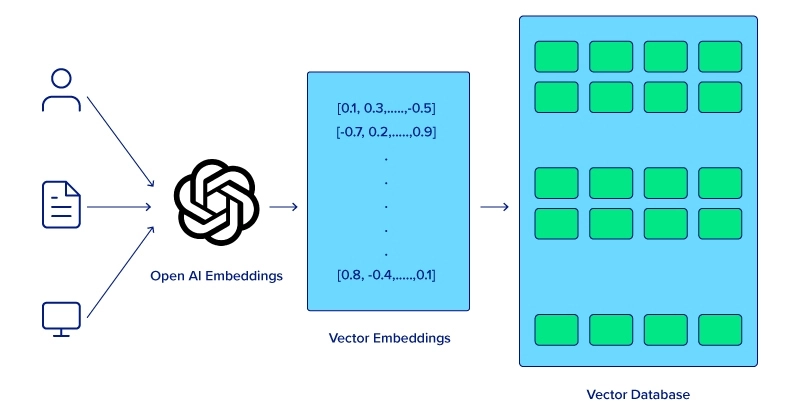

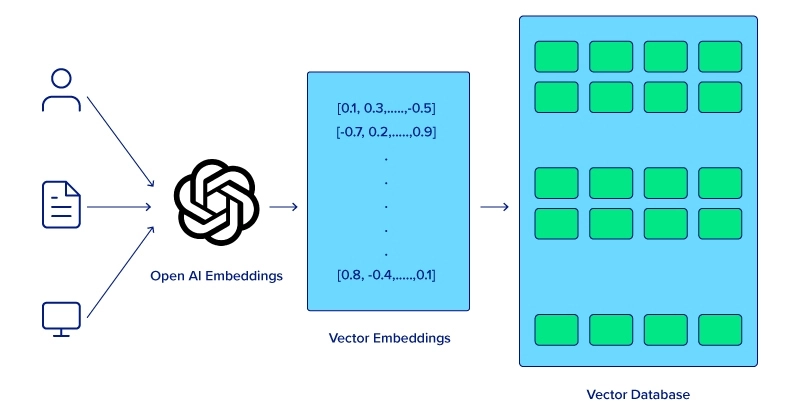

Computers can’t comprehend the meanings of words working together in a sentence the same way humans can. To improve computer comprehension for semantic analysis, a word embedding technique must first be applied: each word is mapped to a numeric vector, which allows models to capture the relationships between neighboring words and leads to improved performance in various NLP tasks. However, there also needs to be a way to store word embeddings in memory.
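
As a rough illustration of how embeddings encode relationships, the sketch below compares word vectors with cosine similarity. The three-dimensional vectors are invented for this example; real embeddings are learned from text and typically have hundreds of dimensions.

```python
import math

# Toy vectors, hand-picked for illustration only (not learned from data).
EMBEDDINGS = {
    "king": [0.9, 0.8, 0.1],
    "queen": [0.9, 0.7, 0.2],
    "apple": [0.1, 0.2, 0.9],
}


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: values near 1.0 mean similar direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


if __name__ == "__main__":
    print(cosine_similarity(EMBEDDINGS["king"], EMBEDDINGS["queen"]))
    print(cosine_similarity(EMBEDDINGS["king"], EMBEDDINGS["apple"]))
```

With these toy values, "king" scores closer to "queen" than to "apple", which is the kind of relationship a trained embedding is expected to capture.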

Long Short-Term Memory (LSTM) and Gated Recurrent Units (GRUs) were great leaps within neural networks, with the capability of handling sequential data more effectively than traditional neural networks. While LSTMs are now rarely used for state-of-the-art language models, they paved the way for the more complex language understanding and generation tasks that eventually led to the transformer model.

The Modern LLM – Attention, Transformers, and LLM Variants

The introduction of the attention mechanism was a game-changer, enabling models to focus on different parts of an input sequence when making predictions. Transformer models, introduced with the seminal paper “Attention Is All You Need” in 2017, leveraged the attention mechanism to process entire sequences simultaneously, vastly improving both efficiency and performance. The eight Google scientists didn’t realize the ripples their paper would make in creating present-day AI.
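
The core operation from that paper, scaled dot-product attention, computes softmax(QKᵀ / √d_k)·V. Below is a minimal pure-Python sketch of it for small lists of vectors. It leaves out everything else a transformer needs (learned projections, multiple heads, masking), and the input values are arbitrary.

```python
import math


def softmax(scores):
    """Turn raw scores into weights that are positive and sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors."""
    d_k = len(keys[0])
    outputs = []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in keys
        ]
        weights = softmax(scores)
        # Output is the attention-weighted average of the value vectors.
        out = [
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ]
        outputs.append(out)
    return outputs


if __name__ == "__main__":
    q = [[1.0, 0.0]]
    k = [[1.0, 0.0], [0.0, 1.0]]
    v = [[10.0, 0.0], [0.0, 10.0]]
    print(attention(q, k, v))
```

Each query is scored against all keys in one pass, so no position has to wait for the previous one to be processed, which is what lets transformers handle a whole sequence at once.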

Following the paper, Google’s BERT (2018) was developed and touted as the baseline for all NLP tasks, serving as an open-source model used in numerous projects that allowed the AI community to build and grow. Its knack for contextual understanding, its pre-trained nature and option for fine-tuning, and its demonstration of transformer models set the stage for larger models.

Alongside BERT, OpenAI released GPT-1, the first iteration of its transformer model. GPT-1 (2018) started with 117 million parameters, followed by GPT-2 (2019) with a massive leap to 1.5 billion parameters, and growth continued with GPT-3 (2020), boasting 175 billion parameters. OpenAI’s groundbreaking chatbot ChatGPT, based on GPT-3.5, was released two years later on Nov. 30, 2022, sparking a craze and truly democratizing access to powerful AI models.

What Technological Advancements are Driving the Future of LLMs?

Advances in hardware, improvements in algorithms and methodologies, and integration of multi-modality all contribute to the advancement of large language models. As the industry finds new ways to utilize LLMs effectively, continued development will tailor itself to each application and eventually completely change the landscape of computing.

Advances in Hardware

The simplest and most direct method for improving LLMs is to improve the actual hardware that the model runs on. The development of specialized hardware like Graphics Processing Units (GPUs) significantly accelerated the training and inference of large language models. GPUs, with their parallel processing capabilities, have become essential for handling the vast amounts of data and complex computations required by LLMs.

OpenAI uses NVIDIA GPUs to power its GPT models and was one of the first NVIDIA DGX customers. The relationship spans from the emergence of modern AI to today: NVIDIA’s CEO hand-delivered both the first NVIDIA DGX-1 and the latest NVIDIA DGX H200. These systems combine large amounts of memory with parallel computing for training, deployment, and inference performance.

Improvements in Algorithms and Architectures

The transformer architecture already underpins today’s LLMs, and its introduction has been pivotal to their advancement. Its ability to process entire sequences simultaneously rather than sequentially has dramatically improved model efficiency and performance.

Having said that, more can still be expected of the transformer architecture and of how it can continue to evolve Large Language Models.

- Continuous refinements to the transformer model, including better attention mechanisms and optimization techniques, will lead to more accurate and faster models.

- Research into novel architectures, such as sparse transformers and efficient attention mechanisms, aims to reduce computational requirements while maintaining or enhancing performance.

Integration of Multimodal Inputs

The future of LLMs lies in their ability to handle multimodal inputs, integrating text, images, audio, and potentially other data types to create richer and more contextually aware models. Multimodal models like OpenAI’s CLIP and DALL-E have demonstrated the potential of combining visual and textual information, enabling applications in image generation, captioning, and more.

These integrations allow LLMs to perform even more complex tasks, such as comprehending context from both text and visual cues, which ultimately makes them more versatile and powerful.

Future of LLMs

The advancements haven’t stopped, and more are coming as LLM creators plan to incorporate even more innovative techniques and systems into their work. Not every improvement in LLMs requires more demanding computation or deeper conceptual understanding. One key enhancement is developing smaller, more user-friendly models.

While these models may not match the effectiveness of “Mammoth LLMs” like GPT-4 and LLaMA 3, it’s important to remember that not all tasks require massive and complex computations. Despite their smaller size, advanced models like Mixtral 8x7B and Mistral 7B can still deliver impressive performance. Here are some key areas and technologies expected to drive the development and improvement of LLMs:

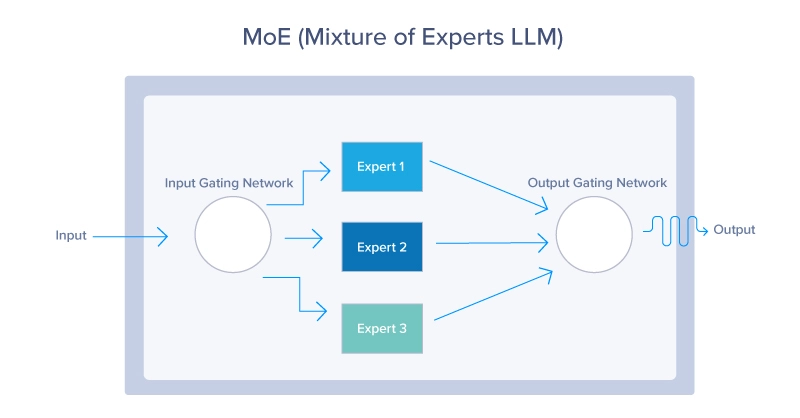

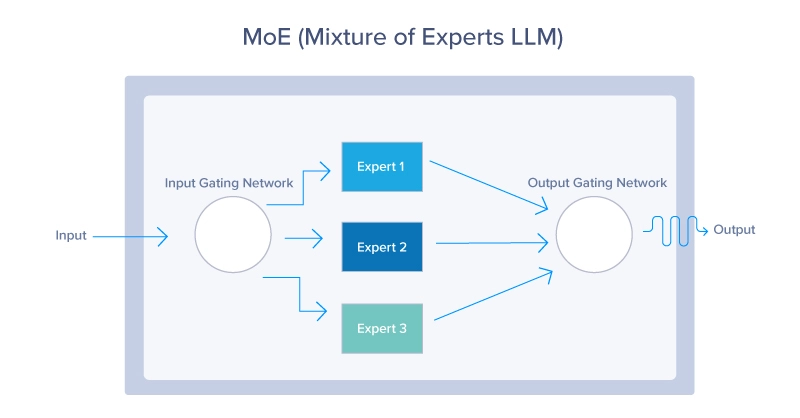

1. Mixture of Experts (MoE)

MoE models use a dynamic routing mechanism to activate only a subset of the model’s parameters for each input. This approach allows the model to scale efficiently, activating the most relevant “experts” based on the input context, as seen below. MoE models offer a way to scale up LLMs without a proportional increase in computational cost. By leveraging only a small portion of the entire model at any given time, these models can use fewer resources while still providing excellent performance.

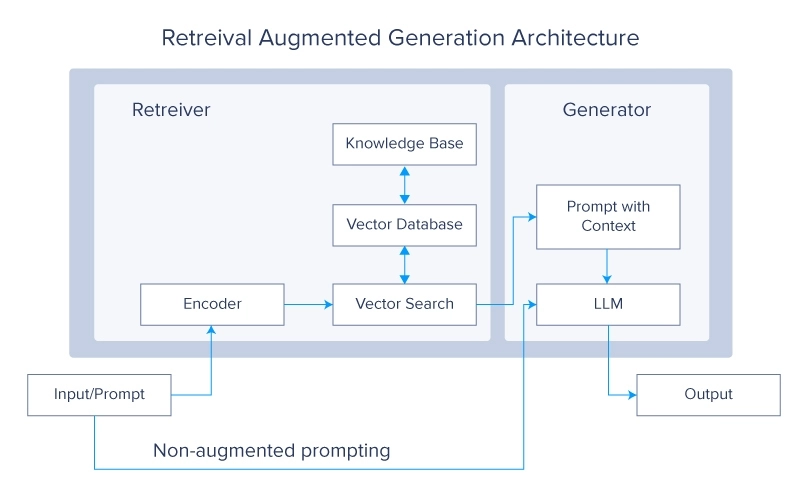

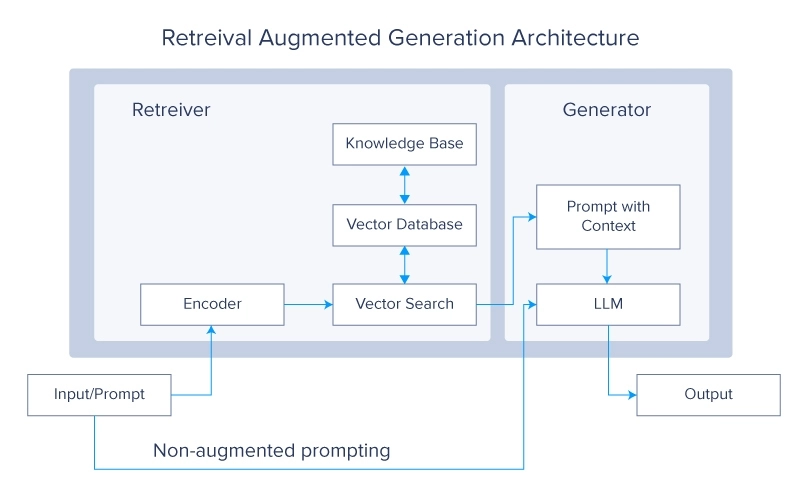
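
The routing idea behind MoE can be sketched with a toy top-k gate. Everything here is a simplifying assumption for illustration: the "experts" are trivial scaling functions, and the gate scores are passed in directly, whereas a real MoE layer computes them from the input with a learned router.

```python
import math


def softmax(scores):
    """Normalize scores into weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def make_expert(scale):
    """Hypothetical expert: multiplies its input by a fixed factor."""
    return lambda x: [scale * xi for xi in x]


EXPERTS = [make_expert(s) for s in (1.0, 2.0, 3.0, 4.0)]


def moe_forward(x, gate_logits, top_k=2):
    """Run only the top_k highest-scoring experts and mix their outputs."""
    ranked = sorted(
        range(len(gate_logits)), key=lambda i: gate_logits[i], reverse=True
    )
    chosen = ranked[:top_k]
    # Renormalize the gate scores over the chosen experts only.
    weights = softmax([gate_logits[i] for i in chosen])
    output = [0.0] * len(x)
    for w, i in zip(weights, chosen):
        expert_out = EXPERTS[i](x)  # the other experts are never evaluated
        output = [o + w * e for o, e in zip(output, expert_out)]
    return output, chosen


if __name__ == "__main__":
    print(moe_forward([1.0, 1.0], [0.1, 2.0, 0.3, 1.5], top_k=2))
```

With four experts and `top_k=2`, only half of the expert computation runs for a given input, which is the source of the savings described above.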

2. Retrieval-Augmented Generation (RAG) Systems

Retrieval-Augmented Generation systems are currently a very popular topic in the LLM community. The concept questions why you should train an LLM on more data when you can simply have it retrieve the desired data from an external source. That data is then used to generate the final answer.

RAG systems enhance LLMs by retrieving relevant information from large external databases during the generation process. This integration allows the model to access and incorporate up-to-date and domain-specific knowledge, improving its accuracy and relevance. Combining the generative capabilities of LLMs with the precision of retrieval systems results in a powerful hybrid model that can generate high-quality responses while staying informed by external data sources.
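
A stripped-down sketch of the retrieve-then-generate flow is shown below. It is an assumption-heavy illustration: retrieval uses naive word overlap rather than the vector search a production system would likely use, the document list is a stand-in for an external database, and the final generation step is omitted, so the code stops at building the prompt that would be sent to an LLM.

```python
# Stand-in for an external knowledge source.
DOCUMENTS = [
    "The DGX-1 is a GPU server built by NVIDIA.",
    "Mixture of Experts models activate only some parameters per input.",
    "BERT was released by Google in 2018.",
]


def tokenize(text):
    """Lowercase, strip basic punctuation, and split into a set of words."""
    cleaned = text.lower().replace(".", "").replace("?", "")
    return set(cleaned.split())


def retrieve(query, documents, top_k=1):
    """Rank documents by how many words they share with the query."""
    query_words = tokenize(query)
    ranked = sorted(
        documents,
        key=lambda doc: len(query_words & tokenize(doc)),
        reverse=True,
    )
    return ranked[:top_k]


def build_prompt(query, documents):
    """Place the retrieved text in front of the question for the model to use."""
    context = "\n".join(retrieve(query, documents))
    return f"Context:\n{context}\n\nQuestion: {query}\nAnswer:"


if __name__ == "__main__":
    print(build_prompt("When was BERT released?", DOCUMENTS))
```

Updating what the system knows then becomes a matter of editing the document store rather than retraining the model.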

3. Meta-Learning

Meta-learning approaches allow LLMs to learn how to learn, enabling them to adapt quickly to new tasks and domains with minimal training.

The concept of meta-learning depends on several key concepts such as:

- Few-Shot Learning: LLMs are trained to understand and perform new tasks with only a few examples, significantly reducing the amount of data required for effective learning. This makes them highly versatile and efficient in handling diverse scenarios.

- Self-Supervised Learning: LLMs use large amounts of unlabelled data to generate labels and learn representations. This form of learning allows models to build a rich understanding of language structure and semantics, which is then fine-tuned for specific applications.

- Reinforcement Learning: In this approach, LLMs learn by interacting with their environment and receiving feedback in the form of rewards or penalties. This helps models optimize their actions and improve decision-making processes over time.

Conclusion

LLMs are marvels of modern technology. They’re complex in their functioning, massive in size, and groundbreaking in their advancements. In this article, we explored the future potential of these extraordinary developments. Starting from their early beginnings in the world of artificial intelligence, we also delved into key innovations like Neural Networks and Attention Mechanisms.

We then examined a multitude of techniques for enhancing these models, including advancements in hardware, refinements in their internal mechanisms, and the development of new architectures. By now, we hope you’ve gained a clearer and more comprehensive understanding of LLMs and their promising trajectory in the near future.

Kevin Vu manages the Exxact Corp blog and works with many of its talented authors who write about different aspects of Deep Learning.
