Kubernetes at Rockset

At Rockset, we use Kubernetes (k8s) for cluster orchestration. It runs all our production microservices, from our ingest workers to our query-serving tier. In addition to hosting all of the production infrastructure, every engineer has their own Kubernetes namespace and dedicated resources that we use to locally deploy and test new versions of code and configuration. This sandboxed development environment allows us to make software releases confidently multiple times every week. In this blog post, we will explore a tool we built internally that gives us visibility into Kubernetes events, an excellent source of information about the state of the system, which we find useful for troubleshooting the system and understanding its long-term health.

Why We Care About Kubernetes Events

Kubernetes emits events whenever some change occurs in any of the resources that it is managing. These events often contain important metadata about the entity that triggered them, the type of event (Normal or Warning), and the cause. This data is typically stored in etcd and made available when you run certain kubectl commands.

    $ kubectl describe pods jobworker-c5dc75db8-7m5ln
    ...
    ...
    ...
    Events:
      Type     Reason     Age              From                                                    Message
      ----     ------     ----             ----                                                    -------
      Normal   Scheduled  7m               default-scheduler                                       Successfully assigned master/jobworker-c5dc75db8-7m5ln to ip-10-202-41-139.us-west-2.compute.internal
      Normal   Pulling    6m               kubelet, ip-XXX-XXX-XXX-XXX.us-west-2.compute.internal  pulling image "..."
      Normal   Pulled     6m               kubelet, ip-XXX-XXX-XXX-XXX.us-west-2.compute.internal  Successfully pulled image "..."
      Normal   Created    6m               kubelet, ip-XXX-XXX-XXX-XXX.us-west-2.compute.internal  Created container
      Normal   Started    6m               kubelet, ip-XXX-XXX-XXX-XXX.us-west-2.compute.internal  Started container
      Warning  Unhealthy  2m (x2 over 2m)  kubelet, ip-XXX-XXX-XXX-XXX.us-west-2.compute.internal  Readiness probe failed: Get http://XXX.XXX.XXX.XXX:YYY/healthz: dial tcp connect: connection refused

These events help us understand what happened behind the scenes when a particular entity entered a specific state. Another way to see an aggregated list of all events is to run kubectl get events.

    $ kubectl get events
    LAST SEEN   TYPE     REASON      KIND   MESSAGE
    5m          Normal   Scheduled   Pod    Successfully assigned master/jobworker-c5dc75db8-7m5ln to ip-XXX-XXX-XXX-XXX.us-west-2.compute.internal
    5m          Normal   Pulling     Pod    pulling image "..."
    4m          Normal   Pulled      Pod    Successfully pulled image "..."
    ...
    ...
    ...

As can be seen above, this gives us details: the entity that emitted the event, the type/severity of the event, and what triggered it. This information is very useful when trying to understand changes that are occurring in the system. One additional use of these events is to understand long-term system performance and reliability. For example, certain node and networking errors that cause pods to restart may not cause service disruptions in a highly available setup, but they can often hide underlying conditions that place the system at increased risk.

In a default Kubernetes setup, the events are persisted into etcd, a key-value store. etcd is optimized for quick, strongly consistent lookups, but falls short in its ability to provide analytical capabilities over the data. As the data grows, etcd also has trouble keeping up, and therefore events get compacted and cleaned up periodically. By default, only the past hour of events is preserved by etcd.

The historical context can be used to understand long-term cluster health, incidents that occurred in the past and the actions taken to mitigate them within Kubernetes, and to build accurate post mortems. Though we looked at other monitoring tools for events, we realized that we had an opportunity to use our own product to analyze these events in a way that no other monitoring product could, and to use it to construct a visualization of the states of all of our Kubernetes resources.

Overview

To ingest the Kubernetes events, we use an open source tool by Heptio called eventrouter. It reads events from the Kubernetes API server and forwards them to a specified sink. The sink can be anything from Amazon S3 to an arbitrary HTTP endpoint. In order to connect to a Rockset collection, we decided to build a Rockset connector for eventrouter to control the format of the data uploaded to our collection. We contributed this Rockset sink into the upstream eventrouter project. This connector is really simple: it takes all received events and emits them into Rockset. The really cool part is that for ingesting these events, which are JSON payloads that vary across different types of entities, we do not need to build any schema or do structural transformations. We can emit the JSON event as-is into a Rockset collection and query it as if it were a full SQL table. Rockset automatically converts JSON events into SQL tables by first indexing all the JSON fields using Converged Indexing and then automatically schematizing them via Smart Schemas.
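To make the "emit the JSON event as-is" idea concrete, here is a minimal Python sketch of what a sink of this kind does. It is not the eventrouter code (which is written in Go); the `BatchingSink` class, the `send` callable, the batch size, and the `{"data": [...]}` request body are all assumptions made for illustration. The only detail taken from this post is that each document nests the raw Kubernetes event under an `event` key, which is why the queries later refer to fields such as `e.event.reason`.

```python
import json
from typing import Callable, Dict, List


class BatchingSink:
    """Hypothetical sink: wraps each event in an envelope and forwards it unchanged."""

    def __init__(self, send: Callable[[str], None], batch_size: int = 2) -> None:
        self._send = send              # stand-in for the HTTP call to a write endpoint
        self._batch_size = batch_size  # assumed value, chosen only for the demo
        self._buffer: List[Dict] = []

    def update_events(self, verb: str, event: Dict) -> None:
        # No schema mapping happens here: the raw event is nested under "event".
        self._buffer.append({"verb": verb, "event": event})
        if len(self._buffer) >= self._batch_size:
            self.flush()

    def flush(self) -> None:
        # Send whatever is buffered as one JSON request body, then reset.
        if self._buffer:
            self._send(json.dumps({"data": self._buffer}))
            self._buffer = []


# Collect outgoing request bodies in a list instead of making network calls.
sent: List[str] = []
sink = BatchingSink(send=sent.append)
sink.update_events("ADDED", {"reason": "Scheduled", "involvedObject": {"kind": "Pod"}})
sink.update_events("ADDED", {"reason": "NodeNotReady", "involvedObject": {"kind": "Node"}})
print(sent[0])
```

Note that the Pod event and the Node event go through the same code path even though their payloads differ, which is the property the paragraph above relies on.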

The front-end application is a thin layer over the SQL layer that allows filtering events by namespace and entity type (Pod, Deployment, etc.), and then, within those entity types, clustering events by normal/errors. The goal is to have a histogram of these events to visually inspect and understand the state of the cluster over an extended period of time. Of course, what we demonstrate is simply a subset of what could be built. One can imagine much more complex analyses, like analyzing network stability, deployment processes, canarying software releases, and even using the event store as a key diagnostic tool to discover correlations between cluster-level alerts and Kubernetes-level changes.
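The filtering and normal/error split described above can be sketched in a few lines of Python. This is only an illustration over hand-written sample documents, not the dashboard's code; the `summarize` function and the sample data are hypothetical, and the field paths mirror the ones used in the SQL queries later in this post.

```python
from collections import Counter
from typing import Dict, Iterable, Optional


def summarize(events: Iterable[Dict], namespace: Optional[str] = None,
              kind: Optional[str] = None) -> Counter:
    """Count events by type after optional namespace and entity-kind filters."""
    counts: Counter = Counter()
    for doc in events:
        ev = doc["event"]
        if namespace and ev["metadata"]["namespace"] != namespace:
            continue
        if kind and ev["involvedObject"]["kind"] != kind:
            continue
        counts[ev["type"]] += 1
    return counts


def make_event(namespace: str, kind: str, event_type: str) -> Dict:
    # Helper that builds a made-up document in the shape assumed above.
    return {"event": {"metadata": {"namespace": namespace},
                      "involvedObject": {"kind": kind},
                      "type": event_type}}


sample = [
    make_event("production", "Pod", "Normal"),
    make_event("production", "Pod", "Warning"),
    make_event("production", "Deployment", "Normal"),
    make_event("dev", "Pod", "Warning"),
]
result = summarize(sample, namespace="production", kind="Pod")
print(dict(result))
```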

Setup

Before we can begin receiving events from eventrouter into Rockset, we must create a collection in Rockset. This is the collection that all eventrouter events are stored in. You can do this with a free account from https://console.rockset.com/create.

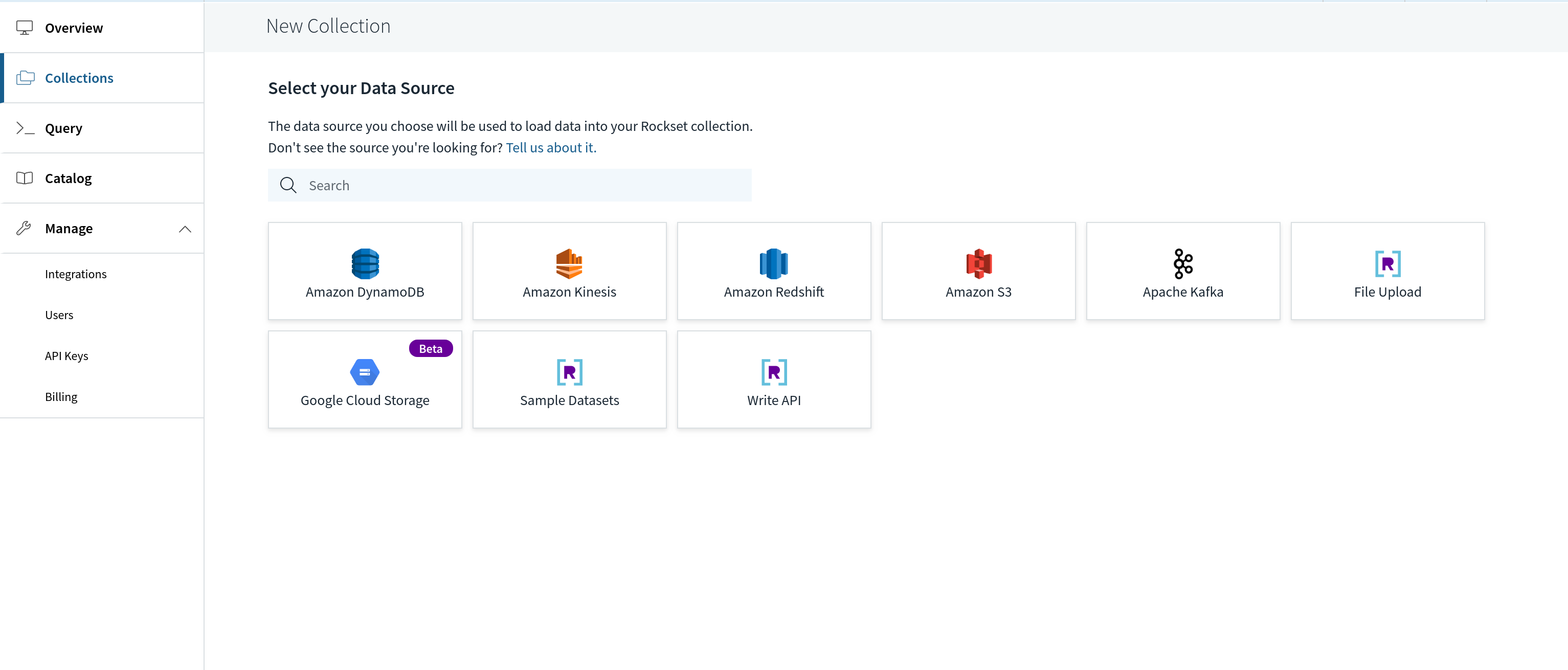

A collection in Rockset can ingest data from a specified source, or can be sent events via the REST API. We will use the latter, so we create a collection that is backed by this Write API. In the Rockset console, we can create such a collection by selecting “Write API” as the data source.

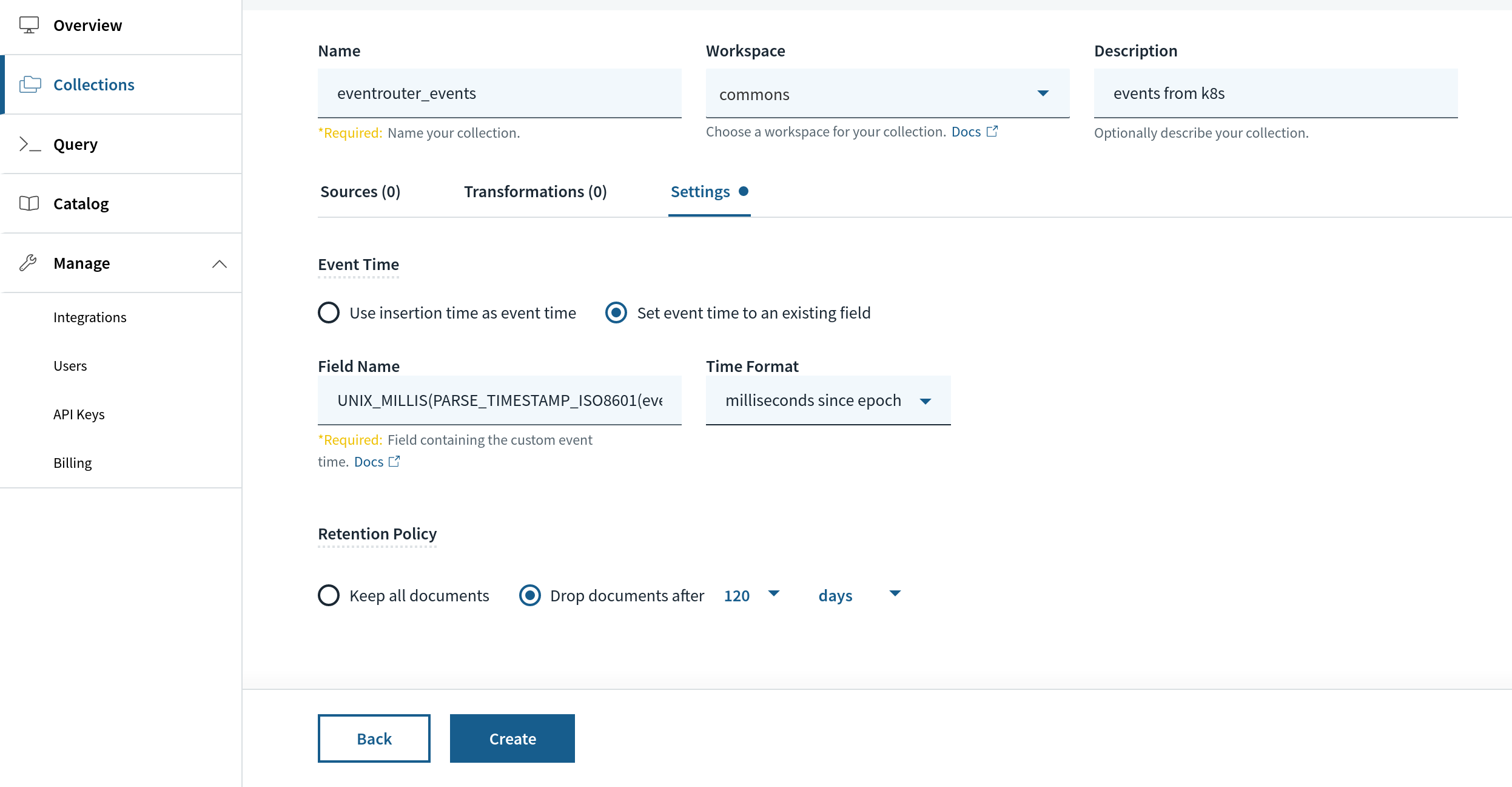

When creating the collection, we can pick a retention, say, 120 days or any reasonable amount of time that gives us some sense of cluster health. This retention is applied based on a special field in Rockset, _event_time. We will map this field to a specific field within the JSON event payload we receive from eventrouter, called event.lastTimestamp. The transformation function looks like the following:

    UNIX_MILLIS(PARSE_TIMESTAMP_ISO8601(event.lastTimestamp))
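To see what this mapping produces, here is a small Python equivalent, assuming the timestamp arrives in the second-precision UTC form shown in the query results later in this post (for example 2019-09-28T04:53:05Z). The helper name `unix_millis` is ours, and the sketch only illustrates the arithmetic; it says nothing about how Rockset evaluates the expression internally.

```python
from datetime import datetime, timedelta, timezone

EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)


def unix_millis(iso_ts: str) -> int:
    """Parse an ISO 8601 UTC timestamp and return milliseconds since the Unix epoch."""
    dt = datetime.strptime(iso_ts, "%Y-%m-%dT%H:%M:%SZ").replace(tzinfo=timezone.utc)
    # Integer division of two timedeltas avoids floating-point rounding.
    return (dt - EPOCH) // timedelta(milliseconds=1)


print(unix_millis("2019-09-28T04:53:05Z"))  # 1569646385000
```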

After creating the collection, we can now set up and use eventrouter to begin receiving Kubernetes events.

Now, receiving events from eventrouter requires one more thing: a Rockset API key. We can use API keys in Rockset to write JSON to a collection and to make queries. In this case, we create an API key called eventrouter_write from Manage > API keys.

Copy the API key, as we will need it in the next step: setting up eventrouter to send events into the Rockset collection we just created. You can set up eventrouter by cloning the eventrouter repository and editing the YAML file yaml/deployment.yaml to look like the following:

    # eventrouter/yaml/deployment.yaml
    config.json: |-
      {
        "sink": "rockset",
        "rocksetServer": "https://api.rs2.usw2.rockset.com",
        "rocksetAPIKey": "<API_KEY>",
        "rocksetCollectionName": "eventrouter_events",
        "rocksetWorkspaceName": "commons"
      }

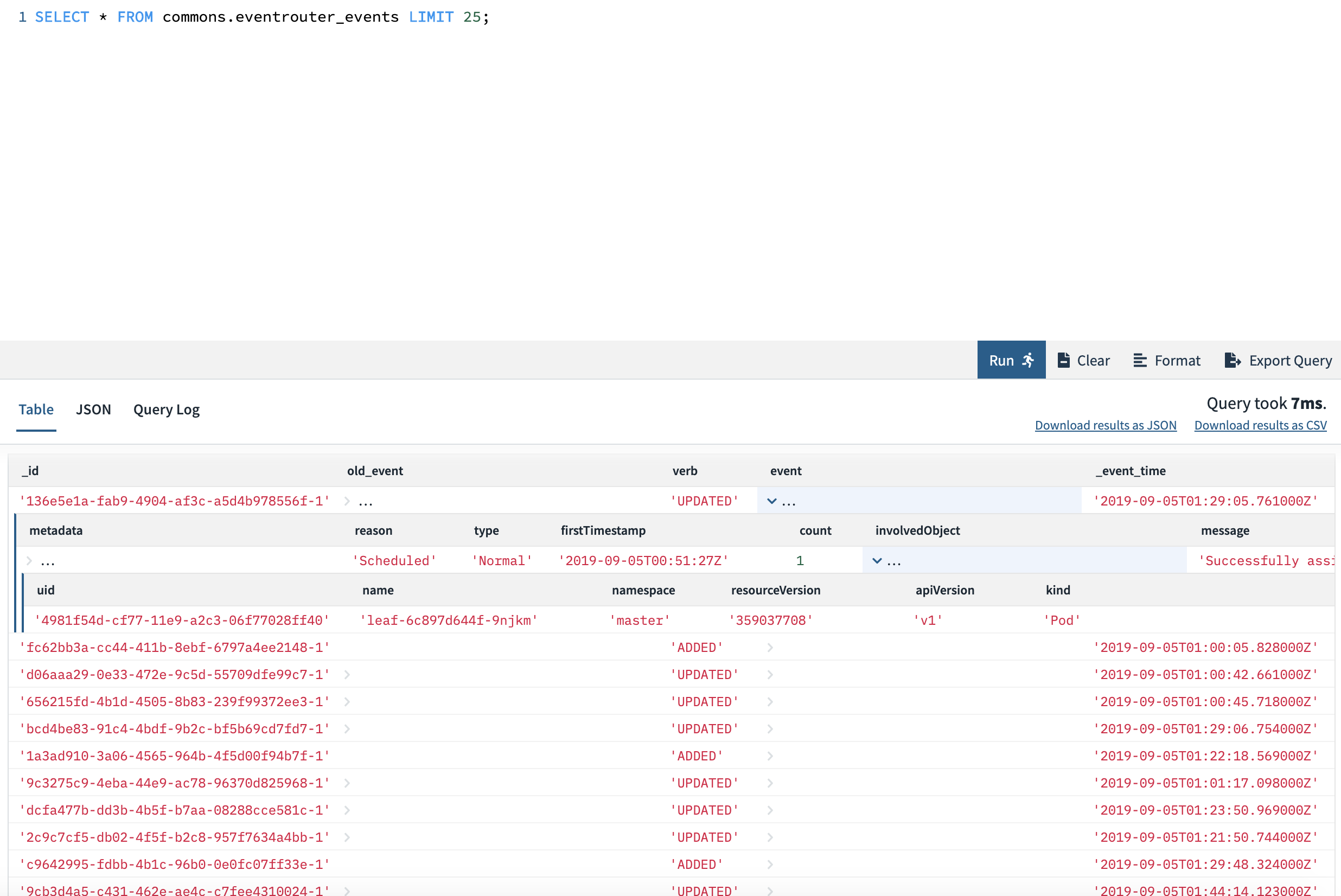

Replace <API_KEY> with the Rockset API key we created in the previous step. Now, we are ready! Run kubectl apply -f yaml/deployment.yaml, and eventrouter can start watching and forwarding events right away. Looking at the collection inside Rockset, you should start seeing events flowing in and being made available as a SQL table. We can query it from the Rockset console and get a sense of some of the events flowing in. We can run full SQL over it, including all types of filters, joins, etc.
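Because config.json has to be valid JSON, it can be worth checking the embedded block before applying the deployment. The following Python sketch does that under stated assumptions: `load_sink_config` and `REQUIRED_KEYS` are hypothetical names, the key list is simply the set of keys shown in the configuration above, and nothing here is part of eventrouter itself.

```python
import json
from typing import Dict

# Keys taken from the configuration shown in this post.
REQUIRED_KEYS = ("sink", "rocksetServer", "rocksetAPIKey",
                 "rocksetCollectionName", "rocksetWorkspaceName")


def load_sink_config(text: str) -> Dict[str, str]:
    """Parse the sink configuration and reject obviously incomplete input."""
    config = json.loads(text)  # raises json.JSONDecodeError on malformed JSON
    missing = [key for key in REQUIRED_KEYS if key not in config]
    if missing:
        raise ValueError(f"missing keys: {missing}")
    if config["rocksetAPIKey"] == "<API_KEY>":
        raise ValueError("API key placeholder was not replaced")
    return config


example = """
{
  "sink": "rockset",
  "rocksetServer": "https://api.rs2.usw2.rockset.com",
  "rocksetAPIKey": "example-key-for-illustration",
  "rocksetCollectionName": "eventrouter_events",
  "rocksetWorkspaceName": "commons"
}
"""
config = load_sink_config(example)
print(config["rocksetCollectionName"])
```

A missing comma between entries or a trailing comma before the closing brace makes `json.loads` raise, so this kind of check catches the most common copy-and-paste mistakes early.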

Querying Data

We can now start asking some interesting questions of our cluster and get an understanding of cluster health. One question that we wanted to ask was: how often are we deploying new images into production? We operate on a strict release schedule, but there are times when we roll out and roll back images.

    With replicasets as (
        select
            e.event.reason as reason,
            e.event.lastTimestamp as ts,
            e.event.metadata.name as name,
            REGEXP_EXTRACT(e.event.message, 'Created pod: (.*)', 1) as pod
        from
            commons.eventrouter_events e
        where
            e.event.involvedObject.kind = 'ReplicaSet'
            and e.event.metadata.namespace = 'production'
            and e.event.reason = 'SuccessfulCreate'
    ),
    pods as (
        select
            e.event.reason as reason,
            e.event.message as message,
            e.event.lastTimestamp as ts,
            e.event.involvedObject.name as name,
            REGEXP_EXTRACT(
                e.event.message,
                'pulling image "imagerepo/folder/(.*?)"',
                1
            ) as image
        from
            commons.eventrouter_events e
        where
            e.event.involvedObject.kind = 'Pod'
            and e.event.metadata.namespace = 'production'
            and e.event.message like '%pulling image%'
            and e.event.involvedObject.name like 'aggregator%'
    )
    SELECT * from (
        select
            MAX(p.ts) as ts, MAX(r.pod) as pod, MAX(p.image) as image, r.name
        from
            pods p
            JOIN replicasets r on p.name = r.pod
        GROUP BY r.name) sq
    ORDER BY ts DESC
    limit 100;

The above query looks at our deployments, which in turn create replicasets, and finds the last date on which we deployed a particular image.

    +------------------------------------+----------------------------------------+-----------------------------+----------------------+
    | image                              | name                                   | pod                         | ts                   |
    |------------------------------------+----------------------------------------+-----------------------------+----------------------|
    | leafagg:0.6.14.20190928-58cdee6dd4 | aggregator-c478b597.15c8811219b0c944   | aggregator-c478b597-z8fln   | 2019-09-28T04:53:05Z |
    | leafagg:0.6.14.20190928-58cdee6dd4 | aggregator-c478b597.15c881077898d3e0   | aggregator-c478b597-wvbdb   | 2019-09-28T04:52:20Z |
    | leafagg:0.6.14.20190928-58cdee6dd4 | aggregator-c478b597.15c880742e034671   | aggregator-c478b597-j7jjt   | 2019-09-28T04:41:47Z |
    | leafagg:0.6.14.20190926-a553e0af68 | aggregator-587f77c45c.15c8162d63e918ec | aggregator-587f77c45c-qjkm7 | 2019-09-26T20:14:15Z |
    | leafagg:0.6.14.20190926-a553e0af68 | aggregator-587f77c45c.15c8160fefed6631 | aggregator-587f77c45c-9c47j | 2019-09-26T20:12:08Z |
    | leafagg:0.6.14.20190926-a553e0af68 | aggregator-587f77c45c.15c815f341a24725 | aggregator-587f77c45c-2pg6l | 2019-09-26T20:10:05Z |
    | leafagg:0.6.14.20190924-b2e6a85445 | aggregator-58d76b8459.15c77b4c1c32c387 | aggregator-58d76b8459-4gkml | 2019-09-24T20:56:02Z |
    | leafagg:0.6.14.20190924-b2e6a85445 | aggregator-58d76b8459.15c77b2ee78d6d43 | aggregator-58d76b8459-jb257 | 2019-09-24T20:53:57Z |
    | leafagg:0.6.14.20190924-b2e6a85445 | aggregator-58d76b8459.15c77b131e353ed6 | aggregator-58d76b8459-rgcln | 2019-09-24T20:51:58Z |
    +------------------------------------+----------------------------------------+-----------------------------+----------------------+

This excerpt of images and pods, with timestamps, tells us a lot about the last few deploys and when they occurred. Plotting this on a chart would tell us how consistent we have been with our deploys and how healthy our deployment practices are.
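For readers who find the join easier to follow procedurally, the same logic can be mirrored in plain Python. This is a simplified sketch over flattened, hand-written rows (two of them borrowed from the output above), not a replacement for the SQL. The function name `join_deploys` is hypothetical, and the sketch assumes each pod is named by at most one ReplicaSet event.

```python
import re
from typing import Dict, List

POD_RE = re.compile(r"Created pod: (.*)")
IMAGE_RE = re.compile(r'pulling image "imagerepo/folder/(.*?)"')


def join_deploys(replicaset_events: List[Dict], pod_events: List[Dict],
                 limit: int = 100) -> List[Dict]:
    """Join pod image pulls to the ReplicaSet event that created the pod."""
    # Map pod name -> name of the ReplicaSet event that announced it.
    pod_to_rs = {}
    for ev in replicaset_events:
        match = POD_RE.search(ev["message"])
        if match:
            pod_to_rs[match.group(1)] = ev["name"]

    grouped: Dict[str, Dict] = {}
    for ev in pod_events:
        match = IMAGE_RE.search(ev["message"])
        rs_name = pod_to_rs.get(ev["name"])
        if match is None or rs_name is None:
            continue  # same effect as a failed join condition
        row = grouped.setdefault(
            rs_name, {"name": rs_name, "ts": ev["ts"], "pod": ev["name"],
                      "image": match.group(1)})
        # Equivalent of MAX(...) per group; ISO timestamps sort lexicographically.
        row["ts"] = max(row["ts"], ev["ts"])
        row["pod"] = max(row["pod"], ev["name"])
        row["image"] = max(row["image"], match.group(1))

    ordered = sorted(grouped.values(), key=lambda r: r["ts"], reverse=True)
    return ordered[:limit]


rs_rows = [
    {"name": "aggregator-c478b597.15c8811219b0c944",
     "message": "Created pod: aggregator-c478b597-z8fln"},
    {"name": "aggregator-587f77c45c.15c8162d63e918ec",
     "message": "Created pod: aggregator-587f77c45c-qjkm7"},
]
pod_rows = [
    {"name": "aggregator-587f77c45c-qjkm7", "ts": "2019-09-26T20:14:15Z",
     "message": 'pulling image "imagerepo/folder/leafagg:0.6.14.20190926-a553e0af68"'},
    {"name": "aggregator-c478b597-z8fln", "ts": "2019-09-28T04:53:05Z",
     "message": 'pulling image "imagerepo/folder/leafagg:0.6.14.20190928-58cdee6dd4"'},
]
deploys = join_deploys(rs_rows, pod_rows)
for row in deploys:
    print(row["ts"], row["image"], row["pod"])
```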

Now, moving on to the performance of the cluster itself: running our own hand-rolled Kubernetes cluster means we get a lot of control over upgrades and the system setup, but it is worth seeing when nodes may have been lost or network partitioned, causing them to be marked as unready. The clustering of such events can tell us a lot about the stability of the infrastructure.

    With nodes as (
        select
            e.event.reason,
            e.event.message,
            e.event.lastTimestamp as ts,
            e.event.metadata.name
        from
            commons.eventrouter_events e
        where
            e.event.involvedObject.kind = 'Node'
            AND e.event.type = 'Normal'
            AND e.event.reason = 'NodeNotReady'
        ORDER by ts DESC
    )
    select
        *
    from
        nodes
    Limit 100;

This query gives us the times at which a node's status went NotReady, and we can try to cluster this data using SQL time functions to understand how often issues are occurring over specific buckets of time.

    +------------------------------------------------------------------------------+--------------------------------------------------------------+--------------+----------------------+
    | message                                                                      | name                                                         | reason       | ts                   |
    |------------------------------------------------------------------------------+--------------------------------------------------------------+--------------+----------------------|
    | Node ip-xx-xxx-xx-xxx.us-xxxxxx.compute.internal status is now: NodeNotReady | ip-xx-xxx-xx-xxx.us-xxxxxx.compute.internal.yyyyyyyyyyyyyyyy | NodeNotReady | 2019-09-30T02:13:19Z |
    | Node ip-xx-xxx-xx-xxx.us-xxxxxx.compute.internal status is now: NodeNotReady | ip-xx-xxx-xx-xxx.us-xxxxxx.compute.internal.yyyyyyyyyyyyyyyy | NodeNotReady | 2019-09-30T02:13:19Z |
    | Node ip-xx-xxx-xx-xxx.us-xxxxxx.compute.internal status is now: NodeNotReady | ip-xx-xxx-xx-xxx.us-xxxxxx.compute.internal.yyyyyyyyyyyyyyyy | NodeNotReady | 2019-09-30T02:14:20Z |
    | Node ip-xx-xxx-xx-xxx.us-xxxxxx.compute.internal status is now: NodeNotReady | ip-xx-xxx-xx-xxx.us-xxxxxx.compute.internal.yyyyyyyyyyyyyyyy | NodeNotReady | 2019-09-30T02:13:19Z |
    | Node ip-xx-xxx-xx-xx.us-xxxxxx.compute.internal status is now: NodeNotReady  | ip-xx-xxx-xx-xx.us-xxxxxx.compute.internal.yyyyyyyyyyyyyyyy  | NodeNotReady | 2019-09-30T00:10:11Z |
    +------------------------------------------------------------------------------+--------------------------------------------------------------+--------------+----------------------+
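The idea of grouping these timestamps into buckets of time can be illustrated outside SQL as well. The Python sketch below floors each timestamp to the start of a fixed-width bucket and counts the events per bucket. The function name `bucket_counts` and the one-hour width are our choices for illustration, and the input is the `ts` column from the five rows above.

```python
from collections import Counter
from datetime import datetime, timedelta, timezone
from typing import Dict, Iterable

EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)
TS_FORMAT = "%Y-%m-%dT%H:%M:%SZ"


def bucket_counts(timestamps: Iterable[str], width: timedelta) -> Dict[str, int]:
    """Count events per fixed-width time bucket, keyed by the bucket start time."""
    counts: Counter = Counter()
    for ts in timestamps:
        dt = datetime.strptime(ts, TS_FORMAT).replace(tzinfo=timezone.utc)
        # Floor to a whole number of bucket widths since the epoch.
        start = EPOCH + ((dt - EPOCH) // width) * width
        counts[start.strftime(TS_FORMAT)] += 1
    return dict(sorted(counts.items()))


not_ready = [
    "2019-09-30T02:13:19Z",
    "2019-09-30T02:13:19Z",
    "2019-09-30T02:14:20Z",
    "2019-09-30T02:13:19Z",
    "2019-09-30T00:10:11Z",
]
hourly = bucket_counts(not_ready, timedelta(hours=1))
print(hourly)
```

Changing the `width` argument (for example to `timedelta(minutes=5)`) gives a finer or coarser view of the same events.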

We can additionally look for pod- and container-level events, such as when they get OOMKilled, and correlate them with other events happening in the system. Compared to a time series database like Prometheus, the power of SQL lets us write queries that JOIN different types of events to try to piece together different things that occurred around a particular time interval, which may be causal.
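As a rough illustration of this kind of time-window correlation, the Python sketch below pairs each "anchor" event with any other event that happened within a given window around it. Everything in it is hypothetical: the function `events_near`, the five-minute window, and the sample rows (including the pod name and reason strings) are made up for the example and do not come from a real cluster.

```python
from datetime import datetime, timedelta, timezone
from typing import Dict, List, Tuple


def parse_ts(ts: str) -> datetime:
    """Parse the second-precision UTC timestamps used throughout this post."""
    return datetime.strptime(ts, "%Y-%m-%dT%H:%M:%SZ").replace(tzinfo=timezone.utc)


def events_near(anchors: List[Dict], others: List[Dict],
                window: timedelta) -> List[Tuple[str, str, str]]:
    """Pair each anchor event with other events within +/- window of it."""
    pairs = []
    for anchor in anchors:
        anchor_ts = parse_ts(anchor["ts"])
        for other in others:
            if abs(parse_ts(other["ts"]) - anchor_ts) <= window:
                pairs.append((anchor["name"], other["reason"], other["name"]))
    return pairs


# Hypothetical sample rows, for illustration only.
node_events = [
    {"name": "node-a", "reason": "NodeNotReady", "ts": "2019-09-30T02:13:19Z"},
]
pod_events = [
    {"name": "pod-1", "reason": "OOMKilled", "ts": "2019-09-30T02:11:00Z"},
    {"name": "pod-2", "reason": "Pulled", "ts": "2019-09-30T01:00:00Z"},
]
related = events_near(node_events, pod_events, timedelta(minutes=5))
print(related)
```

Events that land in the same window are only candidates for a causal link; the pairing narrows down where to look rather than proving a cause.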

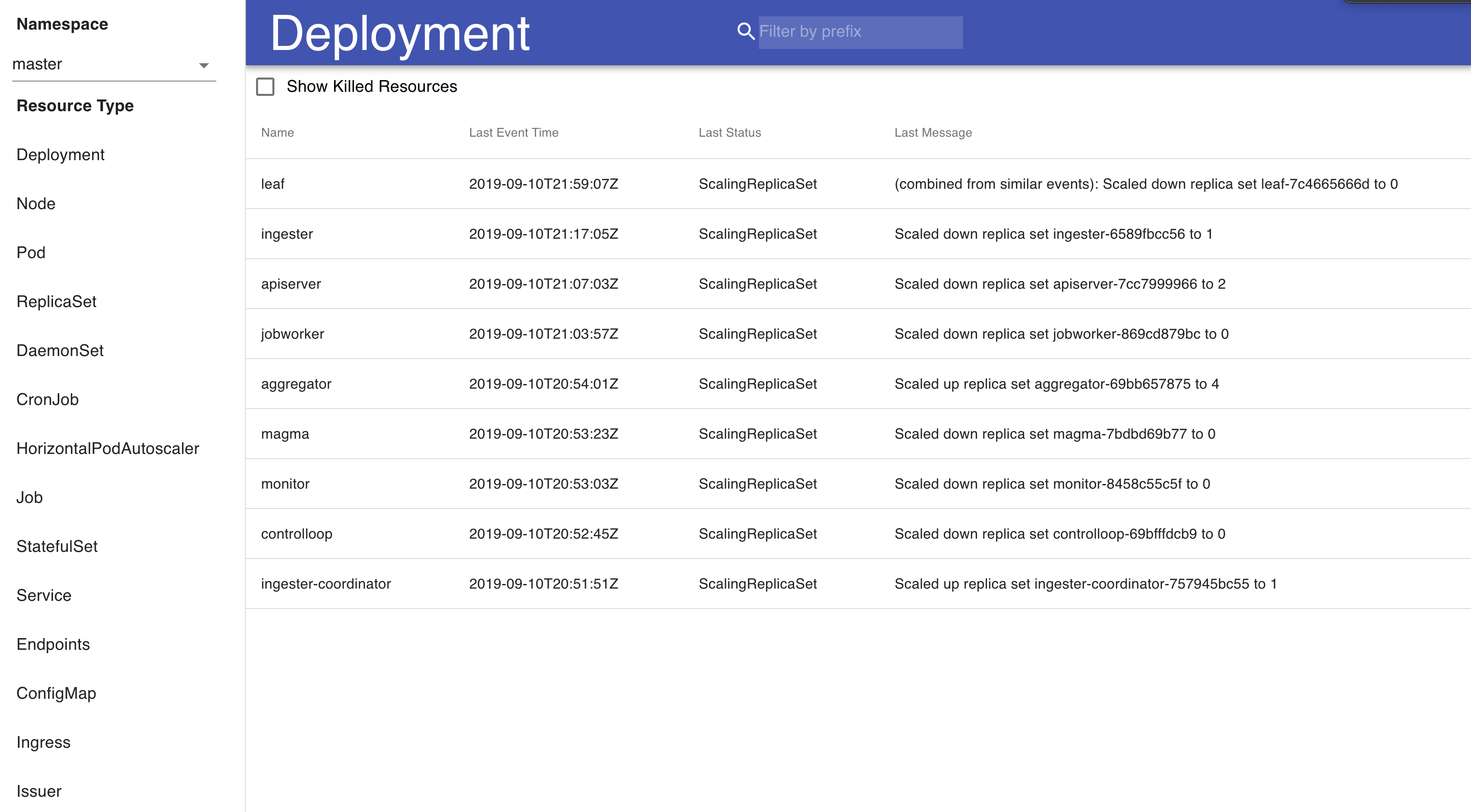

For visualizing events, we built a simple React-based tool that we use internally to look through Kubernetes events and do some basic clustering of the events and the errors occurring in them. We are releasing this dashboard as open source and would love to see what the community might use it for. There are two main aspects to the visualization of Kubernetes events. The first is a high-level overview of the cluster at a per-resource granularity. This allows us to see a real-time event stream from our deployments and pods, and to see what state every single resource in our Kubernetes system is in. There is also an option to filter by namespace: because certain sets of services run in their own namespace, this allows us to drill down into a specific namespace to look at events.

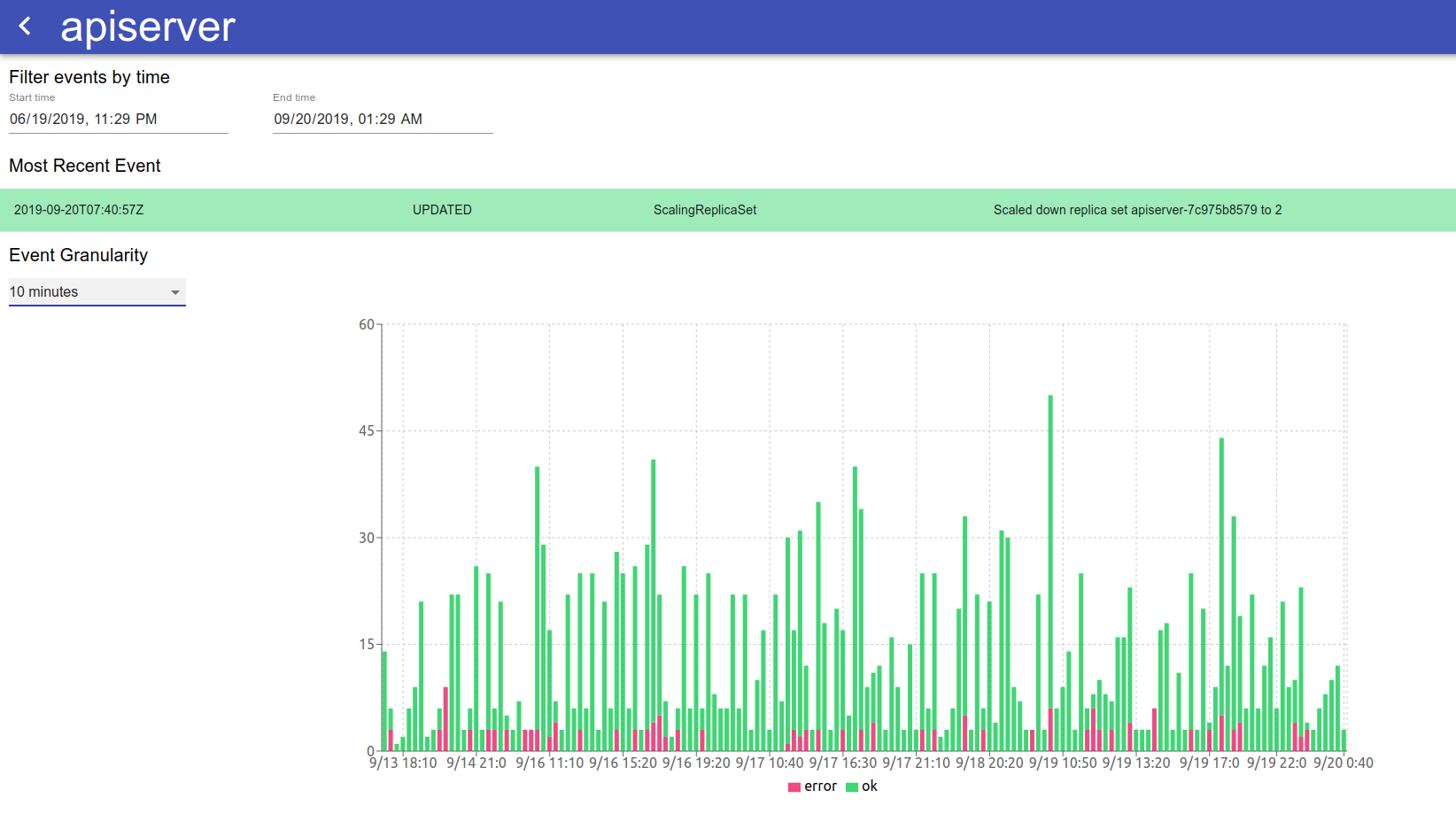

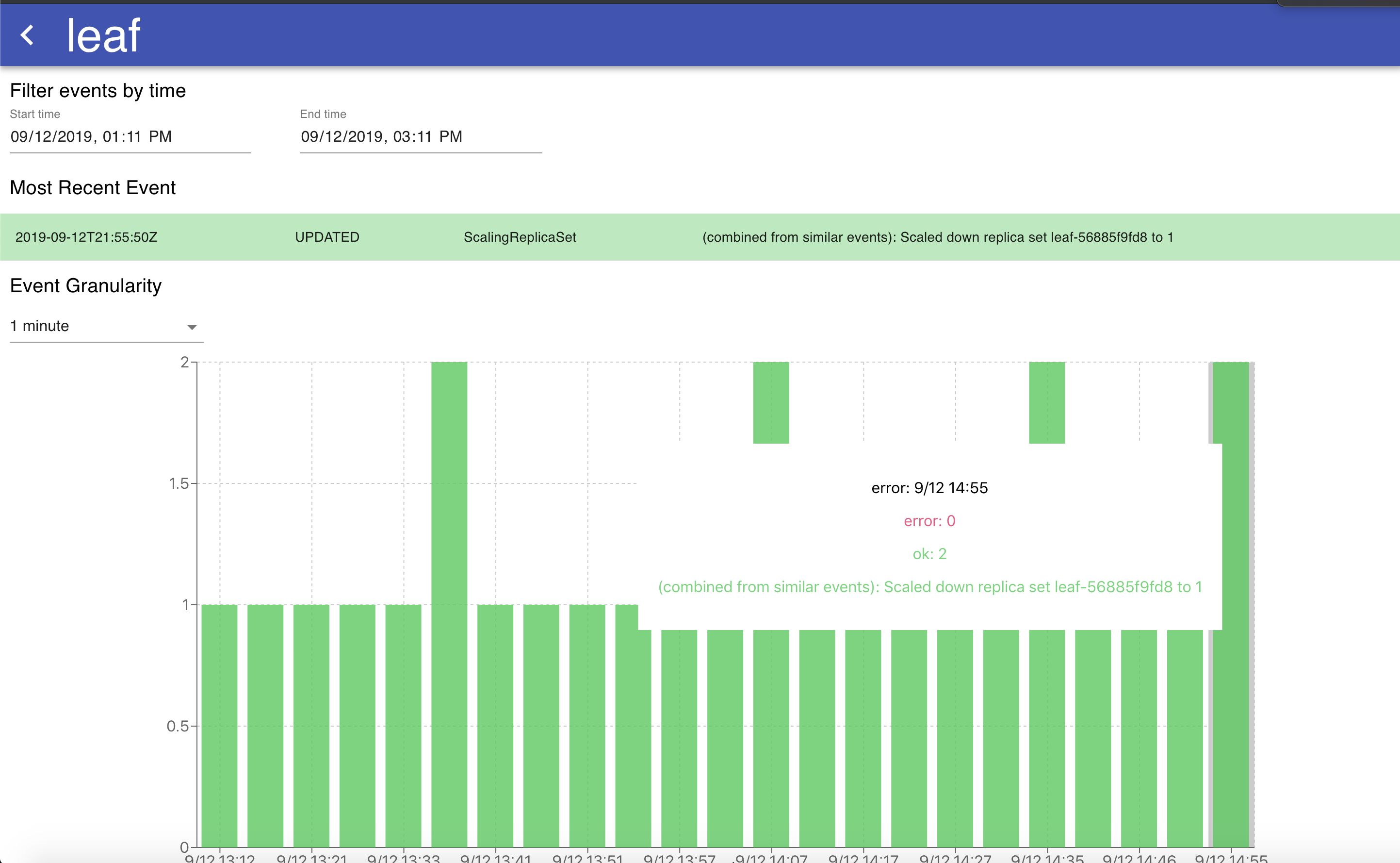

If we are interested in the health and state of any particular resource, each per-resource summary is clickable and opens a page with a detailed overview of the event logs of that resource, with a graph that shows the events and errors over time to provide a holistic picture of how the resource is being managed.

The graph in this visualization has adjustable granularity, and changing the time range allows us to view the events for a given resource over any specified interval. Hovering over a specific bar on the stacked bar chart shows the types of errors occurring during that time period, which is helpful for over-time analysis of what is happening to a specific resource. The table of events listed below the graph is sorted by event time and contains the same information as the graph, that is, a chronological overview of all the events that happened to this specific k8s resource. The graph and table are helpful ways to understand why a Kubernetes resource has been failing in the past, and any trends over time that may accompany that failure (for example, if it coincides with the release of a new microservice).

Conclusion

Currently, we are using the real-time visualization of events to investigate our own Kubernetes deployments in both development and production. This tool and data source allow us to see our deployments as they are ongoing without having to wrangle the kubectl interface to see what is broken and why. Additionally, this tool is helpful for taking a retrospective look at past incidents. For example, if we spot transient production issues, we can now go back in time, look for patterns in why they may have occurred, and work out what we can do to prevent the incident from happening again in the future.

The ability to access historical Kubernetes event logs at fine granularity is a powerful abstraction that gives us at Rockset a better understanding of the state of our Kubernetes system than kubectl alone would allow. This unique data source and visualization allow us to monitor our deployments and resources, as well as look at issues from a historical perspective. We would love for you to try this, and to contribute to it if you find it useful in your own environments!

Link: https://github.com/rockset/recipes/tree/master/k8s-event-visualization
