
Introduction

The Internet of Things (IoT) is generating an unprecedented amount of data. IBM estimates that annual IoT data volume will reach approximately 175 zettabytes by 2025. That’s roughly 175 trillion gigabytes! According to Cisco, if each gigabyte in a zettabyte were a brick, 258 Great Walls of China could be built.

Real-time processing of IoT data unlocks its true value by enabling businesses to make timely, data-driven decisions. However, the massive and dynamic nature of IoT data poses significant challenges for many organizations. At Databricks, we recognize these obstacles and provide a comprehensive data intelligence platform to help manufacturing organizations effectively process and analyze IoT data. By leveraging the Databricks Data Intelligence Platform, manufacturing organizations can transform their IoT data into actionable insights to drive efficiency, reduce downtime, and improve overall operational performance, without the overhead of managing a complex analytics system. In this blog, we share examples of how to use Databricks’ IoT analytics capabilities to create efficiencies in your business.

Problem Statement

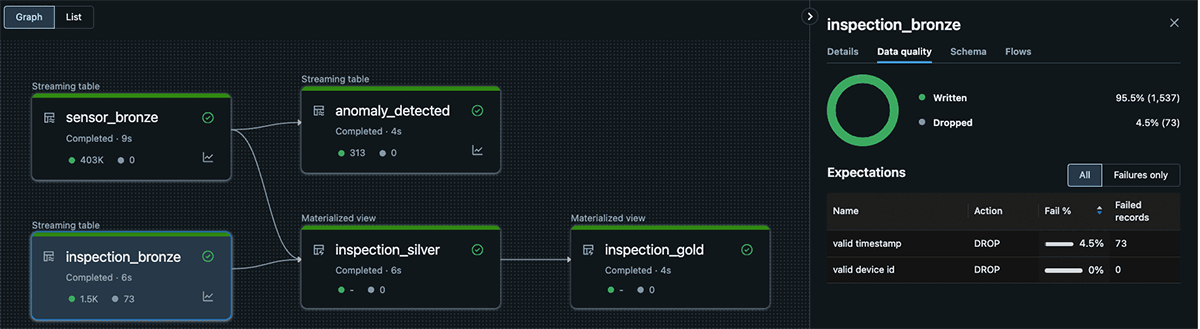

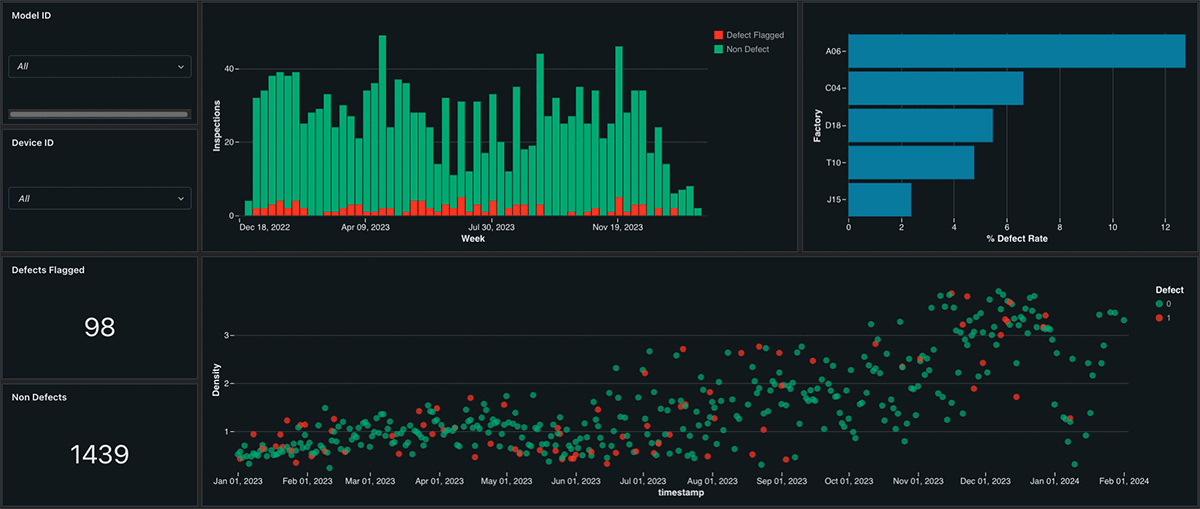

While analyzing time series data at scale and in real time can be a significant challenge, Databricks’ Delta Live Tables (DLT) provides a fully managed ETL solution, simplifying the operation of time series pipelines and reducing the complexity of managing the underlying software and infrastructure. DLT offers features such as schema inference and data quality enforcement, ensuring that data quality issues are identified without allowing schema changes from data producers to disrupt the pipelines. Databricks provides a simple interface for parallel computation of complex time series operations, including exponential weighted moving averages, interpolation, and resampling, via the open-source Tempo library. Moreover, with Lakeview Dashboards, manufacturing organizations can gain valuable insights into how metrics, such as defect rates by factory, might be impacting their bottom line. Finally, Databricks can notify stakeholders of anomalies in real time by feeding the results of our streaming pipeline into SQL alerts. Databricks’ innovative solutions help manufacturing organizations overcome their data processing challenges, enabling them to make informed decisions and optimize their operations.

Example 1: Real-Time Data Processing

Databricks’ unified analytics platform provides a robust solution for manufacturing organizations to tackle their data ingestion and streaming challenges. In our example, we’ll create streaming tables that ingest newly landed files in real time from a Unity Catalog Volume, emphasizing several key benefits:

- Real-Time Processing: Manufacturing organizations can process data incrementally by using streaming tables, avoiding the cost of reprocessing previously seen data. This ensures that insights are derived from the most recent data available, enabling quicker decision-making.

- Schema Inference: Databricks’ Auto Loader feature runs schema inference, providing flexibility in handling the all-too-common changes in schemas and data formats from upstream producers.

- Autoscaling Compute Resources: Delta Live Tables offers autoscaling compute resources for streaming pipelines, ensuring optimal resource utilization and cost-efficiency. Autoscaling is particularly useful for IoT workloads where the volume of data might spike or plummet dramatically based on seasonality and time of day.

- Exactly-Once Processing Guarantees: Streaming on Databricks guarantees that each row is processed exactly once, eliminating the risk of pipelines creating duplicate or missing data.

- Data Quality Checks: DLT also offers data quality checks, useful for validating that values are within realistic ranges or ensuring primary keys exist before running a join. These checks help maintain data quality and allow for triggering warnings or dropping rows where needed.

Manufacturing organizations can unlock valuable insights, improve operational efficiency, and make data-driven decisions with confidence by leveraging Databricks’ real-time data processing capabilities.
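To make the incremental-processing idea concrete, here is a minimal plain-Python sketch. It is not how Auto Loader or streaming tables are implemented; the `IncrementalLoader` class and the file names are hypothetical, and the in-memory set merely stands in for a durable streaming checkpoint. It only illustrates why remembering what has already been ingested avoids reprocessing and duplicates.

```python
from dataclasses import dataclass, field


@dataclass
class IncrementalLoader:
    """Toy stand-in for a streaming checkpoint (hypothetical, for illustration only)."""

    seen: set = field(default_factory=set)

    def new_files(self, landed):
        """Return only files not ingested by an earlier run, then record them."""
        fresh = [name for name in landed if name not in self.seen]
        self.seen.update(fresh)
        return fresh


loader = IncrementalLoader()

# First run: both landed files are new, so both are ingested.
first_run = loader.new_files(["inspections_001.csv", "inspections_002.csv"])

# Second run: only the newly landed file is ingested; earlier files are skipped.
second_run = loader.new_files(
    ["inspections_001.csv", "inspections_002.csv", "inspections_003.csv"]
)

print(first_run)
print(second_run)
```

Each file is handed to the pipeline once, which is the intuition behind both the cost savings and the exactly-once behavior described above.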

    import dlt

    @dlt.table(
        name='inspection_bronze',
        comment='Loads raw inspection data into the bronze layer'
    )
    # Drop any rows where timestamp or device_id is null, as these rows would not be usable for our next step
    @dlt.expect_all_or_drop({"valid timestamp": "`timestamp` is not null", "valid device id": "device_id is not null"})
    def autoload_inspection_data():
        # Type hints for known columns; Auto Loader infers the remaining schema
        schema_hints = 'defect float, timestamp timestamp, device_id integer'
        return (
            spark.readStream.format('cloudFiles')  # `spark` is provided by the pipeline runtime
            .option('cloudFiles.format', 'csv')
            .option('cloudFiles.schemaHints', schema_hints)
            .option('cloudFiles.schemaLocation', 'checkpoints/inspection')
            .load('inspection_landing')
        )
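For readers who want to see what the two null checks above amount to, the following plain-Python sketch mimics the "drop rows that fail any expectation" behavior on a handful of in-memory rows. The helper `expect_all_or_drop` here is a hypothetical stand-in written for this post, not the DLT decorator itself, and the sample rows are made up.

```python
def expect_all_or_drop(rows, expectations):
    """Keep rows that pass every named check and count failures per check.

    Hypothetical helper for illustration; DLT applies equivalent rules as SQL
    expressions inside the pipeline.
    """
    kept = []
    failures = {name: 0 for name in expectations}
    for row in rows:
        failed = [name for name, check in expectations.items() if not check(row)]
        for name in failed:
            failures[name] += 1
        if not failed:
            kept.append(row)
    return kept, failures


expectations = {
    "valid timestamp": lambda r: r.get("timestamp") is not None,
    "valid device id": lambda r: r.get("device_id") is not None,
}

rows = [
    {"timestamp": "2024-01-01T00:00:00", "device_id": 1, "defect": 0.0},
    {"timestamp": None, "device_id": 2, "defect": 1.0},
    {"timestamp": "2024-01-01T01:00:00", "device_id": None, "defect": 0.0},
]

kept, failures = expect_all_or_drop(rows, expectations)
print(len(kept), failures)
```

Only the first row survives; the per-check failure counts are the kind of signal a data quality report would surface.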

Example 2: Tempo for Time Series Analysis

Given streams from disparate data sources such as sensors and inspection reports, we might need to calculate useful time series features such as an exponential moving average, or pull together our time series datasets. This poses a couple of challenges:

- How do we handle null, missing, or irregular data in our time series?

- How do we calculate time series features such as an exponential moving average in parallel on a massive dataset without exponentially increasing cost?

- How do we pull together our datasets when the timestamps don’t line up? In this case, our inspection defect warning might get flagged hours after the sensor data is generated. We need a join that allows “price is right” rules, joining in the most recent sensor data that does not exceed the inspection timestamp. This way we can capture the features leading up to the defect warning, without leaking data that arrived afterwards into our feature set.
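The “price is right” rule in the last question can be sketched in a few lines of plain Python. This is a single-machine illustration under simplifying assumptions (integer timestamps standing in for hours, a hypothetical `asof_join` helper, made-up rows), not the distributed implementation a library would use.

```python
from bisect import bisect_right


def asof_join(left, right, key="device_id", ts="timestamp", right_prefix="sensor"):
    """For each left row, attach the latest right row with the same key whose
    timestamp does not exceed the left row's timestamp (hypothetical helper)."""
    # Group right rows by key, sorted by timestamp, so we can binary search.
    by_key = {}
    for row in sorted(right, key=lambda r: r[ts]):
        by_key.setdefault(row[key], []).append(row)
    times_by_key = {k: [r[ts] for r in rows] for k, rows in by_key.items()}

    joined = []
    for row in left:
        out = dict(row)
        times = times_by_key.get(row[key], [])
        # Index of the last right timestamp <= the left timestamp, or -1 if none.
        idx = bisect_right(times, row[ts]) - 1
        if idx >= 0:
            match = by_key[row[key]][idx]
            for col, value in match.items():
                if col != key:
                    out[f"{right_prefix}_{col}"] = value
        # With no match the row is kept without sensor columns (left-join behavior).
        joined.append(out)
    return joined


sensors = [
    {"device_id": 1, "timestamp": 1, "temperature": 70.0},
    {"device_id": 1, "timestamp": 3, "temperature": 75.0},
    {"device_id": 1, "timestamp": 6, "temperature": 90.0},
]
inspections = [{"device_id": 1, "timestamp": 5, "defect": 1.0}]

result = asof_join(inspections, sensors)
print(result)
```

The inspection at time 5 picks up the sensor reading from time 3, not the later reading from time 6, so nothing recorded after the defect warning leaks into the features.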

Each of these challenges might require a complex, custom library specific to time series data. Luckily, Databricks has done the hard part for you! We’ll use the open source library Tempo from Databricks Labs to make these challenging operations simple. TSDF, Tempo’s time series dataframe interface, allows us to interpolate missing data with the mean from the surrounding points, calculate an exponential moving average for temperature, and do our “price is right” rules join, known as an as-of join. For example, in our DLT Pipeline:

    import dlt
    from pyspark.sql.functions import col, when
    from tempo import TSDF

    @dlt.table(
        name='inspection_silver',
        comment='Joins bronze sensor data with inspection reports'
    )
    def create_timeseries_features():
        inspections = dlt.read('inspection_bronze').drop('_rescued_data')
        # Create our inspections TSDF
        inspections_tsdf = TSDF(inspections, ts_col='timestamp', partition_cols=['device_id'])
        raw_sensors = (
            dlt.read('sensor_bronze')
            .drop('_rescued_data')
            # Flip the sign when negative, otherwise keep it the same
            .withColumn('air_pressure', when(col('air_pressure') < 0, -col('air_pressure'))
                        .otherwise(col('air_pressure')))
        )
        sensors_tsdf = (
            TSDF(raw_sensors, ts_col='timestamp', partition_cols=['device_id', 'trip_id', 'factory_id', 'model_id'])
            .EMA('rotation_speed', window=5)  # Exponential moving average over 5 rows
            .resample(freq='1 hour', func='mean')  # Resample into 1 hour intervals
        )
        return (
            inspections_tsdf
            .asofJoin(sensors_tsdf, right_prefix='sensor')  # Price is right (as-of) join!
            .df  # Return the vanilla Spark DataFrame
            # Rename some columns to match our schema
            .withColumnRenamed('sensor_trip_id', 'trip_id')
            .withColumnRenamed('sensor_model_id', 'model_id')
            .withColumnRenamed('sensor_factory_id', 'factory_id')
        )

Example 3: Native Dashboarding and Alerting

Once we’ve defined our DLT Pipeline, we need to take action on the insights it provides. Databricks offers SQL Alerts, which can be configured to send email, Slack, Teams, or generic webhook messages when certain conditions in Streaming Tables are met. This allows manufacturing organizations to quickly respond to issues or opportunities as they arise. Additionally, Databricks’ Lakeview Dashboards provide a user-friendly interface for aggregating and reporting on data, without the need for additional licensing costs. These dashboards are directly integrated into the Data Intelligence Platform, making it easy for teams to access and analyze data in real time. Materialized Views and Lakeview Dashboards are a winning combination, pairing beautiful visuals with instant performance.
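As a rough illustration of the kind of condition an alert might evaluate, the sketch below computes a defect rate per factory and flags any factory above a threshold. In practice this logic would live in a SQL query that an alert is attached to; the function names, the threshold, and the factory IDs here are all hypothetical.

```python
from collections import defaultdict


def defect_rate_by_factory(rows):
    """Average the defect flag per factory (hypothetical helper)."""
    totals = defaultdict(lambda: [0.0, 0])  # factory_id -> [defect sum, row count]
    for row in rows:
        bucket = totals[row["factory_id"]]
        bucket[0] += row["defect"]
        bucket[1] += 1
    return {factory: defects / count for factory, (defects, count) in totals.items()}


def factories_to_alert(rows, threshold):
    """Return the factories whose defect rate exceeds the (assumed) threshold."""
    rates = defect_rate_by_factory(rows)
    return sorted(factory for factory, rate in rates.items() if rate > threshold)


rows = [
    {"factory_id": "A", "defect": 0.0},
    {"factory_id": "A", "defect": 0.0},
    {"factory_id": "A", "defect": 1.0},
    {"factory_id": "A", "defect": 0.0},
    {"factory_id": "B", "defect": 1.0},
    {"factory_id": "B", "defect": 1.0},
    {"factory_id": "B", "defect": 0.0},
    {"factory_id": "B", "defect": 0.0},
]

rates = defect_rate_by_factory(rows)
alerts = factories_to_alert(rows, threshold=0.3)
print(rates, alerts)
```

The same aggregate, defect rate by factory, is also the sort of metric a dashboard tile would chart over time.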

Conclusion

Overall, Databricks’ DLT Pipelines, Tempo, SQL Alerts, and Lakeview Dashboards provide a powerful, unified feature set for manufacturing organizations looking to gain real-time insights from their data and improve their operational efficiency. By simplifying the process of managing and analyzing data, Databricks helps manufacturing organizations focus on what they do best: creating, moving, and powering the world. With the challenging volume, velocity, and variety requirements posed by IoT data, you need a unified data intelligence platform that democratizes data insights.

Get started today with our solution accelerator for IoT Time Series Analysis!
