What's the fastest we can load data into RocksDB? We were faced with this challenge because we wanted to enable our customers to quickly try out Rockset on their big datasets. Even though the bulk load of data in LSM trees is an important topic, not much has been written about it. In this post, we'll describe the optimizations that increased RocksDB's bulk load performance by 20x. While we had to solve interesting distributed challenges as well, in this post we'll focus on single node optimizations. We assume some familiarity with RocksDB and the LSM tree data structure.

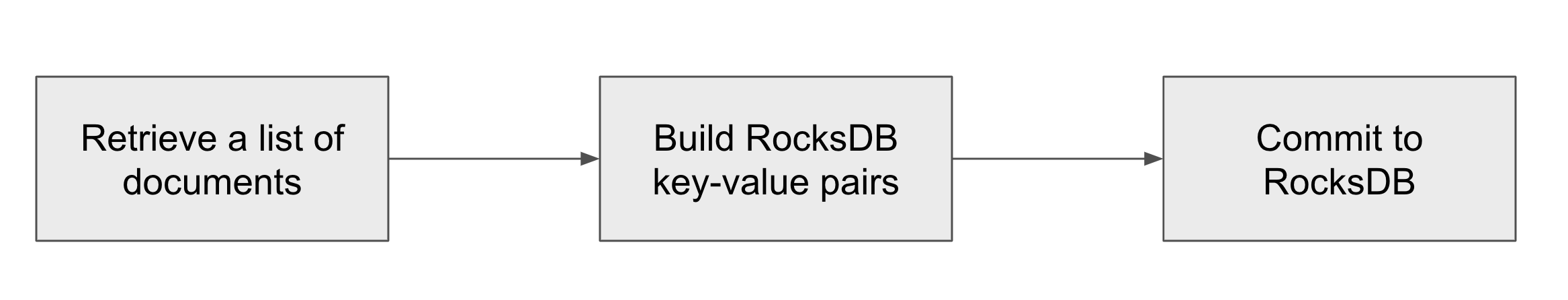

Rockset's write process contains a couple of steps:

- In the first step, we retrieve documents from the distributed log store. Each document is one JSON document encoded in a binary format.

- For every document, we need to insert many key-value pairs into RocksDB. The next step converts the list of documents into a list of RocksDB key-value pairs. Crucially, in this step, we also need to read from RocksDB to determine if the document already exists in the store. If it does, we need to update secondary index entries.

- Finally, we commit the list of key-value pairs to RocksDB.
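The three steps above can be sketched in a few lines of Python. This is only an illustration under loud assumptions: a plain `dict` stands in for RocksDB, a list of JSON strings stands in for the distributed log store, and the `doc/...` and `idx/...` key layout is hypothetical, not Rockset's actual encoding.

```python
import json

def fetch_documents(log):
    """Step 1: pull documents from the log (here, a plain list of JSON strings)."""
    return [json.loads(entry) for entry in log]

def to_key_values(docs, store):
    """Step 2: convert documents to key-value pairs, reading the store to detect updates."""
    pairs = []
    for doc in docs:
        pk = f"doc/{doc['_id']}"          # hypothetical primary key layout
        old = store.get(pk)               # the read that bulk load has to pay for
        if old is not None:
            # The document already exists: drop its stale secondary index entries.
            for field, value in json.loads(old).items():
                if field != "_id":
                    pairs.append((f"idx/{field}/{value}/{doc['_id']}", None))
        pairs.append((pk, json.dumps(doc, sort_keys=True)))
        for field, value in doc.items():
            if field != "_id":
                pairs.append((f"idx/{field}/{value}/{doc['_id']}", ""))
    return pairs

def commit(pairs, store):
    """Step 3: apply the pairs in order; a None value stands for a delete."""
    for key, value in pairs:
        if value is None:
            store.pop(key, None)
        else:
            store[key] = value

store = {}
commit(to_key_values(fetch_documents(['{"_id": 1, "city": "Oslo"}']), store), store)
commit(to_key_values(fetch_documents(['{"_id": 1, "city": "Rome"}']), store), store)
```

After the second commit, the stale `idx/city/Oslo/1` entry is gone and `idx/city/Rome/1` has taken its place, which is why the read in step two cannot simply be skipped.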

We optimized this process for a machine with many CPU cores and where a reasonable chunk of the dataset (but not all) fits in main memory. Different approaches might work better with a small number of cores or when the whole dataset fits into main memory.

Trading off Latency for Throughput

Rockset is designed for real-time writes. As soon as the customer writes a document to Rockset, we have to apply it to our index in RocksDB. We don't have time to build a big batch of documents. This is a shame because increasing the size of the batch minimizes the substantial overhead of per-batch operations. There is no need to optimize the individual write latency in bulk load, though. During bulk load we increase the size of our write batch to hundreds of MB, naturally leading to a higher write throughput.
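To see why batch size matters, here is a toy cost model: every batch pays a fixed overhead, and every document pays a small per-document cost. The overhead and cost figures below are made-up assumptions chosen only to show the shape of the effect; they are not measurements from Rockset.

```python
import math

def throughput(total_docs, batch_size, per_batch_overhead_s, per_doc_cost_s):
    """Documents per second when a fixed overhead is paid once per batch."""
    batches = math.ceil(total_docs / batch_size)
    total_time = batches * per_batch_overhead_s + total_docs * per_doc_cost_s
    return total_docs / total_time

# Hypothetical numbers: 1 ms overhead per batch, 10 microseconds per document.
small = throughput(1_000_000, batch_size=10, per_batch_overhead_s=0.001, per_doc_cost_s=0.00001)
large = throughput(1_000_000, batch_size=100_000, per_batch_overhead_s=0.001, per_doc_cost_s=0.00001)
```

In this model the small batches spend most of their time on per-batch overhead, while the large batches are limited almost entirely by the per-document cost.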

Parallelizing Writes

In regular operation, we only use a single thread to execute the write process. This is enough because RocksDB defers most of the write processing to background threads through compactions. A couple of cores also need to be available for the query workload. During the initial bulk load, query workload is not important. All cores should be busy writing. Thus, we parallelized the write process: once we build a batch of documents, we distribute the batch to worker threads, where each thread independently inserts data into RocksDB. The important design consideration here is to minimize exclusive access to shared data structures; otherwise, the write threads will be waiting, not writing.
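A minimal sketch of that fan-out, assuming a batch is just a list of key-value pairs: the batch is split into chunks and each worker fills its own private output, so no lock is shared between writers. The helper names `split`, `write_chunk` and `parallel_write` are hypothetical, and Python threads are used here only to show the structure, not to demonstrate a speedup.

```python
from concurrent.futures import ThreadPoolExecutor

def split(batch, n):
    """Split a batch into n contiguous chunks of nearly equal size."""
    size, rem = divmod(len(batch), n)
    chunks, start = [], 0
    for i in range(n):
        end = start + size + (1 if i < rem else 0)
        chunks.append(batch[start:end])
        start = end
    return chunks

def write_chunk(chunk):
    # Each worker writes into a private dict, so there is nothing to lock.
    local = {}
    for key, value in chunk:
        local[key] = value
    return local

def parallel_write(batch, workers):
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(write_chunk, split(batch, workers)))

batch = [(f"key{i:04d}", i) for i in range(1000)]
outputs = parallel_write(batch, workers=4)
```

The only coordination point is handing out the chunks and collecting the results; everything in between runs without touching shared state.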

Avoiding Memtable

RocksDB offers a feature, called IngestExternalFile(), where you can build SST files on your own and add them to RocksDB without going through the memtable. This feature is great for bulk load because write threads don't have to synchronize their writes to the memtable. Write threads all independently sort their key-value pairs, build SST files and add them to RocksDB. Adding files to RocksDB is a cheap operation since it involves only a metadata update.
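The idea can be modeled without RocksDB at all. In the sketch below, `ToyStore` is a hypothetical stand-in, a sorted Python list plays the role of an SST file, and `ingest` plays the role of IngestExternalFile(): the sorting happens in the worker, and adding the finished file only appends to a list of files.

```python
import bisect
import threading
from concurrent.futures import ThreadPoolExecutor

class ToyStore:
    """Stand-in for an LSM store that accepts pre-built sorted files."""

    def __init__(self):
        self.files = []                  # the "manifest": a list of sorted files
        self._lock = threading.Lock()

    def ingest(self, sorted_file):
        # Only the file list is touched; contents are neither copied nor re-sorted.
        with self._lock:
            self.files.append(sorted_file)

    def get(self, key):
        # Search newest file first; every file may have to be checked.
        with self._lock:
            files = list(reversed(self.files))
        for f in files:
            i = bisect.bisect_left(f, (key,))
            if i < len(f) and f[i][0] == key:
                return f[i][1]
        return None

def build_and_ingest(store, pairs):
    store.ingest(sorted(pairs))          # sort locally, then hand over the finished file

store = ToyStore()
chunks = [[(f"k{i:03d}", i) for i in range(t, 100, 4)] for t in range(4)]
with ThreadPoolExecutor(max_workers=4) as pool:
    list(pool.map(lambda c: build_and_ingest(store, c), chunks))
```

Note how `get` has to walk the files one by one. That cost is small with four files, but it grows with every file that is ingested and never compacted.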

In the current version, each write thread builds one SST file. However, with many small files, our compaction is slower than if we had a smaller number of bigger files. We are exploring an approach where we would sort key-value pairs from all write threads in parallel and produce one big SST file for each write batch.
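One possible way to get a single large file per batch, shown here purely as an assumption about how such an approach could look, is to let each thread sort its own run and then merge the sorted runs. The sketch covers only the merge step and uses the standard library's `heapq.merge`; `merge_runs` is a hypothetical helper.

```python
import heapq

def merge_runs(runs):
    """Merge several sorted lists of (key, value) pairs into one sorted list."""
    return list(heapq.merge(*runs))

# Three per-thread runs, each already sorted by key.
runs = [sorted((f"k{i:03d}", i) for i in range(t, 12, 3)) for t in range(3)]
one_file = merge_runs(runs)
```

The merge reads each run sequentially, so the result is one sorted output without re-sorting the full batch from scratch.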

Challenges with Turning off Compactions

The most common advice for bulk loading data into RocksDB is to turn off compactions and execute one big compaction at the end. This setup is also mentioned in the official RocksDB Performance Benchmarks. After all, the only reason RocksDB executes compactions is to optimize reads at the expense of write overhead. However, this advice comes with two very important caveats.

At Rockset we have to execute one read for each document write: we need to do one primary key lookup to check if the new document already exists in the database. With compactions turned off, we quickly end up with thousands of SST files and the primary key lookup becomes the biggest bottleneck. To avoid this, we built a bloom filter on all primary keys in the database. Since we usually don't have duplicate documents in the bulk load, the bloom filter enables us to avoid expensive primary key lookups. A careful reader will notice that RocksDB also builds bloom filters, but it does so per file. Checking thousands of bloom filters is still expensive.
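Here is a minimal sketch of a single database-wide bloom filter guarding the primary key lookup. The filter size, the number of hash functions, the SHA-256 based double hashing and the `document_exists` helper are all illustrative assumptions; the post does not describe how Rockset's filter is implemented.

```python
import hashlib

class BloomFilter:
    def __init__(self, num_bits, num_hashes):
        self.num_bits = num_bits
        self.num_hashes = num_hashes
        self.bits = bytearray((num_bits + 7) // 8)

    def _positions(self, key):
        # Derive all bit positions from two halves of one digest (double hashing).
        digest = hashlib.sha256(key.encode("utf-8")).digest()
        h1 = int.from_bytes(digest[:8], "big")
        h2 = int.from_bytes(digest[8:16], "big") | 1
        return [(h1 + i * h2) % self.num_bits for i in range(self.num_hashes)]

    def add(self, key):
        for p in self._positions(key):
            self.bits[p // 8] |= 1 << (p % 8)

    def might_contain(self, key):
        return all(self.bits[p // 8] & (1 << (p % 8)) for p in self._positions(key))

def document_exists(pk, bloom, lookup):
    # A "no" from the filter is definitive, so the expensive lookup is skipped.
    if not bloom.might_contain(pk):
        return False
    return lookup(pk)

store = {"doc/1": "..."}
bloom = BloomFilter(num_bits=1 << 16, num_hashes=7)
for pk in store:
    bloom.add(pk)

lookups = []  # records which keys actually reached the store

def lookup(pk):
    lookups.append(pk)
    return pk in store

found = document_exists("doc/1", bloom, lookup)
missing = document_exists("doc/2", bloom, lookup)
```

A bloom filter can return false positives but never false negatives, so a "maybe" answer still falls through to the real lookup. During a load, each newly written primary key would also have to be added to the filter.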

The second problem is that the final compaction is single-threaded by default. There is a feature in RocksDB that enables multi-threaded compaction with the option max_subcompactions. However, increasing the number of subcompactions for our final compaction doesn't do anything. With all files in level 0, the compaction algorithm cannot find good boundaries for each subcompaction and decides to use a single thread instead. We fixed this by first executing a priming compaction: we first compact a small number of files with CompactFiles(). Now that RocksDB has some files in a non-0 level, which are partitioned by range, it can determine good subcompaction boundaries and the multi-threaded compaction works like a charm with all cores busy.

Our files in level 0 are not compressed: we don't want to slow down our write threads and there is limited benefit in having them compressed. The final compaction compresses the output files.

Conclusion

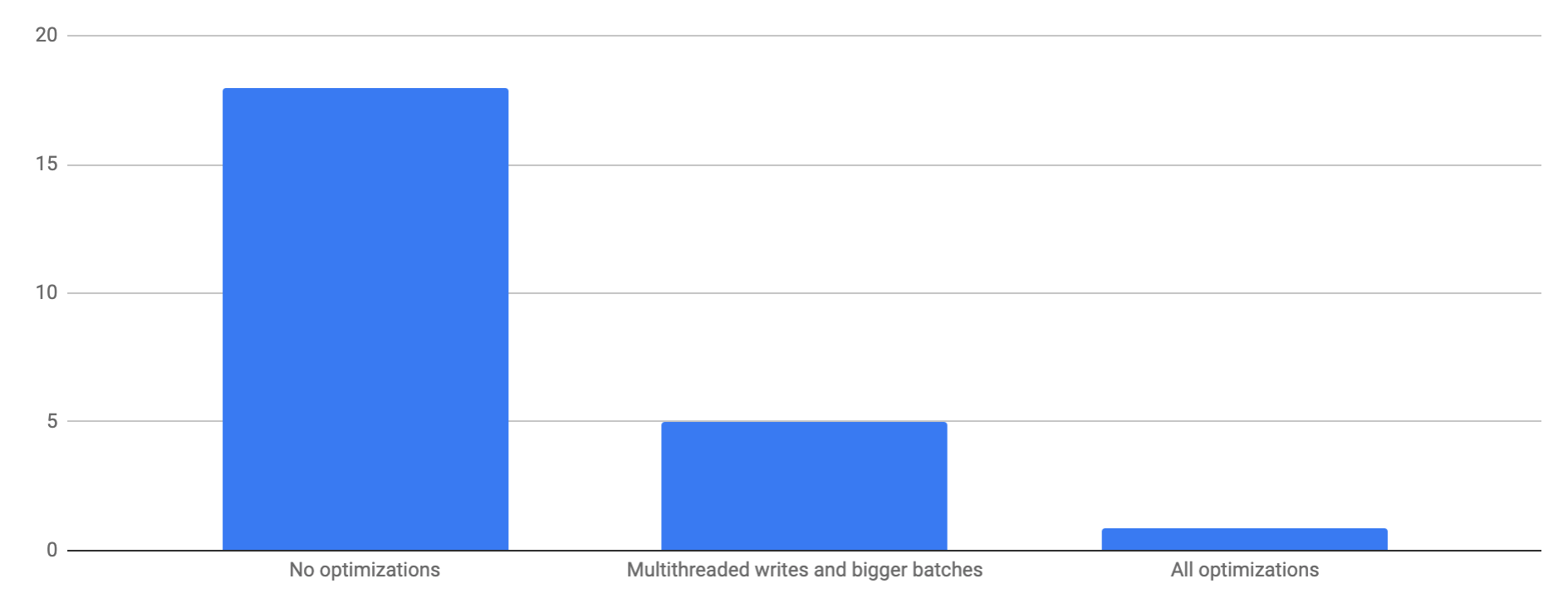

With these optimizations, we can load a dataset of 200GB uncompressed physical bytes (80GB with LZ4 compression) in 52 minutes (70 MB/s) while using 18 cores. The initial load took 35min, followed by 17min of final compaction. With none of the optimizations the load takes 18 hours. By only increasing the batch size and parallelizing the write threads, with no changes to RocksDB, the load takes 5 hours. Note that all of these numbers are measured on a single node RocksDB instance. Rockset parallelizes writes on multiple nodes and can achieve much higher write throughput.
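As a quick cross-check of the headline 20x figure, the speedups can be worked out directly from the timings quoted above. The variable names are ours; the inputs are the numbers from this post.

```python
baseline_min = 18 * 60          # no optimizations: 18 hours
batch_parallel_min = 5 * 60     # bigger batches + parallel writes: 5 hours
optimized_min = 35 + 17         # initial load + final compaction: 52 minutes

speedup_batch_parallel = baseline_min / batch_parallel_min      # 3.6x
speedup_rocksdb_changes = batch_parallel_min / optimized_min    # about 5.8x
speedup_total = baseline_min / optimized_min                    # about 20.8x
compression_ratio = 200 / 80                                    # 2.5x with LZ4
```

Roughly 3.6x comes from batching and parallel writes alone, and a further 5.8x or so from the RocksDB-specific changes (ingesting SST files, the bloom filter and the multi-threaded final compaction).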

Bulk loading of data into RocksDB can be modeled as a large parallel sort where the dataset doesn't fit into memory, with an additional constraint that we also need to read some part of the data while sorting. There's a lot of interesting work on parallel sort out there and we hope to survey some techniques and try applying them in our setting. We also invite other RocksDB users to share their bulk load strategies.

I'm very grateful to everybody who helped with this project: our awesome interns Jacob Klegar and Aditi Srinivasan; and Dhruba Borthakur, Ari Ekmekji and Kshitij Wadhwa.
