This is part two in Rockset’s Making Sense of Real-Time Analytics on Streaming Data series. In part 1, we covered the technology landscape for real-time analytics on streaming data. In this post, we’ll explore the differences between real-time analytics databases and stream processing frameworks. In the coming weeks we’ll publish the following:

- Part 3 will offer recommendations for operationalizing streaming data, including a few sample architectures

Unless you’re already familiar with basic streaming data concepts, please check out part 1, because we’re going to assume some level of working knowledge. With that, let’s dive in.

Differing Paradigms

Stream processing systems and real-time analytics (RTA) databases are both exploding in popularity. However, it’s difficult to talk about their differences in terms of “features”, because you can use either for almost any relevant use case. It’s easier to talk about the different approaches they take. This blog will clarify some conceptual differences, provide an overview of popular tools, and offer a framework for deciding which tools are best suited for specific technical requirements.

Let’s start with a quick summary of both stream processing and RTA databases. Stream processing systems allow you to aggregate, filter, join, and analyze streaming data. “Streams”, as opposed to tables in a relational database context, are the first-class citizens in stream processing. Stream processing approximates something like a continuous query; each event that passes through the system is analyzed according to pre-defined criteria and can be consumed by other systems. Stream processing systems are rarely used as persistent storage. They’re a “process”, not a “store”, which brings us to…

Real-time analytics databases are frequently used for persistent storage (though there are exceptions) and have a bounded context rather than an unbounded context. These databases can ingest streaming events, index the data, and enable millisecond-latency analytical queries against that data. Real-time analytics databases have a lot of overlap with stream processing; they both enable you to aggregate, filter, join, and analyze high volumes of streaming data for use cases like anomaly detection, personalization, logistics, and more. The biggest difference between RTA databases and stream processing tools is that databases provide persistent storage, bounded queries, and indexing capabilities.

So do you need just one? Both? Let’s get into the details.

Stream Processing…How Does It Work?

Stream processing tools manipulate streaming data as it flows through a streaming data platform (Kafka being one of the most popular options, but there are others). This processing happens incrementally, as the streaming data arrives.

Stream processing systems typically employ a directed acyclic graph (DAG), with nodes that are responsible for different functions, such as aggregations, filtering, and joins. The nodes work in a daisy-chain fashion. Data arrives, it hits one node and is processed, and then the processed data passes to the next node. This continues until the data has been processed according to predefined criteria, referred to as a topology. Nodes can live on different servers, connected by a network, as a way to scale horizontally to handle massive volumes of data. This is what’s meant by a “continuous query”. Data comes in, it’s transformed, and its results are generated continuously. When the processing is complete, other applications or systems can subscribe to the processed stream and use it for analytics or within an application or service. One additional note: while many stream processing platforms support declarative languages like SQL, they also support Java, Scala, or Python, which are appropriate for advanced use cases like machine learning.
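To make the daisy-chain idea concrete, here is a minimal, single-process sketch in plain Python. It is not how any particular framework implements a topology; the node names (`filter_node`, `map_node`, `running_total_node`), the `build_topology` helper, and the `amount` field are all hypothetical, and a real system would distribute these nodes across servers.

```python
from typing import Callable, Dict, Iterable, Iterator

Event = Dict[str, float]  # hypothetical event shape, e.g. {"amount": 5.0}


def filter_node(events: Iterable[Event], keep: Callable[[Event], bool]) -> Iterator[Event]:
    """Pass along only the events for which keep(event) is true."""
    for event in events:
        if keep(event):
            yield event


def map_node(events: Iterable[Event], transform: Callable[[Event], Event]) -> Iterator[Event]:
    """Apply a per-event transformation and pass the result downstream."""
    for event in events:
        yield transform(event)


def running_total_node(events: Iterable[Event], field: str) -> Iterator[Event]:
    """Attach a running total of `field` to every event that passes through."""
    total = 0.0
    for event in events:
        total += event[field]
        yield {**event, "running_total": total}


def build_topology(source: Iterable[Event]) -> Iterator[Event]:
    """Chain the nodes: source -> filter -> map -> running total."""
    positive = filter_node(source, lambda e: e["amount"] > 0)
    in_cents = map_node(positive, lambda e: {**e, "amount_cents": e["amount"] * 100})
    return running_total_node(in_cents, "amount_cents")
```

Because each node is a generator, an event flows through the whole chain as soon as it arrives, and a downstream consumer can iterate over `build_topology(source)` to receive results continuously.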

Stateful Or Not?

Stream processing operations can either be stateless or stateful. Stateless stream processing is far simpler. A stateless process doesn’t depend contextually on anything that came before it. Imagine an event containing purchase information. If you have a stream processor filtering out any purchase below $50, that operation is independent of other events, and therefore stateless.
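The purchase filter can be sketched in a few lines of Python. The field name `amount_usd` and the function name are assumptions made for illustration; the point is only that each decision looks at one event and nothing else.

```python
from typing import Dict, Iterable, Iterator

MIN_PURCHASE_USD = 50.0  # threshold from the example in the text


def drop_small_purchases(purchases: Iterable[Dict]) -> Iterator[Dict]:
    """Stateless filter: keep purchases of $50 or more.

    No variable survives from one event to the next, so events can be
    processed in any order and on any worker.
    """
    for purchase in purchases:
        if purchase["amount_usd"] >= MIN_PURCHASE_USD:
            yield purchase
```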

Stateful stream processing takes into account the history of the data. Each incoming item depends not only on its own content, but on the content of the previous item (or multiple previous items). State is required for operations like running totals as well as more complex operations that join data from one stream to another.

For example, consider an application that processes a stream of sensor data. Let’s say that the application needs to compute the average temperature for each sensor over a specific time window. In this case, the stateful processing logic would need to maintain a running total of the temperature readings for each sensor, as well as a count of the number of readings that have been processed for each sensor. This information would be used to compute the average temperature for each sensor over the specified time interval or window.
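A rough sketch of that sensor example follows, assuming fixed (tumbling) windows and readings shaped as `(sensor_id, timestamp_seconds, temperature)` tuples. Both the tuple layout and the function name are hypothetical. The state is exactly what the paragraph describes: a running total and a count per sensor and window.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

Reading = Tuple[str, float, float]  # (sensor_id, timestamp_seconds, temperature)


def windowed_average(
    readings: Iterable[Reading], window_seconds: float = 60.0
) -> Dict[Tuple[str, int], float]:
    """Average temperature per (sensor, window), using running totals and counts."""
    # State: (sensor_id, window_index) -> [running_total, reading_count]
    state = defaultdict(lambda: [0.0, 0])
    for sensor_id, timestamp, temperature in readings:
        window_index = int(timestamp // window_seconds)
        entry = state[(sensor_id, window_index)]
        entry[0] += temperature  # update the running total
        entry[1] += 1            # update the count
    return {key: total / count for key, (total, count) in state.items()}
```

For simplicity this version keeps all state in memory and returns results once the input ends; a production system would typically emit each window’s average when the window closes and then evict its state.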

These state designations are related to the “continuous query” concept that we discussed in the introduction. When you query a database, you’re querying the current state of its contents. In stream processing, a continuous, stateful query requires maintaining state separately from the DAG, which is done by querying a state store, i.e. an embedded database within the framework. State stores can reside in memory, on disk, or in deep storage, and there’s a latency / cost tradeoff for each.

Stateful stream processing is quite complex. Architectural details are beyond the scope of this blog, but here are four challenges inherent in stateful stream processing:

- Managing state is expensive: Maintaining and updating the state requires significant processing resources. The state must be updated for each incoming data item, and this can be difficult to do efficiently, especially for high-throughput data streams.

- It’s tough to handle out-of-order data: This is an absolute must for all stateful stream processing. If data arrives out of order, the state needs to be corrected and updated, which adds processing overhead.

- Fault tolerance takes work: Significant steps must be taken to ensure data is not lost or corrupted in the event of a failure. This requires robust mechanisms for checkpointing, state replication, and recovery.

- Debugging and testing is tricky: The complexity of the processing logic and stateful context can make reproducing and diagnosing errors in these systems difficult. Much of this is due to the distributed nature of stream processing systems; multiple components and multiple data sources make root cause analysis a challenge.
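To illustrate why out-of-order data adds overhead, here is one common approach sketched in plain Python: buffer events and release them in event-time order once a “watermark” has passed them. This is a simplified illustration, not the mechanism of any specific framework; the function name, the `(event_time, payload)` tuple shape, and the choice to silently drop events that arrive behind the watermark are all assumptions.

```python
import heapq
from typing import Iterable, Iterator, List, Tuple

Event = Tuple[float, str]  # (event_time_seconds, payload)


def reorder_with_watermark(events: Iterable[Event], allowed_lateness: float) -> Iterator[Event]:
    """Emit events in event-time order, tolerating a bounded amount of lateness."""
    buffer: List[Event] = []       # min-heap ordered by event time
    max_seen = float("-inf")       # highest event time observed so far
    watermark = float("-inf")      # events at or before this time are released
    for event in events:
        event_time = event[0]
        if event_time < watermark:
            continue               # too late: in this sketch the event is dropped
        heapq.heappush(buffer, event)
        max_seen = max(max_seen, event_time)
        watermark = max_seen - allowed_lateness
        while buffer and buffer[0][0] <= watermark:
            yield heapq.heappop(buffer)
    while buffer:                  # end of input: flush whatever is left
        yield heapq.heappop(buffer)
```

The buffer is itself state that must be stored, checkpointed, and recovered, which is where the cost in the list above comes from. A larger `allowed_lateness` loses fewer events but holds more state and delays results.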

While stateless stream processing has value, the more interesting use cases require state. Dealing with state makes stream processing tools more difficult to work with than RTA databases.

Where Do I Start With Processing Tools?

In the past few years, the number of available stream processing systems has grown significantly. This blog will cover a few of the big players, both open source and fully managed, to give readers a sense of what’s available.

Apache Flink

Apache Flink is an open-source, distributed framework designed to perform real-time stream processing. It was developed by the Apache Software Foundation and is written in Java and Scala. Flink is one of the more popular stream processing frameworks due to its flexibility, performance, and community (Lyft, Uber, and Alibaba are all users, and the open-source community for Flink is quite active). It supports a wide variety of data sources and programming languages, and, of course, supports stateful stream processing.

Flink uses a dataflow programming model that allows it to analyze streams as they’re generated, rather than in batches. It relies on checkpoints to correctly process data even if a subset of nodes fail. This is possible because Flink is a distributed system, but beware that its architecture requires considerable expertise and operational upkeep to tune, maintain, and debug.

Apache Spark Streaming

Spark Streaming is another popular stream processing framework. It is also open source, and is appropriate for high-complexity, high-volume use cases.

Unlike Flink, Spark Streaming uses a micro-batch processing model, where incoming data is processed in small, fixed-size batches. This results in higher end-to-end latencies. As for fault tolerance, Spark Streaming uses a mechanism called “RDD lineage” to recover from failures, which can sometimes cause significant overhead in processing time. There’s support for SQL through the Spark SQL library, but it’s more limited than other stream processing libraries, so double check that it can support your use case. On the other hand, Spark Streaming has been around longer than other systems, which makes it easier to find best practices and even free, open-source code for common use cases.
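The micro-batch idea can be shown with a toy Python helper. This is not Spark code, and the function name is hypothetical. The sketch groups events by count to keep things short, whereas a real micro-batch engine would usually cut batches on a time interval.

```python
from itertools import islice
from typing import Iterable, Iterator, List, TypeVar

T = TypeVar("T")


def micro_batches(events: Iterable[T], batch_size: int) -> Iterator[List[T]]:
    """Group an event stream into small batches of at most `batch_size` items."""
    iterator = iter(events)
    while True:
        batch = list(islice(iterator, batch_size))
        if not batch:
            return  # the stream is exhausted
        yield batch
```

Every event waits until its batch is complete before any downstream work starts, which is the source of the extra end-to-end latency compared with processing each event as it arrives.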

Confluent Cloud and ksqlDB

As of today, Confluent Cloud’s primary stream processing offering is ksqlDB, which combines KSQL’s familiar SQL-esque syntax with additional features such as connectors, a persistent query engine, windowing, and aggregation.

One important feature of ksqlDB is that it’s a fully-managed service, which makes it simpler to deploy and scale. Contrast this to Flink, which can be deployed in a variety of configurations, including as a standalone cluster, on YARN, or on Kubernetes (note that there are also fully-managed versions of Flink). ksqlDB supports a SQL-like query language, provides a range of built-in functions and operators, and can also be extended with custom user-defined functions (UDFs) and operators. ksqlDB is also tightly integrated with the Kafka ecosystem and is designed to work seamlessly with Kafka streams, topics, and brokers.

But Where Will My Data Live?

Real-time analytics (RTA) databases are categorically different from stream processing systems. They belong to a distinct and growing industry, and yet have some overlap in functionality. For an overview of what we mean by “RTA database”, check out this primer.

In the context of streaming data, RTA databases are used as a sink for streaming data. They’re similarly useful for real-time analytics and data applications, but they serve up data when they’re queried, rather than continuously. When you ingest data into an RTA database, you have the option to configure ingest transformations, which can do things like filter, aggregate, and in some cases join data continuously. The data resides in a table, which you cannot “subscribe” to the same way you can with streams.

Besides the table vs. stream distinction, another important feature of RTA databases is their ability to index data; stream processing frameworks index very narrowly, while RTA databases have a large menu of options. Indexes are what allow RTA databases to serve millisecond-latency queries, and each type of index is optimized for a particular query pattern. The best RTA database for a given use case will often come down to indexing options. If you’re looking to execute extremely fast aggregations on historical data, you’ll likely choose a column-oriented database with a primary index. Looking to look up data on a single order? Choose a database with an inverted index. The point here is that every RTA database makes different indexing decisions. The best solution will depend on your query patterns and ingest requirements.
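As a toy illustration of why index choice follows query pattern, the sketch below builds two structures over the same rows in plain Python: an inverted index (value to row positions) for point lookups, and a column layout (field to list of values) for aggregations. The function names and the `orders` fields are hypothetical, and real databases use far more sophisticated, disk-aware structures.

```python
from collections import defaultdict
from typing import Any, Dict, List


def build_inverted_index(rows: List[Dict[str, Any]], field: str) -> Dict[Any, List[int]]:
    """Map each value of `field` to the positions of the rows that contain it."""
    index: Dict[Any, List[int]] = defaultdict(list)
    for position, row in enumerate(rows):
        index[row[field]].append(position)
    return dict(index)


def build_column_store(rows: List[Dict[str, Any]]) -> Dict[str, List[Any]]:
    """Store each field's values contiguously, one list per column."""
    columns: Dict[str, List[Any]] = defaultdict(list)
    for row in rows:
        for name, value in row.items():
            columns[name].append(value)
    return dict(columns)


orders = [
    {"order_id": 1, "customer": "ann", "amount": 30},
    {"order_id": 2, "customer": "bob", "amount": 70},
    {"order_id": 3, "customer": "ann", "amount": 15},
]

# Point lookup: jump straight to the matching rows without scanning the table.
ann_rows = build_inverted_index(orders, "customer")["ann"]

# Aggregation: read one contiguous column instead of every full row.
total_amount = sum(build_column_store(orders)["amount"])
```

Each structure must also be kept up to date as new events arrive, which is why ingest requirements matter alongside query patterns.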

One final point of comparison: enrichment. In fairness, you can enrich streaming data with additional data in a stream processing framework. You can essentially “join” (to use database parlance) two streams in real time. Inner joins, left or right joins, and full outer joins are all supported in stream processing. Depending on the system, you can also query the state to join historical data with live data. Just know that this can be difficult; there are many tradeoffs to be made around cost, complexity, and latency. RTA databases, on the other hand, have simpler methods for enriching or joining data. A common method is denormalizing, which is essentially flattening and aggregating two tables. This method has its issues, but there are other options as well. Rockset, for example, is able to perform inner joins on streaming data at ingest, and any type of join at query time.
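The flavor of enrichment described above can be sketched as an inner join between a stream of orders and a lookup table of customers, producing flattened (denormalized) records. This is a generic illustration in plain Python, not Rockset’s or any framework’s API; the function and field names are assumptions.

```python
from typing import Any, Dict, Iterable, Iterator


def enrich_orders(
    orders: Iterable[Dict[str, Any]],
    customers_by_id: Dict[int, Dict[str, Any]],
) -> Iterator[Dict[str, Any]]:
    """Inner-join each order with its customer and emit one flat record."""
    for order in orders:
        customer = customers_by_id.get(order["customer_id"])
        if customer is None:
            continue  # inner join: orders without a matching customer are dropped
        yield {
            **order,
            "customer_name": customer["name"],
            "customer_tier": customer["tier"],
        }
```

Switching `continue` to emitting the order with empty customer fields would turn this into a left join. The harder part in practice is keeping `customers_by_id` current and consistent while the stream keeps flowing, which is where the cost, complexity, and latency tradeoffs come in.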

The upshot of RTA databases is that they enable users to execute complex, millisecond-latency queries against data that’s 1-2 seconds old. Both stream processing frameworks and RTA databases allow users to transform and serve data. They both offer the ability to enrich, aggregate, filter, and otherwise analyze streams in real time.

Let’s get into three popular RTA databases and evaluate their strengths and weaknesses.

Elasticsearch

Elasticsearch is an open-source, distributed search database that allows you to store, search, and analyze large volumes of data in near real-time. It’s quite scalable (with work and expertise), and commonly used for log analysis, full-text search, and real-time analytics.

In order to enrich streaming data with additional data in Elasticsearch, you must denormalize it. This requires aggregating and flattening data before ingestion. Most stream processing tools do not require this step. Elasticsearch users typically see high performance for real-time analytical queries on text fields. However, if Elasticsearch receives a high volume of updates, performance degrades significantly. Furthermore, when an update or insert occurs upstream, Elasticsearch has to reindex that data for each of its replicas, which consumes compute resources. Many streaming data use cases are append only, but many are not; consider both your update frequency and denormalization before choosing Elasticsearch.

Apache Druid

Apache Druid is a high-performance, column-oriented data store that is designed for sub-second analytical queries and real-time data ingestion. It’s traditionally known as a timeseries database, and excels at filtering and aggregations. Druid is a distributed system, often used in big data applications. It’s known for both performance and being tricky to operationalize.

When it comes to transformations and enrichment, Druid has the same denormalization challenges as Elasticsearch. If you’re relying on your RTA database to join multiple streams, consider handling those operations elsewhere; denormalizing is a pain. Updates present a similar challenge. If Druid ingests an update from streaming data, it must reindex all data in the affected segment, which is a subset of data corresponding to a time range. This introduces both latency and compute cost. If your workload is update-heavy, consider choosing a different RTA database for streaming data. Finally, it’s worth noting that there are some SQL features that are not supported by Druid’s query language, such as subqueries, correlated queries, and full outer joins.

Rockset

Rockset is a fully-managed real-time analytics database built for the cloud; there’s nothing to manage or tune. It enables millisecond-latency, analytical queries using full-featured SQL. Rockset is well suited to a wide variety of query patterns due to its Converged Index(™), which combines a column index, a row index, and a search index. Rockset’s custom SQL query optimizer automatically analyzes each query and chooses the appropriate index based on the fastest query plan. Additionally, its architecture allows for full isolation of compute used for ingesting data and compute used for querying data (more detail here).

When it comes to transformations and enrichment, Rockset has many of the same capabilities as stream processing frameworks. It supports joining streams at ingest (inner joins only), enriching streaming data with historical data at query time, and it entirely obviates denormalization. In fact, Rockset can ingest and index schemaless events data, including deeply nested objects and arrays. Rockset is a fully mutable database, and can handle updates without performance penalty. If ease of use and price / performance are important factors, Rockset is an ideal RTA database for streaming data. For a deeper dive on this topic, check out this blog.

Wrapping Up

Stream processing frameworks are well suited for enriching streaming data, filtering and aggregations, and advanced use cases like image recognition and natural language processing. However, these frameworks are not typically used for persistent storage and have only basic support for indexes; they often require an RTA database for storing and querying data. Further, they require significant expertise to set up, tune, maintain, and debug. Stream processing tools are both powerful and high maintenance.

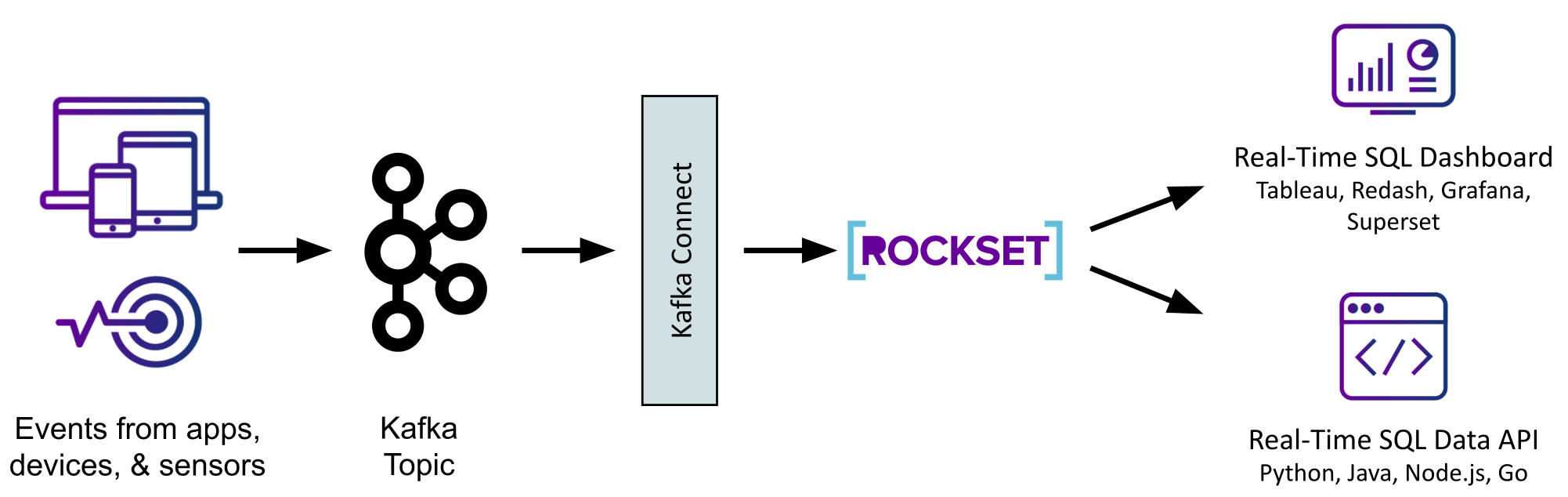

RTA databases are ideal stream processing sinks. Their support for high-volume ingest and indexing enables sub-second analytical queries on real-time data. Connectors for many other common data sources, like data lakes, warehouses, and databases, allow for a broad range of enrichment capabilities. Some RTA databases, like Rockset, also support streaming joins, filtering, and aggregations at ingest.

The next post in the series will explain how to operationalize RTA databases for advanced analytics on streaming data. In the meantime, if you’d like to get smart on Rockset’s real-time analytics database, you can start a free trial right now. We provide $300 in credits and don’t require a credit card number. We also have many sample data sets that mimic the characteristics of streaming data. Go ahead and kick the tires.
